Bias in AI Models

Types of Bias, Sources, and Mitigation Strategies

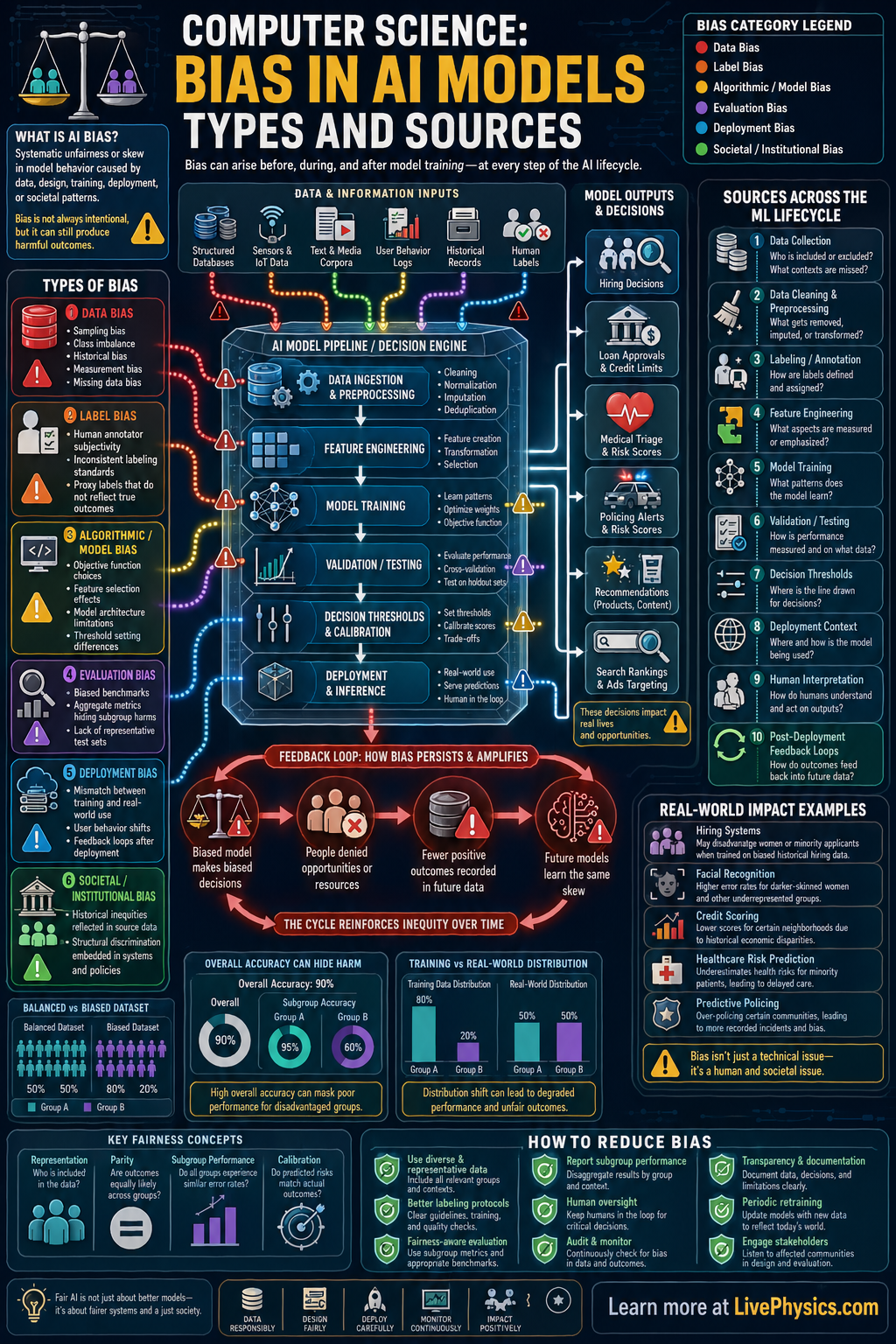

Bias in AI models happens when a system produces unfair, systematically skewed, or less accurate results for some groups, situations, or outcomes. This matters because AI is used in hiring, lending, healthcare, policing, education, and online platforms where decisions can affect real lives. A biased model can reinforce existing inequalities even if it appears mathematically sophisticated. Understanding where bias comes from helps students evaluate AI systems more critically and design better ones.

Bias can enter at many stages of the machine learning pipeline, including data collection, labeling, feature selection, model training, evaluation, and deployment. If the training data are unrepresentative, the model may learn patterns that do not generalize fairly. If labels reflect human prejudice or measurement errors, the model can encode those distortions. Even after training, feedback loops and changing real-world conditions can create new bias over time. Because bias can come from how data are gathered and how systems are used, and not only from the algorithm, it is both a technical and a social issue.
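
The feedback-loop idea can be made concrete with a toy simulation. This is a minimal sketch under strong simplifying assumptions: two areas have identical true incident rates, a greedy rule sends all patrols to the area with the most recorded incidents, and incidents are recorded only where patrols go. The names `simulate` and `TRUE_INCIDENTS_PER_ROUND` are hypothetical and do not come from any real system.

```python
# Hypothetical illustration of a runaway feedback loop.
# Both areas have the SAME true number of incidents per round, but incidents
# are only recorded where patrols are sent, and patrols are sent to whichever
# area has the most recorded incidents so far (assumed greedy rule).

TRUE_INCIDENTS_PER_ROUND = 10  # assumed identical in both areas


def simulate(recorded: list[int], rounds: int) -> list[int]:
    """Return recorded incident counts after `rounds` of greedy allocation."""
    recorded = list(recorded)  # copy so the caller's list is not modified
    for _ in range(rounds):
        # "Prediction": the area with more recorded incidents looks riskier.
        # On a tie, max() returns the first index.
        target = max(range(len(recorded)), key=lambda i: recorded[i])
        # Only the patrolled area generates new records (new training data).
        recorded[target] += TRUE_INCIDENTS_PER_ROUND
    return recorded


if __name__ == "__main__":
    # Area 0 starts with a one-incident head start despite equal true rates.
    print(simulate([11, 10], rounds=5))  # [61, 10]
```

Because the unpatrolled area never produces new records, the data never reveal that both areas are equally risky, and a one-incident gap grows every round.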

Key Facts

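- Dataset bias occurs when P_train(x, y) ≠ P_real(x, y), that is, when the joint distribution of inputs x and labels y in the training data differs from the distribution in the real population the model will serve.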

- Sampling bias happens when some groups are overrepresented or underrepresented in the dataset used for training.

- Label bias occurs when the target value y contains human judgment errors, historical prejudice, or inconsistent annotation.

- Measurement bias appears when features x are recorded differently across groups, making the same variable mean different things.

- A model can have high overall accuracy (correct predictions / total predictions) while still performing poorly for a subgroup.

- Feedback loop bias happens when a model's outputs shape the data collected afterward, so today's predictions influence the next round of training data.
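
Checking accuracy group by group, rather than only overall, is how the hidden subgroup problem described above shows up in practice. The sketch below is a minimal plain-Python version; the function name `accuracy_by_group` and the ten example cases are hypothetical, chosen so that a small group is always misclassified while the overall score still looks good.

```python
from collections import defaultdict


def accuracy_by_group(groups, y_true, y_pred):
    """Return (overall accuracy, {group: accuracy}) for parallel sequences."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for g, t, p in zip(groups, y_true, y_pred):
        total[g] += 1
        correct[g] += int(t == p)  # 1 if the prediction matches the label
    overall = sum(correct.values()) / sum(total.values())
    per_group = {g: correct[g] / total[g] for g in total}
    return overall, per_group


if __name__ == "__main__":
    # Hypothetical data: 8 cases from group "A", 2 cases from group "B".
    groups = ["A"] * 8 + ["B"] * 2
    y_true = [1, 0, 1, 1, 0, 0, 1, 0, 1, 0]
    y_pred = [1, 0, 1, 1, 0, 0, 1, 0, 0, 1]
    overall, per_group = accuracy_by_group(groups, y_true, y_pred)
    print(overall)    # 0.8
    print(per_group)  # {'A': 1.0, 'B': 0.0}
```

The overall score of 80 percent hides the fact that every case from the smaller group is wrong.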

Vocabulary

- Sampling bias: a distortion caused by collecting data that do not fairly represent the full population.

- Label bias: error or unfairness in the target values used to train a model.

- Feature: a measurable input variable the model uses to make a prediction.

- Distribution shift: a change between the data a model was trained on and the data it sees later in use.

- Fairness metric: a quantitative measure used to compare model behavior across different groups.
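
Two commonly used fairness metrics are the demographic parity difference (the gap in positive-prediction rates between groups) and the equal opportunity difference (the gap in true positive rates). The sketch below computes both in plain Python; the function names, dictionary keys, and hiring data are hypothetical, and which metric is appropriate depends on the application.

```python
def rate(values):
    """Fraction of 1s in a list of 0/1 values (0.0 for an empty list, a simplification)."""
    return sum(values) / len(values) if values else 0.0


def fairness_report(groups, y_true, y_pred):
    """Compare selection rate and true positive rate across groups.

    Returns per-group rates plus the largest gap between any two groups:
    - selection rate gap (demographic parity difference)
    - true positive rate gap (equal opportunity difference)
    """
    selection, tpr = {}, {}
    for g in sorted(set(groups)):
        # All predictions for members of group g.
        preds = [p for grp, p in zip(groups, y_pred) if grp == g]
        # Predictions for members of g whose true label is positive.
        preds_on_pos = [p for grp, t, p in zip(groups, y_true, y_pred)
                        if grp == g and t == 1]
        selection[g] = rate(preds)
        tpr[g] = rate(preds_on_pos)
    return {
        "selection_rate": selection,
        "tpr": tpr,
        "demographic_parity_diff": max(selection.values()) - min(selection.values()),
        "equal_opportunity_diff": max(tpr.values()) - min(tpr.values()),
    }


if __name__ == "__main__":
    # Hypothetical hiring data: 1 = qualified (y_true) or selected (y_pred).
    groups = ["A"] * 4 + ["B"] * 4
    y_true = [1, 1, 0, 0, 1, 1, 0, 0]
    y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
    report = fairness_report(groups, y_true, y_pred)
    print(report["selection_rate"])           # {'A': 0.75, 'B': 0.25}
    print(report["demographic_parity_diff"])  # 0.5
    print(report["equal_opportunity_diff"])   # 0.5
```

A value of 0 for either gap means the groups are treated alike on that measure; larger values mean a bigger disparity.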

Common Mistakes to Avoid

- Assuming high accuracy means the model is fair, because overall performance can hide large errors for smaller subgroups. Always check results by group, not just one summary score.

- Treating biased historical data as objective truth, because past decisions may already reflect discrimination or unequal access. A model trained on such data can repeat those patterns.

- Ignoring how labels were created, because human annotators, proxies, or institutional records may introduce systematic error. Investigate whether the target variable truly measures what you want.

- Testing only once before deployment, because real-world data and user behavior change over time. Monitor models after release to detect drift and new sources of bias.
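
One simple way to monitor for drift after release is to compare the distribution of a variable in the training data with its distribution in recent production data. The sketch below uses the population stability index (PSI), one common drift score, on categorical counts; the function name `psi`, the `eps` smoothing value, and the group counts are assumptions for illustration. A shift score like this flags that the population has changed; it should be paired with per-group performance checks to see whether the change has created new bias.

```python
import math


def psi(expected_counts, actual_counts, eps=1e-6):
    """Population stability index between two categorical distributions.

    expected_counts: counts per category in the training (reference) data
    actual_counts:   counts per category in recent production data
    eps (assumed value) avoids division by zero and log(0) for empty categories.
    """
    categories = set(expected_counts) | set(actual_counts)
    n_exp = sum(expected_counts.values())
    n_act = sum(actual_counts.values())
    total = 0.0
    for c in categories:
        e = max(expected_counts.get(c, 0) / n_exp, eps)
        a = max(actual_counts.get(c, 0) / n_act, eps)
        total += (a - e) * math.log(a / e)
    return total


if __name__ == "__main__":
    # Hypothetical group counts: training data vs. last month's users.
    training = {"A": 800, "B": 200}
    live = {"A": 500, "B": 500}
    print(round(psi(training, live), 3))  # 0.416
```

A PSI of 0 means the two distributions match; larger values mean a bigger shift, and the threshold that triggers a review is a choice each team has to make.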

Practice Questions

1. A hiring model is trained on 1000 past applications. Of these, 800 are from Group A and 200 are from Group B, while the real applicant pool is 50 percent Group A and 50 percent Group B. Identify the type of bias and calculate the percentage representation of each group in the training data.

2. A medical screening model has 90 correct predictions out of 100 cases for Group A and 30 correct predictions out of 50 cases for Group B. Calculate the accuracy for each group and state whether overall accuracy alone would hide a fairness problem.

3. A school uses an AI system to predict student success based partly on past disciplinary records. Explain how historical bias in those records could create label or feature bias in the model and affect future decisions.
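
After attempting questions 1 and 2 by hand, you can check the arithmetic with a short script like the one below. The helper names `shares` and `accuracy` are hypothetical; the counts come directly from the questions.

```python
def shares(counts):
    """Percentage of the dataset belonging to each group."""
    total = sum(counts.values())
    return {g: 100 * n / total for g, n in counts.items()}


def accuracy(correct, total):
    """Accuracy = correct predictions / total predictions."""
    return correct / total


# Question 1: training data composition vs. a 50/50 applicant pool.
train = {"A": 800, "B": 200}
print(shares(train))  # {'A': 80.0, 'B': 20.0}

# Question 2: per-group accuracy vs. overall accuracy.
acc_a = accuracy(90, 100)
acc_b = accuracy(30, 50)
overall = accuracy(90 + 30, 100 + 50)
print(acc_a, acc_b, overall)  # 0.9 0.6 0.8
```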