Big-O Complexity Cheat Sheet

Time and Space Complexity of Common Algorithms

Related Tools

Related Labs

Related Worksheets

Related Cheat Sheets

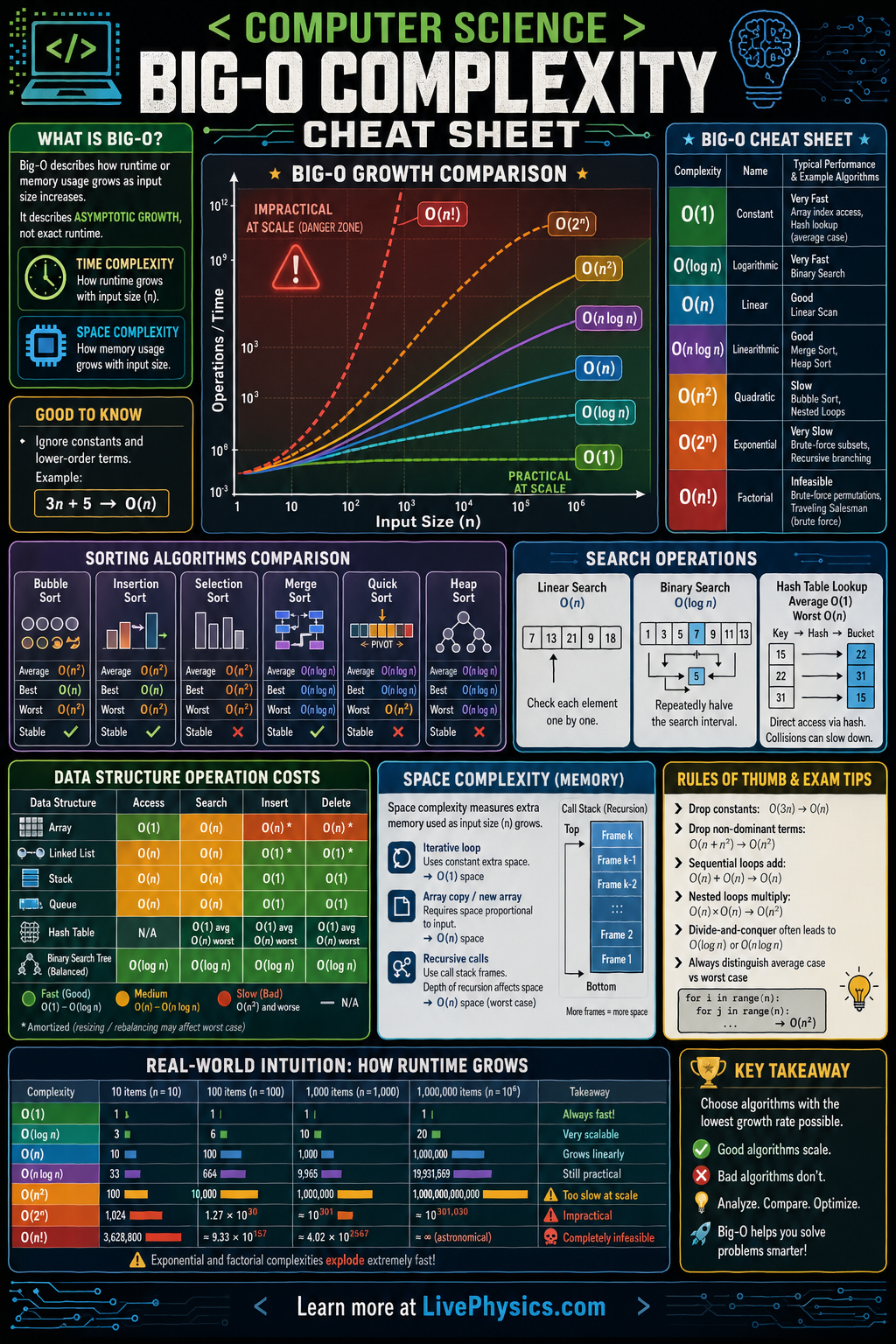

Big-O notation describes how the running time or space requirement of an algorithm grows as the input size n increases. It expresses an upper bound on the growth rate, ignoring constant factors and lower-order terms - only the dominant term matters as n becomes large. O(1) (constant) is the best case: execution time doesn't change regardless of input size. O(n) (linear) doubles when input doubles. O(n²) (quadratic) quadruples when input doubles.

Understanding Big-O lets you compare algorithms objectively and predict performance at scale. An O(n log n) sort like Merge Sort will always outperform an O(n²) sort like Bubble Sort for large datasets, even if the latter has smaller constant factors. Space complexity applies the same notation to memory usage. The distinction between worst-case, average-case, and best-case complexity is also important: Quick Sort is O(n log n) on average but O(n²) in the worst case with a bad pivot choice.

Key Facts

- O(1): constant time - array index lookup, hash table access

- O(log n): halves the problem each step - binary search, balanced BST operations

- O(n): linear - linear search, single-pass array traversal

- O(n log n): divide-and-conquer sorts - Merge Sort, Heap Sort, Quick Sort (average)

- O(n²): nested loops - Bubble Sort, Insertion Sort, Selection Sort

- O(2ⁿ): exponential - brute-force subset enumeration; impractical for n > ~30

Vocabulary

- Big-O notation

- A mathematical notation describing the upper bound on an algorithm's growth rate, expressed as a function of input size n.

- Time complexity

- The number of basic operations an algorithm performs as a function of input size; measured in Big-O notation.

- Space complexity

- The amount of memory an algorithm uses as a function of input size, including both auxiliary and input storage.

- Worst-case complexity

- The maximum running time of an algorithm over all possible inputs of size n; what Big-O typically expresses.

- Amortized complexity

- The average time per operation over a sequence of operations, allowing some operations to be more expensive than others (e.g. dynamic array resizing).

Common Mistakes to Avoid

- Ignoring constant factors and lower-order terms. O(2n) and O(n) are the same class - Big-O drops constants. But in practice, constant factors matter at small n; use profiling for real optimization.

- Assuming a better Big-O always means faster in practice. An O(n log n) algorithm with high constant overhead can be slower than an O(n²) algorithm for small n. Profiling beats theoretical analysis for small inputs.

- Confusing worst-case with average-case. Quick Sort is O(n²) worst case but O(n log n) average case. Always specify which case you mean.

- Forgetting space complexity when evaluating algorithms. Merge Sort is O(n log n) time but O(n) space. In memory-constrained environments, an in-place O(n²) sort may be preferable.

Practice Questions

- 1 What is the Big-O time complexity of finding an element in a sorted array using binary search? Explain why.

- 2 Nested loops each running n times give O(n²). What is the complexity of three nested loops each running n times, and give an example algorithm with this complexity.

- 3 Quick Sort has O(n²) worst-case but O(n log n) average-case complexity. Describe the worst-case input that triggers O(n²) behavior.