LLM vs Traditional NLP Models

Transformers vs Older Pipelines - BERT, RNNs, and Rule-Based NLP

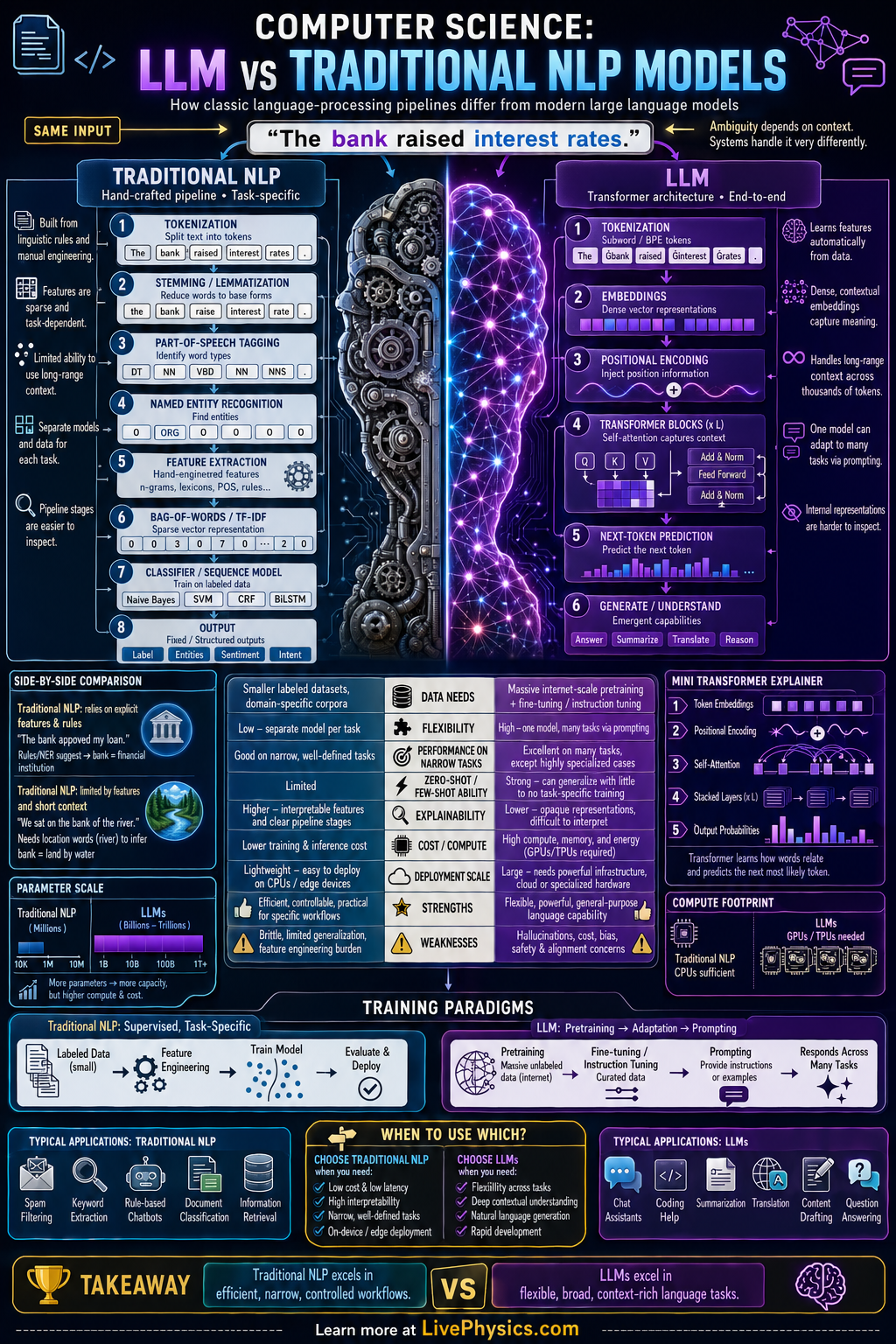

Natural language processing has shifted from systems assembled from many separate, hand-designed steps to large models that learn broad language patterns from massive text datasets. Traditional NLP models often rely on tokenization, feature extraction, and task-specific classifiers, while LLMs use deep neural networks trained end to end. This comparison matters because the two approaches differ in flexibility, data needs, interpretability, and cost. Understanding both helps students see why modern AI systems can generate fluent text, and also why older methods remain useful in many applications.

Traditional NLP usually breaks language tasks into modules such as preprocessing, part-of-speech tagging, parsing, and classification. LLMs instead learn distributed representations with billions of parameters, typically using the transformer architecture and self-attention to model context across long sequences. In practice, traditional models can be faster, cheaper, and easier to debug for narrow tasks, while LLMs can adapt to summarization, translation, question answering, and coding with little or no task-specific redesign. The tradeoff is that LLMs demand far more computation and larger datasets, and they require careful evaluation for bias, hallucination, and reliability.

Key Facts

- Traditional NLP often follows pipeline processing: text -> tokenize -> features -> model -> output.

- A classic linear text classifier may use y = sign(w·x + b), where x is a feature vector (such as word counts or TF-IDF values), w is a learned weight vector, and b is a bias term.

- LLMs commonly use transformers with self-attention: Attention(Q,K,V) = softmax(QK^T / sqrt(d_k))V, where Q, K, and V are the query, key, and value matrices and d_k is the key dimension.

- Traditional NLP usually needs task-specific features, while LLMs learn general representations from pretraining on large corpora.

- Model size differs greatly: traditional models may have thousands to millions of parameters, while LLMs often have billions or more.

- Training cost scales with data and model size, and inference cost is often much higher for LLMs than for simpler NLP models.
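The pipeline (text -> tokenize -> features -> model -> output) and the linear classifier y = sign(w·x + b) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production system. The three-word vocabulary, the weights, the bias, and the choice of +1 for spam are hypothetical values picked by hand. A real classifier would learn w and b from labeled data, for example with logistic regression or a support vector machine.

```python
import re
from collections import Counter

# Hypothetical vocabulary, weights, and bias chosen by hand for illustration only.
# A real classifier would learn WEIGHTS and BIAS from labeled examples.
VOCAB = ["free", "meeting", "winner"]
WEIGHTS = [0.9, -1.2, 1.1]
BIAS = -0.5


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into runs of letters."""
    return re.findall(r"[a-z]+", text.lower())


def extract_features(tokens: list[str]) -> list[int]:
    """Build a bag-of-words count vector x over VOCAB."""
    counts = Counter(tokens)
    return [counts[word] for word in VOCAB]


def linear_score(w: list[float], x: list[int], b: float) -> float:
    """Compute the linear score w·x + b."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def classify(text: str) -> int:
    """text -> tokenize -> features -> model -> output (+1 = spam, -1 = not spam, by assumption)."""
    x = extract_features(tokenize(text))
    score = linear_score(WEIGHTS, x, BIAS)
    # sign(score); a score of exactly 0 is mapped to +1, an arbitrary tie-breaking choice.
    return 1 if score >= 0 else -1


print(classify("You are a winner! Claim your free prize"))  # prints 1
print(classify("Team meeting moved to Friday"))  # prints -1
```

Each stage is a separate, inspectable function. This is part of why such pipelines are easy to debug: the tokens or the feature vector can be printed at any step to see exactly why a message received its label.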

Vocabulary

- Traditional NLP: An approach to language processing that uses separate steps and often hand-designed features for specific tasks.

- Large Language Model (LLM): A very large neural network trained on huge text datasets to predict and generate language across many tasks.

- Transformer: A neural network architecture that processes sequences using attention rather than relying only on step-by-step recurrence.

- Feature Extraction: The process of converting raw text into measurable inputs such as word counts, n-grams, or embeddings.

- Self-Attention: A mechanism that lets a model weigh how strongly each word in a sequence relates to the other words in the same sequence.
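Self-attention is easier to see with numbers. The formula softmax(QK^T / sqrt(d_k))V can be sketched in plain Python to show how each position's output becomes a weighted mix of every position in the same sequence. This sketch rests on several simplifying assumptions. The toy vectors are made up, there is a single attention head, and the learned query, key, and value projections are replaced by the identity, so Q = K = V = X.

```python
import math


def softmax(scores):
    """Numerically stable softmax: subtract the max score before exponentiating."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(Q, K, V):
    """Scaled dot-product attention, softmax(Q K^T / sqrt(d_k)) V, on lists of row vectors."""
    d_k = len(K[0])
    outputs = []
    for q in Q:
        # One row of Q K^T / sqrt(d_k): this query's dot product with every key, scaled.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in K]
        weights = softmax(scores)
        # Output row: the attention-weighted sum of the value vectors.
        outputs.append([sum(w * v[j] for w, v in zip(weights, V)) for j in range(len(V[0]))])
    return outputs


def self_attention(X):
    """Self-attention sketch: queries, keys, and values all come from the same sequence X.

    Simplifying assumption: the learned projection matrices are the identity, so Q = K = V = X.
    """
    return attention(X, X, X)


# Three toy 2-dimensional token vectors (made-up numbers, not real embeddings).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
for row in self_attention(tokens):
    print([round(value, 3) for value in row])
```

A real transformer layer would first multiply X by separate learned matrices to produce Q, K, and V. It would also typically run several attention heads in parallel and combine their outputs.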

Common Mistakes to Avoid

- Assuming LLMs completely replace traditional NLP, which is wrong because simpler models can still be better for narrow tasks with limited data, strict latency, or low cost requirements.

- Thinking traditional NLP does not use learning, which is wrong because many classic systems include statistical models such as logistic regression, hidden Markov models, and support vector machines.

- Treating fluent output from an LLM as proof of correctness, which is wrong because language models can generate confident but false statements and must be checked against evidence.

- Ignoring computational cost when comparing models, which is wrong because accuracy alone does not capture memory use, training time, inference speed, and deployment constraints.

Practice Questions

1. A traditional spam classifier uses y = sign(w·x + b) with w = [0.8, -0.3, 0.5], x = [2, 1, 3], and b = -1. Compute w·x + b and determine the sign of the predicted class.

2. A self-attention layer has d_k = 16, and for one query-key pair the dot product (one entry of QK^T) is 8. Compute the scaled score QK^T / sqrt(d_k) before the softmax step.

3. A company needs a language system for a fixed task with little labeled data, strict response-time limits, and a requirement for easy debugging. Explain whether a traditional NLP model or an LLM is more appropriate and why.
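The arithmetic in questions 1 and 2 can be checked with a few lines of Python. Try both problems by hand before running it.

```python
import math

# Question 1: linear classifier y = sign(w·x + b)
w = [0.8, -0.3, 0.5]
x = [2, 1, 3]
b = -1
score = sum(wi * xi for wi, xi in zip(w, x)) + b
label = 1 if score > 0 else -1  # the score is nonzero here, so the sign is unambiguous
print(f"w·x + b = {score:.1f}, predicted class = {label:+d}")  # prints w·x + b = 1.8, predicted class = +1

# Question 2: scaled attention score before the softmax step
d_k = 16
raw_score = 8
scaled = raw_score / math.sqrt(d_k)
print(f"QK^T / sqrt(d_k) = {scaled}")  # prints QK^T / sqrt(d_k) = 2.0
```

The hand calculation for question 1 is 0.8·2 + (-0.3)·1 + 0.5·3 = 1.6 - 0.3 + 1.5 = 2.8. Adding b gives 1.8 > 0, so the predicted class is +1. For question 2, the calculation is 8 / sqrt(16) = 8 / 4 = 2.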