Memory in AI Systems

Short-Term vs Long-Term, In-Context vs External

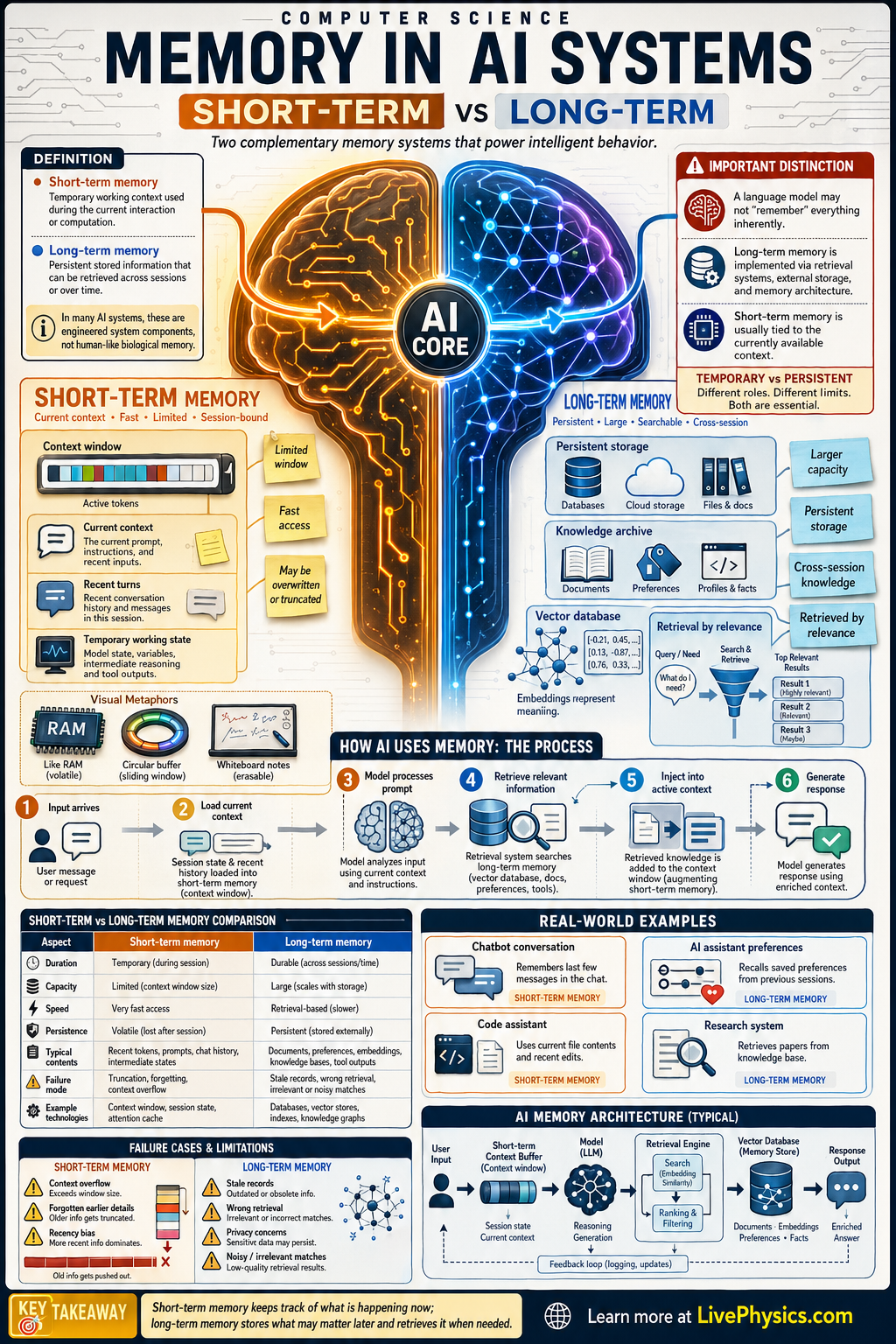

Memory is a core idea in AI systems because an intelligent system must use information from the present while also benefiting from information stored from the past. Short-term memory helps an AI keep track of the current conversation, recent tokens, or immediate task state. Long-term memory lets the system retain facts, user preferences, and learned patterns across longer periods. Understanding the difference helps explain why some AI tools are context-aware only within a single interaction while others can also carry information forward and improve over time.

Short-term memory is usually limited, fast, and tied to the current processing window, such as a model's context window or temporary hidden state. Long-term memory is more persistent and may be stored in databases, vector stores, model weights, or external knowledge systems. In practice, many AI systems combine both types so they can reason about the current input while retrieving older relevant information. This balance affects accuracy, personalization, efficiency, and the risk of forgetting or using outdated data.
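The combination described above can be sketched in a few lines of Python. This is a toy illustration, not how any particular AI product works: the class name `TwoTierMemory`, its methods, and the window size of 3 are all assumptions made for the example. A bounded buffer stands in for the context window, and a plain dictionary stands in for a database or user profile.

```python
from collections import deque


class TwoTierMemory:
    """Toy memory: a bounded recent buffer plus a persistent key-value store."""

    def __init__(self, window_size: int) -> None:
        # Short-term: only the most recent `window_size` items survive.
        self.short_term = deque(maxlen=window_size)
        # Long-term: stands in for a database, vector store, or user profile.
        self.long_term: dict[str, str] = {}

    def observe(self, message: str) -> None:
        # New input enters the window; the oldest item is dropped when full.
        self.short_term.append(message)

    def remember(self, key: str, value: str) -> None:
        # Explicitly store something that should outlive the session.
        self.long_term[key] = value

    def end_session(self) -> None:
        # Short-term context is discarded; long-term storage is kept.
        self.short_term.clear()


memory = TwoTierMemory(window_size=3)
for msg in ["hello", "I prefer metric units", "convert 5 miles", "and 10 miles"]:
    memory.observe(msg)
memory.remember("units", "metric")

recent = list(memory.short_term)  # "hello" has been pushed out of the window
memory.end_session()
print(recent)
print(memory.long_term)
```

After `end_session()`, the recent messages are gone but the stored preference is still available, which is the behavior the two memory types are meant to provide together.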

Key Facts

- Short-term memory stores recent information needed for the current task, often within a limited context window.

- Long-term memory stores information across sessions, such as learned parameters, saved documents, or user profiles.

- The cost of standard self-attention in a transformer grows roughly as O(n^2) with sequence length n, which is one reason short-term context size is limited.

- A simple memory update can be written as M_t = f(M_(t-1), x_t), where M_(t-1) is the previous memory, x_t is the new input, f is the update rule, and M_t is the updated memory.

- Retrieval systems often rank stored items by similarity to a query, for example with cosine similarity: sim(q, d) = (q · d) / (|q||d|), where q is the query vector and d is a stored item's vector.

- Good AI design often uses short-term memory for active reasoning and long-term memory for retrieval, adaptation, and persistence.
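Two of the formulas above can be made concrete with a short Python sketch. The function names, the example vectors, and the choice of an exponential moving average for f are assumptions for illustration only. Real systems typically use learned embeddings with many dimensions and more elaborate update rules.

```python
import math


def cosine_similarity(q, d):
    """sim(q, d) = (q . d) / (|q||d|), returning 0.0 for a zero vector."""
    dot = sum(a * b for a, b in zip(q, d))
    norm_q = math.sqrt(sum(a * a for a in q))
    norm_d = math.sqrt(sum(b * b for b in d))
    if norm_q == 0 or norm_d == 0:
        return 0.0
    return dot / (norm_q * norm_d)


def top_k(query, documents, k):
    """Rank stored vectors by similarity to the query and keep the best k."""
    scored = [(name, cosine_similarity(query, vec)) for name, vec in documents.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


def update_memory(previous, new_input, rate=0.5):
    """One possible f in M_t = f(M_(t-1), x_t): an exponential moving average."""
    return [(1 - rate) * m + rate * x for m, x in zip(previous, new_input)]


# Hypothetical 2-dimensional "embeddings" for three stored items.
docs = {
    "units_pref": [1.0, 0.0],
    "weather": [0.0, 1.0],
    "mixed": [1.0, 1.0],
}
results = top_k([2.0, 0.0], docs, k=2)
print(results)  # "units_pref" ranks first, then "mixed"

state = [0.0, 0.0]
for x in ([1.0, 2.0], [1.0, 2.0]):
    state = update_memory(state, x)
print(state)  # moves toward the repeated input with each step
```

Cosine similarity ignores vector length, so the query `[2.0, 0.0]` matches `[1.0, 0.0]` perfectly even though the magnitudes differ.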

Vocabulary

- Short-term memory: Temporary information storage that an AI system uses during the current task or interaction.

- Long-term memory: Persistent information storage that remains available beyond the current session or computation.

- Context window: The amount of recent input a model can directly process at one time.

- Embedding: A numerical vector representation of data that helps an AI compare meaning or similarity.

- Retrieval: The process of finding and bringing back relevant stored information for current use.

Common Mistakes to Avoid

- Assuming short-term memory and long-term memory are the same thing, which is wrong because one is temporary active context and the other is persistent storage across time.

- Believing a larger context window means true long-term memory, which is wrong because more immediate context still disappears when the session ends unless it is stored elsewhere.

- Thinking model weights are the only form of long-term memory, which is wrong because AI systems can also use databases, logs, vector stores, and external documents.

- Ignoring memory retrieval quality, which is wrong because storing information is not enough if the system cannot find the right item at the right time.
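The second and third mistakes above both come down to where information lives. As a minimal sketch, assuming a JSON file is an acceptable stand-in for external storage, the following Python example shows session data surviving only because it was written somewhere outside the running session. The helper names `save_profile` and `load_profile` and the file name `profile.json` are hypothetical.

```python
import json
import tempfile
from pathlib import Path


def save_profile(path: Path, profile: dict) -> None:
    # Persist the profile outside the running program.
    path.write_text(json.dumps(profile), encoding="utf-8")


def load_profile(path: Path) -> dict:
    # A missing file means nothing was ever stored.
    if not path.exists():
        return {}
    return json.loads(path.read_text(encoding="utf-8"))


with tempfile.TemporaryDirectory() as tmp:
    profile_path = Path(tmp) / "profile.json"  # hypothetical file name

    # Session 1: the preference exists only in memory until it is saved.
    session_context = {"preferred_units": "metric"}
    save_profile(profile_path, session_context)
    del session_context  # the session ends and its in-memory context is gone

    # Session 2: a fresh start recovers the preference from external storage.
    restored = load_profile(profile_path)
    never_saved = load_profile(Path(tmp) / "other.json")

print(restored)
print(never_saved)
```

No model weights change here, yet the system still retains information across sessions, which is why external storage counts as a form of long-term memory.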

Practice Questions

1. An AI chatbot can read 8,000 tokens at once. A conversation has 10,500 tokens. How many tokens are outside the current context window if the model only keeps the most recent 8,000 tokens?

2. A retrieval system compares a query to 120 stored documents and returns the top 5 most similar. What percentage of the stored documents are returned?

3. A tutoring AI remembers the current problem steps during one session but forgets the student's preferred learning style the next day. Explain which part of memory worked, which part failed, and what system change could fix it.