How GPT Models Generate Text

Autoregressive Decoding, Temperature, Top-p, and Sampling

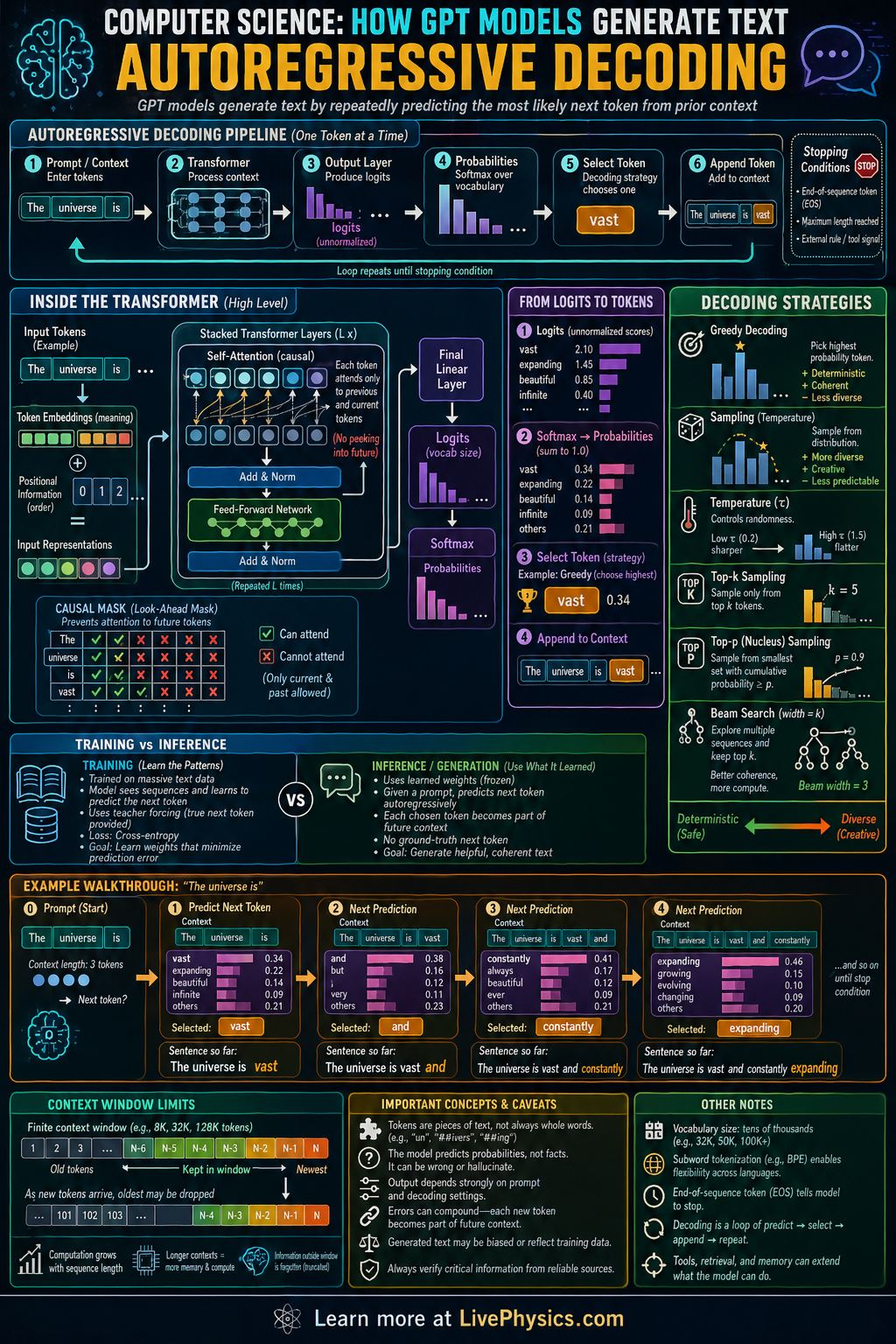

Autoregressive decoding is the step-by-step process GPT-style language models use to generate text. Instead of writing a whole sentence at once, the model predicts one token, adds it to the context, and then predicts the next token. This matters because each new choice depends on everything that came before it, which lets the model produce coherent paragraphs, code, and dialogue. The idea is simple, but repeated next-token prediction can build long and complex outputs.

Inside the model, the current token sequence is converted into vectors and processed by transformer layers that use self-attention to combine information from earlier positions. The model outputs a logit for every token in the vocabulary, and the softmax function turns these scores into probabilities. A decoding rule such as greedy selection, plain sampling, top-k sampling, or nucleus (top-p) sampling then selects the next token from that distribution. The chosen token is appended to the sequence, and the loop repeats until a stop token is produced or a length limit is reached.
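The loop described above can be sketched in a few lines of Python. This is only an illustration: `toy_logits`, `VOCAB`, and `generate` are hypothetical names, and `toy_logits` is a hand-written stand-in for a real transformer, not an actual model.

```python
import math
import random

# Hypothetical four-token vocabulary; "<eos>" plays the role of the stop token.
VOCAB = ["the", "cat", "sat", "<eos>"]

def toy_logits(context):
    """Stand-in for a transformer: returns one logit per vocabulary entry.

    It simply favors the token that follows the last context token in VOCAB order.
    """
    last = VOCAB.index(context[-1]) if context else -1
    favored = (last + 1) % len(VOCAB)
    return [3.0 if i == favored else 0.0 for i in range(len(VOCAB))]

def softmax(logits):
    """Convert logits to probabilities (subtracting the max for numerical stability)."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def generate(prompt, max_new_tokens=10, greedy=True, rng=None):
    """context -> model -> probabilities -> token choice -> append token -> repeat."""
    rng = rng or random.Random(0)
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        probs = softmax(toy_logits(tokens))
        if greedy:
            idx = max(range(len(probs)), key=lambda i: probs[i])
        else:
            idx = rng.choices(range(len(probs)), weights=probs, k=1)[0]
        tokens.append(VOCAB[idx])
        if VOCAB[idx] == "<eos>":  # stop token reached
            break
    return tokens
```

Calling `generate(["the"])` runs the loop greedily until the toy model emits `<eos>`, while `generate(["the"], greedy=False)` draws each token at random from the predicted distribution, so its output depends on the random seed.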

Key Facts

- Autoregressive factorization writes sequence probability as P(x_1, x_2, ..., x_n) = product from t = 1 to n of P(x_t | x_1, ..., x_(t-1)).

- At each step, the model computes logits z_i for each token in the vocabulary.

- Softmax converts logits to probabilities: P(token i) = e^(z_i) / sum over j of e^(z_j).

- Greedy decoding picks the highest-probability token: x_next = argmax_i P(token i).

- Temperature T > 0 rescales logits before softmax: P(token i) = e^(z_i/T) / sum over j of e^(z_j/T).

- The generation loop is context -> model -> probabilities -> token choice -> append token -> repeat.
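The formulas above can be written out as a minimal Python sketch. The function names and the example logits are assumptions chosen for illustration; the code uses only the standard library.

```python
import math

def softmax(logits, temperature=1.0):
    """P(token i) = e^(z_i/T) / sum over j of e^(z_j/T)."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtracting the max does not change the result, only avoids overflow
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def greedy_choice(probs):
    """x_next = argmax_i P(token i); returns the index of the most probable token."""
    return max(range(len(probs)), key=lambda i: probs[i])

def sequence_log_prob(step_probs):
    """Log of the autoregressive product: sum over t of log P(x_t | x_1, ..., x_(t-1)).

    step_probs holds the probability the model gave to each token actually chosen.
    """
    return sum(math.log(p) for p in step_probs)

# Hypothetical logits for a three-token vocabulary.
logits = [1.0, 0.5, -1.0]
sharp = softmax(logits, temperature=0.5)  # T < 1 concentrates mass on the top token
plain = softmax(logits, temperature=1.0)
flat = softmax(logits, temperature=2.0)   # T > 1 spreads mass more evenly
```

Summing log probabilities instead of multiplying raw probabilities is a common design choice, since a product of many small numbers underflows quickly for long sequences.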

Vocabulary

- Token: A basic unit of text used by the model, such as a word, subword, punctuation mark, or symbol.

- Logit: An unnormalized score the model assigns to each possible next token before probabilities are computed.

- Softmax: A function that turns a list of logits into probabilities that add up to 1.

- Self-attention: The mechanism that lets each position in the sequence use information from earlier tokens when forming its representation.

- Decoding: The procedure for choosing the next token from the model's predicted probability distribution.

Common Mistakes to Avoid

- Assuming the model generates an entire sentence in one pass, which is wrong because autoregressive decoding predicts one token at a time and updates the context after each choice.

- Treating logits as probabilities, which is wrong because logits can be any real numbers and must be passed through softmax to become a valid probability distribution.

- Thinking greedy decoding and sampling are the same, which is wrong because greedy decoding always takes the highest-probability token while sampling can choose lower-probability tokens to increase variety.

- Ignoring the effect of temperature, which is wrong because changing T reshapes the probability distribution: T below 1 sharpens it and makes output more deterministic, while T above 1 flattens it and makes output more random.
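The contrast between greedy decoding and sampling, along with the top-k and nucleus (top-p) rules that restrict which tokens sampling may pick, can be sketched as follows. The function names and the example distribution are illustrative assumptions, not taken from any particular library.

```python
import random

def top_k_filter(probs, k):
    """Keep the k most probable tokens, zero out the rest, and renormalize."""
    keep = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    total = sum(probs[i] for i in keep)
    return [probs[i] / total if i in keep else 0.0 for i in range(len(probs))]

def top_p_filter(probs, p):
    """Keep the smallest set of top tokens whose cumulative probability reaches p."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    keep, cumulative = [], 0.0
    for i in order:
        keep.append(i)
        cumulative += probs[i]
        if cumulative >= p:
            break
    total = sum(probs[i] for i in keep)
    return [probs[i] / total if i in keep else 0.0 for i in range(len(probs))]

def sample(probs, rng):
    """Draw one token index at random, weighted by its probability."""
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]

# Hypothetical next-token distribution over four tokens.
probs = [0.6, 0.25, 0.1, 0.05]
rng = random.Random(0)

greedy = max(range(len(probs)), key=lambda i: probs[i])  # always the same index
draws = [sample(probs, rng) for _ in range(1000)]        # varies from draw to draw
```

Greedy decoding returns the same index every time for a given distribution, whereas repeated calls to `sample` return a mix of indices. Passing `top_k_filter(probs, 2)` or `top_p_filter(probs, 0.8)` to `sample` limits that mix to the most probable tokens.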

Practice Questions

- 1. A model assigns logits [2.0, 1.0, 0.0] to three possible next tokens A, B, and C. Using softmax, compute the probability of each token to three decimal places.

- 2. A model predicts next-token probabilities: cat 0.50, dog 0.30, runs 0.20. Under greedy decoding, which token is chosen? If top-k sampling uses k = 2, which tokens remain eligible for selection?

- 3. Explain why the text generated after 20 decoding steps can change if the model chooses a different token at step 3, even though the model weights stay the same throughout.