How Machine Learning Works

Training vs Inference, Loss Functions, and Generalization

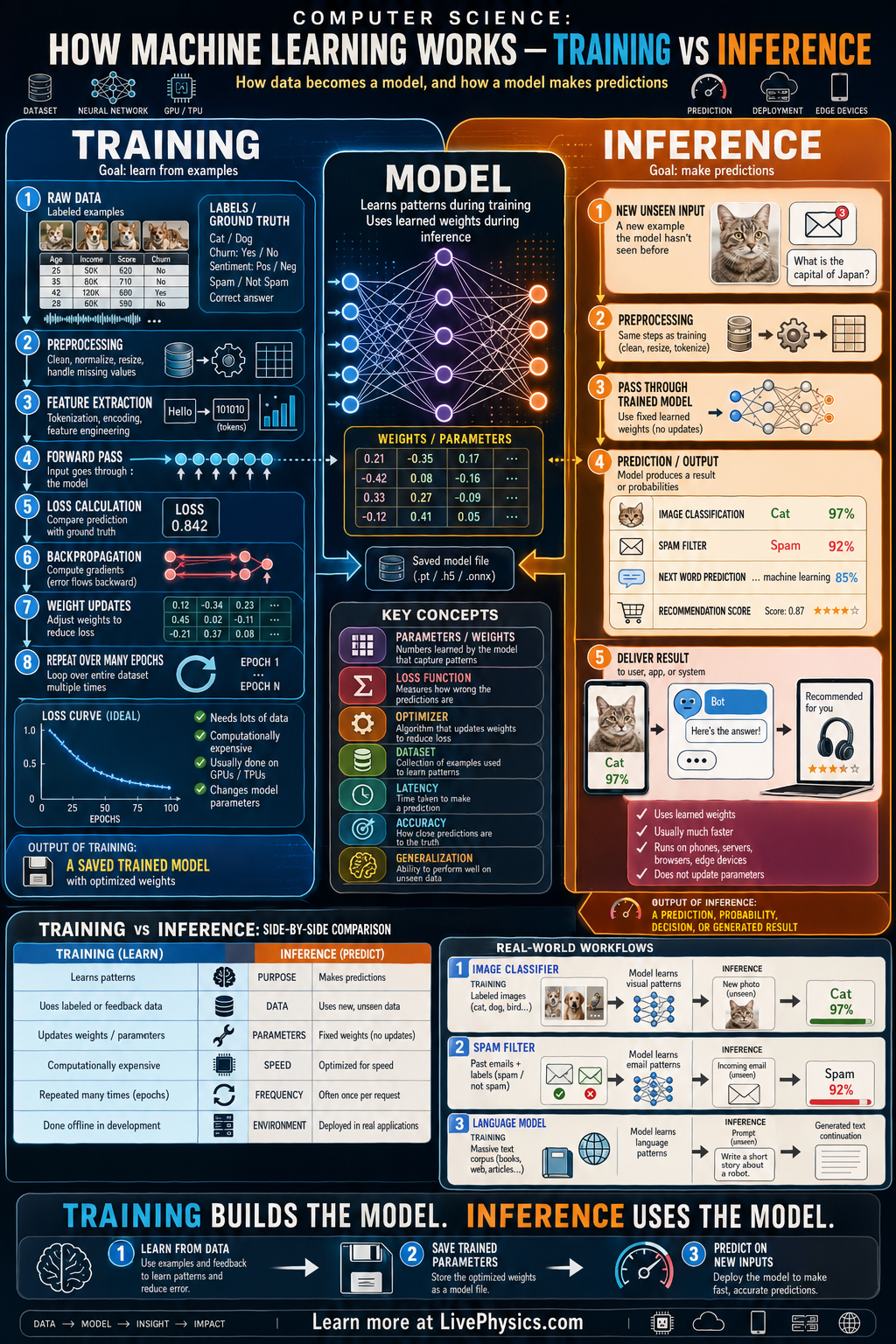

Machine learning systems usually operate in two distinct phases: training and inference. During training, a model learns patterns from data by adjusting internal parameters to reduce error. During inference, that trained model is used to make predictions on new inputs quickly and consistently. Understanding the difference matters because the computing cost, data needs, and goals of each phase are very different.

In training, examples with known answers are fed into the model, a loss is computed, and optimization updates the weights. This process may require many passes through large datasets and often uses powerful hardware such as GPUs. In inference, the weights are fixed, and the model performs a forward pass to produce an output such as a label, number, or generated text. Real applications depend on balancing training quality with inference speed, memory use, and reliability.
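The two phases can be sketched in a few lines of plain Python. This is a minimal illustration, not a production recipe: the one-feature linear model, the toy data (generated from y = 2x + 1), the learning rate, and the epoch count are all assumptions chosen to keep the example small.

```python
# Minimal sketch: train a one-feature linear model, then run inference.
# The data, learning rate, and epoch count are illustrative assumptions.

def predict(x, w, b):
    """Forward pass: the only computation inference needs."""
    return w * x + b

def train(xs, ys, lr=0.05, epochs=1000):
    """Full-batch gradient descent on mean squared error; returns (w, b)."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):              # one epoch = one full pass over the data
        grad_w = 0.0
        grad_b = 0.0
        for x, y in zip(xs, ys):
            error = predict(x, w, b) - y
            grad_w += 2 * error * x / n  # dL/dw for MSE
            grad_b += 2 * error / n      # dL/db for MSE
        w -= lr * grad_w                 # weights change only during training
        b -= lr * grad_b
    return w, b

# Training phase: examples with known answers (here y = 2x + 1).
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]
w, b = train(xs, ys)

# Inference phase: the weights are fixed; a new input gets a forward pass only.
print(round(w, 3), round(b, 3), round(predict(10.0, w, b), 3))
```

Note that `train` loops over the whole dataset many times, while `predict` is a single multiplication and addition. That asymmetry is the cost difference between the two phases in miniature.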

Key Facts

- Training updates model parameters to minimize loss; inference uses fixed parameters to compute outputs.

- A basic update rule is w_new = w_old - eta * (dL/dw), where eta is the learning rate and dL/dw is the gradient of the loss L with respect to the weight w.

- Loss measures prediction error. One example is mean squared error, MSE = (1/n) * sum((y_pred - y_true)^2), averaged over n examples.

- One epoch = one full pass through the training dataset.

- Inference usually requires only a forward pass: output = f(x; w), with no weight updates.

- Training is typically slower and more compute-intensive than inference because each step runs a forward pass plus backpropagation to compute gradients for every trainable parameter, and this repeats over many steps.
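The MSE formula and the update rule from the list above can be worked through numerically. The sketch below assumes a hypothetical one-weight model y_pred = w * x and made-up data; the function names `mse` and `gradient_step` are illustrative, not from any library.

```python
def mse(y_pred, y_true):
    """Mean squared error: (1/n) * sum((y_pred - y_true)^2)."""
    n = len(y_true)
    return sum((p - t) ** 2 for p, t in zip(y_pred, y_true)) / n

def gradient_step(w_old, grad, eta):
    """One update: w_new = w_old - eta * (dL/dw)."""
    return w_old - eta * grad

# Hypothetical one-weight model y_pred = w * x, with made-up data (true w is 2).
xs = [1.0, 2.0, 3.0]
ys = [2.0, 4.0, 6.0]
w = 1.0
eta = 0.1

loss_before = mse([w * x for x in xs], ys)

# For this model, dL/dw of MSE is (2/n) * sum((w*x - y) * x).
grad = 2 * sum((w * x - y) * x for x, y in zip(xs, ys)) / len(xs)

w = gradient_step(w, grad, eta)
loss_after = mse([w * x for x in xs], ys)

print(loss_before, grad, w, loss_after)
```

The gradient is negative here, so subtracting eta times the gradient moves w upward toward 2, and the loss after the step is smaller than the loss before it.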

Vocabulary

- Model: A mathematical system that maps inputs to outputs using learned parameters.

- Parameter: A value inside the model, such as a weight, that is adjusted during training.

- Loss function: A formula that measures how far the model's prediction is from the correct answer.

- Backpropagation: An algorithm that computes how each parameter affects the loss so the model can be updated.

- Inference: The stage where a trained model takes a new input and produces a prediction without changing its parameters.
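To make the backpropagation entry concrete, here is a small sketch that applies the chain rule by hand to a two-weight model and compares the result with a numerical estimate. The model (h = w1 * x, y_pred = w2 * h), the squared-error loss, and all input values are assumptions picked for illustration.

```python
def forward(x, w1, w2):
    """Forward pass through a tiny two-weight model."""
    h = w1 * x           # intermediate (hidden) value
    y_pred = w2 * h      # model output
    return h, y_pred

def loss_fn(x, t, w1, w2):
    """Squared error between the prediction and the target t."""
    _, y_pred = forward(x, w1, w2)
    return (y_pred - t) ** 2

def backward(x, t, w1, w2):
    """Backpropagation: apply the chain rule from the loss back to each weight."""
    h, y_pred = forward(x, w1, w2)
    dL_dy = 2 * (y_pred - t)   # derivative of the loss w.r.t. the output
    dL_dw2 = dL_dy * h         # y_pred = w2 * h, so dy/dw2 = h
    dL_dh = dL_dy * w2         # y_pred = w2 * h, so dy/dh = w2
    dL_dw1 = dL_dh * x         # h = w1 * x, so dh/dw1 = x
    return dL_dw1, dL_dw2

x, t, w1, w2 = 1.5, 2.0, 0.5, -1.0
g1, g2 = backward(x, t, w1, w2)

# Sanity check with central finite differences.
eps = 1e-6
num_g1 = (loss_fn(x, t, w1 + eps, w2) - loss_fn(x, t, w1 - eps, w2)) / (2 * eps)
num_g2 = (loss_fn(x, t, w1, w2 + eps) - loss_fn(x, t, w1, w2 - eps)) / (2 * eps)

print(g1, num_g1)
print(g2, num_g2)
```

The analytic and numerical gradients should agree closely. Real frameworks automate the `backward` step, but the idea is the same: reuse values from the forward pass to compute how each parameter affects the loss.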

Common Mistakes to Avoid

- Thinking training and inference are the same process, which is wrong because training changes weights while inference uses already learned weights.

- Assuming a model keeps learning during normal prediction, which is wrong because standard inference does not update parameters unless a separate training step is run.

- Ignoring the cost of backpropagation, which is wrong because training needs extra memory to store activations and gradients and extra computation to run the backward pass.

- Believing high training accuracy guarantees real-world performance, which is wrong because a model can overfit and perform poorly on new unseen data.
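The last mistake, overfitting, can be shown with a deliberately extreme toy case. In this sketch the task (labeling integers as even or odd), both datasets, and both "models" are made up for illustration: one model memorizes the training answers, the other captures the underlying rule.

```python
def accuracy(model, examples):
    """Fraction of examples where the model's output matches the label."""
    correct = sum(1 for x, label in examples if model(x) == label)
    return correct / len(examples)

# Made-up task: label an integer as "even" or "odd".
train_set = [(2, "even"), (3, "odd"), (8, "even"), (11, "odd")]
test_set = [(4, "even"), (5, "odd"), (7, "odd"), (10, "even")]

# Memorizing "model": a lookup table of training answers,
# with a fixed guess for anything it has not seen.
table = dict(train_set)

def memorizer(x):
    return table.get(x, "even")

# Generalizing "model": captures the actual rule.
def parity_rule(x):
    return "even" if x % 2 == 0 else "odd"

print("memorizer  ", accuracy(memorizer, train_set), accuracy(memorizer, test_set))
print("parity_rule", accuracy(parity_rule, train_set), accuracy(parity_rule, test_set))
```

Both models are perfect on the training set, so training accuracy alone cannot tell them apart. Only the held-out test set reveals that the memorizer has not learned anything that transfers to new inputs.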

Practice Questions

1. A model has 500,000 parameters. During one training step, each parameter is updated once using gradient descent. If one epoch contains 200 steps, how many parameter updates occur in one epoch in total?

2. A regression model predicts y_pred = 7 for a true value y_true = 10. Using squared error for one example, L = (y_pred - y_true)^2, what is the loss?

3. A phone app must classify images in real time with low battery use. Explain why inference efficiency matters more than training speed on the phone, and where the training would usually happen instead.
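After working the first two questions by hand, one way to check the arithmetic is to run a short script. The variable names below are illustrative; the numbers come directly from the questions.

```python
# Question 1: total parameter updates in one epoch.
num_parameters = 500_000
steps_per_epoch = 200
updates_per_epoch = num_parameters * steps_per_epoch

# Question 2: squared error for a single example.
y_pred, y_true = 7, 10
squared_error = (y_pred - y_true) ** 2

print("updates per epoch:", updates_per_epoch)
print("squared error:", squared_error)
```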