Transformer Architecture Explained

Encoder, Decoder, Attention, and Feed-Forward Layers

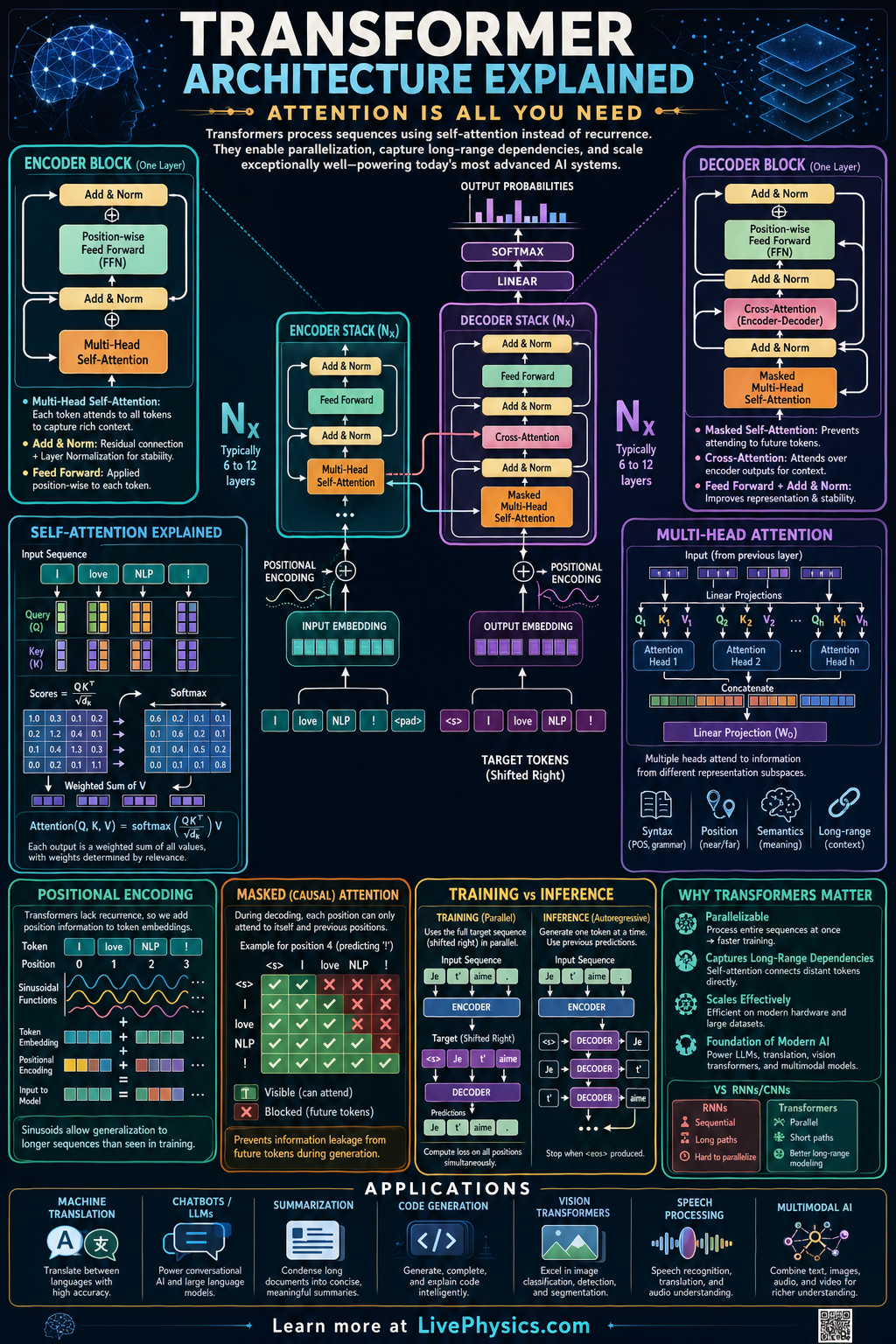

Transformers are a neural network architecture designed to process sequences such as text, code, and even image patches. They rose to prominence because they capture long-range relationships more effectively than many earlier sequence models, such as recurrent neural networks. The 2017 paper "Attention Is All You Need" showed that a model built on attention mechanisms, without recurrence, could drive powerful language understanding and generation. Today, transformers power chatbots, translation systems, search tools, and many other modern AI applications.

A transformer converts input tokens into vectors, adds positional information, and repeatedly refines those vectors through attention and feed-forward layers. Self-attention lets each token weigh the importance of every other token in the sequence, which helps the model capture context. In encoder-decoder variants, the encoder builds contextual representations and the decoder uses them to generate outputs one token at a time. Training adjusts millions or billions of parameters so that the model assigns high probability to the correct next tokens or target outputs.
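The first two steps described above (token lookup, then adding positional information) can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions: the vocabulary size, the token ids, and the random embedding table are made up, and the sinusoidal scheme is one common choice of positional encoding (learned position vectors are another). The helper assumes an even `d_model`.

```python
import numpy as np

def sinusoidal_positions(seq_len, d_model):
    """Fixed sinusoidal positional encodings, shape (seq_len, d_model).

    Assumes d_model is even. This is one common scheme, not the only one.
    """
    positions = np.arange(seq_len)[:, None]        # (seq_len, 1)
    dims = np.arange(0, d_model, 2)[None, :]       # even dimension indices
    angles = positions / np.power(10000.0, dims / d_model)
    p = np.zeros((seq_len, d_model))
    p[:, 0::2] = np.sin(angles)                    # even dimensions get sine
    p[:, 1::2] = np.cos(angles)                    # odd dimensions get cosine
    return p

rng = np.random.default_rng(0)
vocab_size, d_model = 100, 16                      # toy sizes for illustration
# In a real model this table is learned; here it is random.
embedding_table = rng.normal(size=(vocab_size, d_model))

token_ids = np.array([5, 42, 7, 42])               # hypothetical ids; 42 repeats
x = embedding_table[token_ids]                     # token embeddings x_i
z = x + sinusoidal_positions(len(token_ids), d_model)  # z_i = x_i + p_i

print(z.shape)
```

Token 42 appears at positions 1 and 3. Its two rows in `x` are identical, but the corresponding rows in `z` differ, which is what lets later layers tell the two occurrences apart.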

Key Facts

- Token embeddings map discrete tokens to vectors: x_i ∈ R^(d_model)

- Positional encoding adds order information: z_i = x_i + p_i

- Attention scores are computed by dot products: score(i,j) = q_i · k_j

- Scaled dot-product attention: Attention(Q,K,V) = softmax(QK^T / sqrt(d_k))V

- Multi-head attention runs several attention operations in parallel, then concatenates the results and applies an output projection: head_h = Attention(Q_h,K_h,V_h), MultiHead = Concat(head_1, ..., head_H) W_O

- Output probabilities are produced with a linear layer and softmax: P(token) = softmax(Wy + b), where y is the final hidden vector at that position
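The formulas above can be tied together in a short NumPy sketch. It is a minimal illustration, not a reference implementation: the weight matrices are random stand-ins for learned parameters, the sizes are toy values, and the output projection `W_o` applied after concatenation follows the usual multi-head formulation rather than anything stated in the list. Because each token is stored as a row vector, the output layer is written `y @ W + b` instead of `Wy + b`.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)   # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def attention(Q, K, V):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = Q.shape[-1]
    scores = Q @ np.swapaxes(K, -1, -2) / np.sqrt(d_k)   # score(i, j) = q_i . k_j, scaled
    weights = softmax(scores, axis=-1)                   # each row sums to 1
    return weights @ V, weights

def multi_head_attention(z, W_q, W_k, W_v, W_o, num_heads):
    """Run num_heads attention operations in parallel, then concatenate."""
    seq_len, d_model = z.shape
    d_head = d_model // num_heads

    def split(m):
        # (seq_len, d_model) -> (num_heads, seq_len, d_head)
        return m.reshape(seq_len, num_heads, d_head).transpose(1, 0, 2)

    Q, K, V = split(z @ W_q), split(z @ W_k), split(z @ W_v)
    heads, _ = attention(Q, K, V)                         # (num_heads, seq_len, d_head)
    concat = heads.transpose(1, 0, 2).reshape(seq_len, d_model)
    return concat @ W_o                                   # assumed output projection

rng = np.random.default_rng(0)
seq_len, d_model, num_heads, vocab_size = 4, 8, 2, 10     # toy sizes
z = rng.normal(size=(seq_len, d_model))                   # stand-in for embeddings + positions
W_q, W_k, W_v, W_o = (rng.normal(size=(d_model, d_model)) for _ in range(4))

y = multi_head_attention(z, W_q, W_k, W_v, W_o, num_heads)

# Output layer: linear map to vocabulary logits, then softmax.
W = rng.normal(size=(d_model, vocab_size))
b = np.zeros(vocab_size)
probs = softmax(y @ W + b)

print(y.shape, probs.shape)
```

A full transformer layer would also include residual connections, layer normalization, and a feed-forward sublayer, which are left out here to keep the attention computation in focus.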

Vocabulary

- Token: A basic unit of input, such as a word, subword, or symbol, that the model processes.

- Embedding: A learned vector representation that places similar tokens closer together in a high-dimensional space.

- Self-attention: A mechanism that lets each token compare itself with the other tokens in the same sequence to gather context.

- Encoder: The part of a transformer that converts input tokens into contextualized internal representations.

- Decoder: The part of a transformer that generates the next token from its previous outputs and, in encoder-decoder models, from the encoder's representations.

Common Mistakes to Avoid

- Thinking attention is just selecting one important word, which is wrong because attention usually distributes weights across many tokens and combines their information.

- Ignoring positional encoding, which is wrong because attention alone does not inherently know token order in a sequence.

- Assuming the encoder and decoder are always both present, which is wrong because some transformers are encoder-only or decoder-only, depending on the task.

- Forgetting the scaling factor sqrt(d_k) in attention, which is wrong because dot products grow in magnitude with d_k, and large scores push the softmax toward a near one-hot distribution with very small gradients, which hurts training stability.
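Two of the points above, the role of sqrt(d_k) and the need for positional information, can be checked numerically. The sketch below is a simplified setup and not a full attention layer: the vectors are random, and `self_attention` is a hypothetical helper that uses the inputs directly as queries, keys, and values, with no learned projections.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)   # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

rng = np.random.default_rng(0)

# 1) Effect of the scaling factor.
d_k = 64
q = rng.normal(size=d_k)                 # one query vector
K = rng.normal(size=(10, d_k))           # ten key vectors
raw = K @ q                              # unscaled scores; spread grows with d_k
scaled = raw / np.sqrt(d_k)              # scaled scores; spread stays near 1
w_raw, w_scaled = softmax(raw), softmax(scaled)
print("largest weight, unscaled:", w_raw.max())
print("largest weight, scaled:  ", w_scaled.max())

# 2) Without positional information, self-attention ignores token order.
def self_attention(x):
    # Simplification: x serves as queries, keys, and values.
    scores = x @ x.T / np.sqrt(x.shape[-1])
    return softmax(scores, axis=-1) @ x

x = rng.normal(size=(5, 8))              # five token vectors, no positions added
perm = np.array([4, 2, 0, 3, 1])         # an arbitrary reordering of the tokens
out = self_attention(x)
out_perm = self_attention(x[perm])
print("reordered input gives reordered output:", np.allclose(out_perm, out[perm]))
```

Dividing the scores by a constant greater than 1 can only flatten the softmax, so the largest unscaled weight is never smaller than the largest scaled weight. In the second check, shuffling the tokens only shuffles the output rows, which shows that the layer by itself cannot distinguish one ordering from another.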

Practice Questions

1. A model uses d_k = 64. In scaled dot-product attention, by what number do you divide each entry of QK^T before applying softmax?

2. A sentence has 12 input tokens, and each token embedding has dimension d_model = 512. What is the shape of the embedding matrix for this single sentence before batching?

3. Explain why positional encoding is necessary in a transformer and what kind of information would be lost without it.