How Vector Databases Work

Embeddings, Approximate Nearest Neighbor Search, and Indexing

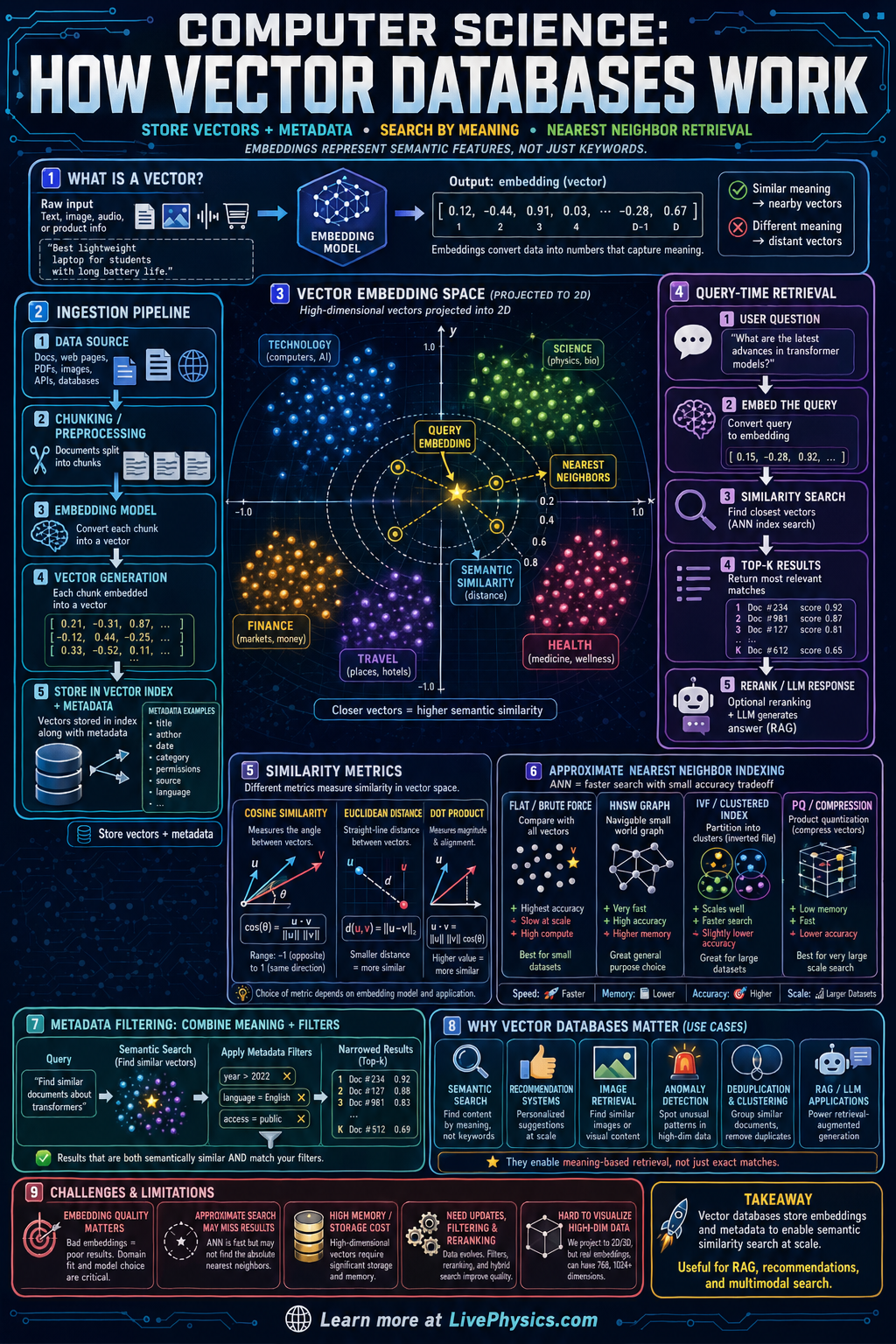

Vector databases are specialized systems that store data as numerical vectors called embeddings. These embeddings capture meaning, context, or similarity, so items with related ideas end up close together in vector space. This matters because many AI tasks need to find conceptually similar text, images, audio, or products rather than exact keyword matches. Vector databases make that kind of semantic search fast enough for real applications.

The basic workflow starts by converting each item into an embedding using a machine learning model. When a user submits a query, the system embeds the query with the same model, then compares it to stored vectors using a similarity measure such as cosine similarity or Euclidean distance. Because comparing the query against every stored vector becomes slow at large scale, vector databases build indexes that support approximate nearest neighbor (ANN) search, which finds close matches without scanning the whole collection. This supports applications such as retrieval-augmented generation, recommendation systems, image search, and anomaly detection.
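The workflow above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular vector database is implemented: `embed` is a hypothetical stand-in for a real embedding model, and the three-dimensional vectors in `TOY_EMBEDDINGS` are hand-picked assumptions chosen so that related phrases point in similar directions.

```python
import math

# Hypothetical stand-in for an embedding model. A real system would call a
# trained model here; these hand-picked 3-d vectors are illustrative only.
TOY_EMBEDDINGS = {
    "car repair": [0.8, 0.2, 0.1],
    "automobile maintenance": [0.9, 0.1, 0.0],
    "bicycle tune-up": [0.6, 0.5, 0.1],
    "chocolate cake recipe": [0.0, 0.1, 0.9],
}


def embed(text):
    """Look up the toy embedding for a piece of text."""
    return TOY_EMBEDDINGS[text]


def cosine_similarity(a, b):
    """cos(theta) = (A . B) / (||A|| ||B||)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def search(query, documents, k=2):
    """Embed the query, score every document, and return the top k."""
    query_vector = embed(query)
    scored = [(cosine_similarity(query_vector, embed(doc)), doc) for doc in documents]
    scored.sort(reverse=True)  # highest similarity first
    return [doc for _, doc in scored[:k]]


documents = ["automobile maintenance", "chocolate cake recipe", "bicycle tune-up"]
results = search("car repair", documents, k=2)
print(results)  # ['automobile maintenance', 'bicycle tune-up']
```

This sketch scores every stored vector, which is exact search. A vector database replaces that full scan with an index so the same query stays fast as the collection grows.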

Key Facts

- An embedding is a vector such as x = [x1, x2, ..., xn] that represents an item in n-dimensional space.

- Semantic similarity search returns items with nearby embeddings, not just items with matching words.

- Cosine similarity is cos(theta) = (A · B) / (||A|| ||B||). Values range from -1 to 1, and larger values mean more similar directions.

- Euclidean distance is d(A,B) = sqrt(sum_i (Ai - Bi)^2). Smaller distance means vectors are closer.

- Nearest neighbor search finds the k closest vectors to a query vector q, often written as top-k NN(q).

- Approximate nearest neighbor methods trade a small amount of accuracy for much faster search on large datasets.
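The two formulas and the top-k idea from the list above translate directly into code. The sketch below uses only the standard library; the function names and the sample vectors are illustrative assumptions, and `top_k` is an exact brute-force search rather than an approximate method.

```python
import math


def cosine_similarity(a, b):
    """cos(theta) = (A . B) / (||A|| ||B||); larger means more similar."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def euclidean_distance(a, b):
    """d(A, B) = sqrt(sum_i (Ai - Bi)^2); smaller means closer."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def top_k(query, vectors, k):
    """Exact k nearest neighbors of `query` by Euclidean distance."""
    ranked = sorted(vectors.items(), key=lambda item: euclidean_distance(query, item[1]))
    return [name for name, _ in ranked[:k]]


A = [3.0, 4.0]
B = [4.0, 3.0]
cos_ab = cosine_similarity(A, B)    # 24 / (5 * 5) = 0.96
dist_ab = euclidean_distance(A, B)  # sqrt(1 + 1) = sqrt(2)

# Illustrative stored vectors, keyed by a made-up identifier.
stored = {"v1": [0.0, 0.0], "v2": [3.0, 3.0], "v3": [5.0, 5.0]}
nearest = top_k([3.5, 3.0], stored, k=2)
print(nearest)  # ['v2', 'v3']
```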

Vocabulary

- Embedding: A list of numbers produced by a model that represents the meaning or features of an item.

- Vector space: A mathematical space where each item is represented as a point with numerical coordinates.

- Similarity metric: A rule such as cosine similarity or Euclidean distance used to measure how alike two vectors are.

- Nearest neighbor: A stored vector that is among the closest matches to a query vector according to a similarity metric.

- Approximate nearest neighbor: A fast search method that usually finds very close matches without checking every vector exactly.
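To make the difference between exact and approximate nearest neighbor search concrete, here is a small sketch of one common idea: group vectors into buckets around coarse centroids, then search only the bucket closest to the query. The centroids, vectors, and function names are assumptions made up for this example; a real index would typically learn its centroids from the data (for example with k-means) and usually probes more than one bucket.

```python
import math


def dist(a, b):
    """Euclidean distance between two vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


# Hypothetical coarse centroids, fixed by hand for this illustration.
CENTROIDS = [[0.0, 0.0], [10.0, 10.0]]


def nearest_centroid(v):
    """Index of the centroid closest to vector v."""
    return min(range(len(CENTROIDS)), key=lambda i: dist(v, CENTROIDS[i]))


def build_index(vectors):
    """Assign every stored vector to the bucket of its nearest centroid."""
    buckets = {i: [] for i in range(len(CENTROIDS))}
    for name, v in vectors.items():
        buckets[nearest_centroid(v)].append((name, v))
    return buckets


def exact_search(query, vectors):
    """Check every stored vector and return the true nearest neighbor."""
    return min(vectors, key=lambda name: dist(query, vectors[name]))


def approximate_search(query, buckets):
    """Check only the bucket nearest the query (assumes it is non-empty)."""
    candidates = buckets[nearest_centroid(query)]
    name, _ = min(candidates, key=lambda item: dist(query, item[1]))
    return name, len(candidates)


vectors = {
    "a": [1.0, 1.0],
    "b": [2.0, 0.0],
    "c": [9.0, 9.0],
    "d": [10.0, 11.0],
    "e": [5.5, 5.5],
}
index = build_index(vectors)

# Typical case: the approximate answer matches the exact one
# while comparing against 2 of the 5 stored vectors.
print(approximate_search([1.0, 0.5], index))  # ('a', 2)
print(exact_search([1.0, 0.5], vectors))      # a

# Boundary case: the true neighbor "e" sits in the other bucket, so it is missed.
print(approximate_search([4.9, 4.9], index))  # ('a', 2)
print(exact_search([4.9, 4.9], vectors))      # e
```

The second query shows the accuracy trade-off: a query near a bucket boundary can miss its true nearest neighbor. Searching additional nearby buckets reduces such misses at the cost of more comparisons.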

Common Mistakes to Avoid

- Treating vector search like keyword search, which is wrong because vector search compares the meaning and context captured in embeddings rather than exact word overlap.

- Using a similarity metric that does not match the embedding model, which is wrong because some embeddings are designed for cosine similarity while others work better with distance measures like Euclidean distance.

- Assuming 2D plots show the full embedding space, which is wrong because real embeddings often have hundreds or thousands of dimensions and the plot is only a projection.

- Believing exact search is always necessary, which is wrong because approximate nearest neighbor methods are usually much faster and still accurate enough for large AI systems.
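The metric mistake in the list above is easy to reproduce. In the sketch below, cosine similarity and Euclidean distance pick different nearest neighbors for the same query, because one stored vector points in the same direction as the query but is much longer. The vectors and names are illustrative assumptions. After scaling every vector to unit length, the two metrics give the same ranking, since squared Euclidean distance between unit vectors equals 2 - 2 cos(theta).

```python
import math


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def euclidean_distance(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def normalize(v):
    """Scale a vector to unit length."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


query = [1.0, 1.0]
stored = {
    "same_direction_far": [10.0, 10.0],     # same direction as the query, large magnitude
    "different_direction_near": [1.0, 0.0],  # different direction, but close by
}

# Cosine similarity ignores magnitude, so it prefers the same-direction vector.
best_by_cosine = max(stored, key=lambda n: cosine_similarity(query, stored[n]))

# Euclidean distance is sensitive to magnitude, so it prefers the nearby vector.
best_by_euclidean = min(stored, key=lambda n: euclidean_distance(query, stored[n]))

# With unit-length vectors, Euclidean distance ranks the same way as cosine.
unit_query = normalize(query)
unit_stored = {n: normalize(v) for n, v in stored.items()}
best_by_euclidean_unit = min(
    unit_stored, key=lambda n: euclidean_distance(unit_query, unit_stored[n])
)

print(best_by_cosine)          # same_direction_far
print(best_by_euclidean)       # different_direction_near
print(best_by_euclidean_unit)  # same_direction_far
```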

Practice Questions

1. A text embedding model stores three document vectors: D1 = [1, 0], D2 = [0, 1], and D3 = [1, 1]. A query vector is q = [2, 1]. Using Euclidean distance, which document is the nearest neighbor?

2. Two vectors are A = [1, 2, 2] and B = [2, 1, 2]. Compute their cosine similarity using cos(theta) = (A · B) / (||A|| ||B||).

3. A user searches for "car repair" and the database returns a document about "automobile maintenance" even though it does not contain the word "car." Explain why a vector database can return this result while a basic keyword search might miss it.