What Is a Large Language Model (LLM)?

Tokens, Parameters, Training Data, and Emergent Capabilities

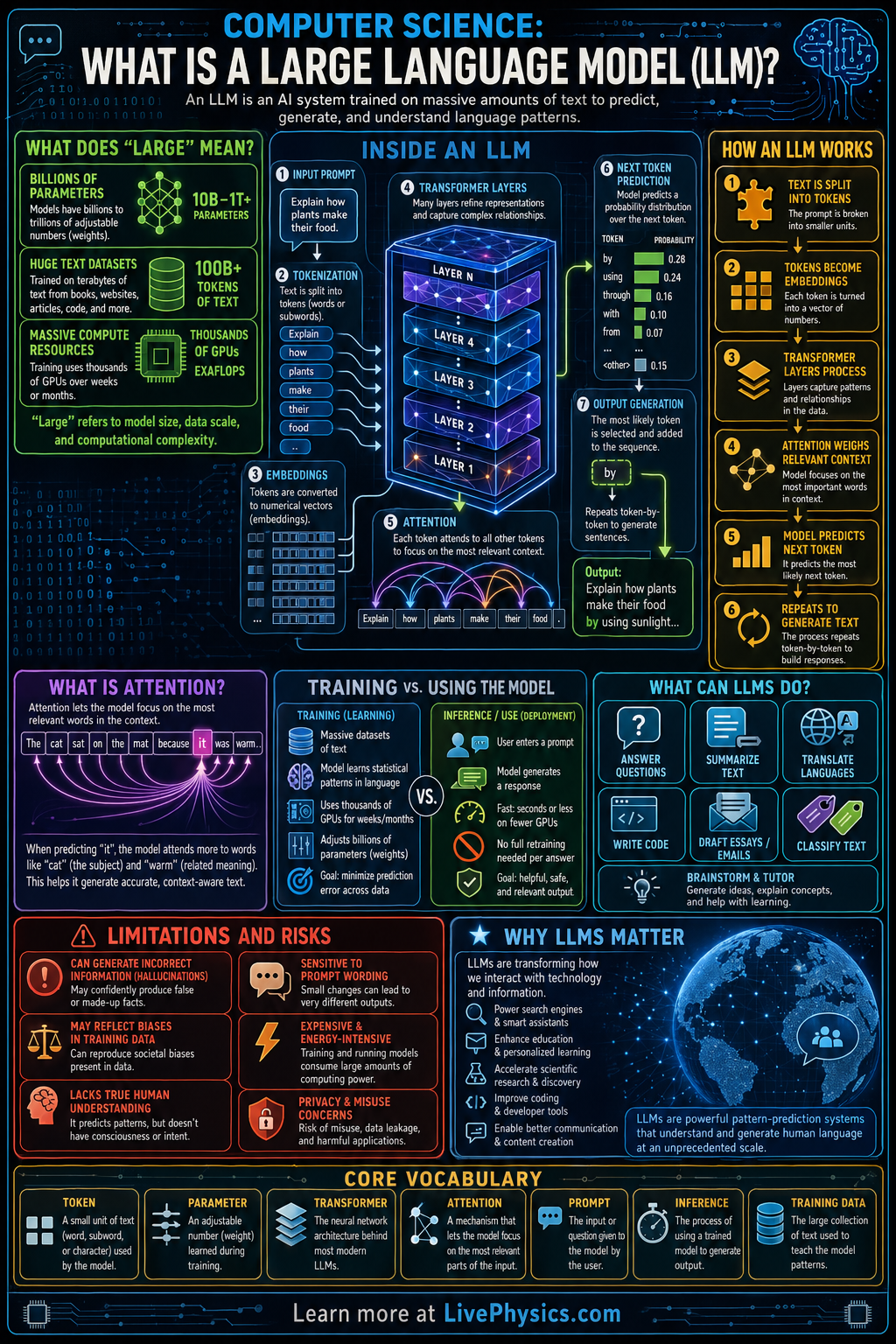

A large language model, or LLM, is a computer program trained to predict and generate human language. It learns patterns from massive collections of text, allowing it to answer questions, summarize information, translate languages, and write new content. LLMs matter because they turn language into something computers can process at scale, making many digital tools more useful and interactive. They are now used in chatbots, search tools, coding assistants, and educational software.

Most modern LLMs are built on the transformer architecture, which processes text as tokens and learns relationships between them across long passages. Each token is first converted into a numerical vector called an embedding; attention layers then let the model weigh which earlier tokens are most relevant to the current prediction. During training, the model adjusts its parameters, often billions of them, to reduce prediction error, typically on the task of guessing the next token in a sequence. The result is not understanding in the human sense, but a powerful statistical system that generates fluent, context-sensitive language.
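To make "reducing prediction error" concrete, here is a minimal sketch of next-token prediction for a single step. The four-word vocabulary and the raw scores (logits) are made up for illustration; a real model would compute the scores from its parameters. The loss shown is cross-entropy, a common choice for this objective.

```python
import math

def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def next_token_loss(logits, target_index):
    """Cross-entropy loss: negative log-probability of the correct next token."""
    probs = softmax(logits)
    return -math.log(probs[target_index])

# Hypothetical vocabulary and made-up scores for the context "the cat sat on the"
vocab = ["mat", "dog", "moon", "."]
logits = [2.0, 0.5, 0.1, -1.0]

probs = softmax(logits)
predicted = vocab[probs.index(max(probs))]  # greedy choice: most probable token

# The loss is small when the correct token already has high probability,
# and large when the model assigned it low probability.
loss_if_mat = next_token_loss(logits, vocab.index("mat"))
loss_if_moon = next_token_loss(logits, vocab.index("moon"))

print(predicted, round(loss_if_mat, 3), round(loss_if_moon, 3))
```

Training repeats this calculation over many sequences and nudges the parameters so that the loss on the true next token goes down.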

Key Facts

- LLM stands for Large Language Model, a neural network trained on very large text datasets.

- Text is split into tokens, which may be whole words, parts of words, or punctuation marks.

- A common training objective is next-token prediction: P(token_t | token_1, token_2, ..., token_{t-1}).

- Attention is often computed with scaled dot-product attention: Attention(Q, K, V) = softmax(QK^T / sqrt(d_k))V, where Q, K, and V are the query, key, and value matrices and d_k is the dimension of the key vectors.

- Embeddings map tokens to vectors, so each token becomes a numerical representation in high-dimensional space.

- Model quality often improves with more parameters, more data, and more compute, but this also raises cost and energy use.
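The scaled dot-product attention formula listed above can be sketched in plain Python. This is an illustrative sketch, not a production implementation: the small Q, K, and V matrices are made-up values, and the causal mask that decoder-style LLMs typically apply (so each position attends only to earlier ones) is left out to keep the formula visible.

```python
import math

def softmax(row):
    """Normalize a list of scores into weights that sum to 1."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    s = sum(exps)
    return [e / s for e in exps]

def attention(Q, K, V):
    """Compute softmax(Q K^T / sqrt(d_k)) V on lists of lists."""
    d_k = len(K[0])
    weights = []
    for q in Q:
        # Dot product of this query with every key, scaled by sqrt(d_k)
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in K]
        weights.append(softmax(scores))
    d_v = len(V[0])
    # Each output row is a weighted average of the value vectors
    output = [
        [sum(w * v[j] for w, v in zip(row, V)) for j in range(d_v)]
        for row in weights
    ]
    return output, weights

# Made-up example: 3 tokens, 2-dimensional queries, keys, and values
Q = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
K = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
V = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]

output, weights = attention(Q, K, V)
for row in weights:
    print([round(w, 3) for w in row])
```

Each row of `weights` shows how strongly one token attends to every token in the sequence, and each row sums to 1.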

Vocabulary

- Token: A token is a small unit of text, such as a word, part of a word, or punctuation, that the model processes.

- Transformer: A transformer is a neural network architecture that uses attention to process relationships between tokens in a sequence.

- Parameter: A parameter is a learned numerical value inside the model that changes during training and affects its output.

- Embedding: An embedding is a vector of numbers that represents the meaning or usage pattern of a token.

- Attention: Attention is a mechanism that lets the model weigh which earlier tokens are most important for predicting the next one.
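As a rough illustration of how an embedding can represent a token's usage pattern, the sketch below compares hand-written vectors with cosine similarity. The three-dimensional vectors are hypothetical; real models learn embeddings with hundreds or thousands of dimensions during training.

```python
import math

# Hypothetical embedding table (made-up values, not from any real model)
embedding_table = {
    "cat": [0.9, 0.8, 0.1],
    "dog": [0.8, 0.9, 0.2],
    "car": [0.1, 0.2, 0.9],
}

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: near 1 means similar direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

sim_cat_dog = cosine_similarity(embedding_table["cat"], embedding_table["dog"])
sim_cat_car = cosine_similarity(embedding_table["cat"], embedding_table["car"])

print(round(sim_cat_dog, 3), round(sim_cat_car, 3))
```

In this toy table, "cat" and "dog" point in nearly the same direction while "cat" and "car" do not, which mirrors how tokens used in similar contexts tend to end up with similar embeddings.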

Common Mistakes to Avoid

- Thinking an LLM stores exact answers for every question, which is wrong because it mainly learns statistical patterns and generates outputs from those patterns.

- Assuming fluent text means true understanding, which is wrong because the model can produce convincing language without human-like reasoning or awareness.

- Ignoring tokenization when estimating input size, which is wrong because models process tokens rather than plain words and token counts affect limits and cost.

- Believing larger models are always correct, which is wrong because even very large LLMs can hallucinate facts, reflect bias, or fail on simple reasoning tasks.
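The tokenization mistake above is easy to see with a toy example. The sketch below uses a simple regular expression as a stand-in tokenizer; this is an assumption for illustration only, since real LLM tokenizers use learned subword vocabularies and will produce different counts. The function names are hypothetical.

```python
import re

def toy_tokenize(text):
    """Split text into word and punctuation tokens (a stand-in for a real tokenizer)."""
    return re.findall(r"\w+|[^\w\s]", text)

def fits_in_context(text, context_window):
    """Check whether the text's token count stays within the context window."""
    return len(toy_tokenize(text)) <= context_window

text = "Hello, world! Isn't tokenization fun?"

word_count = len(text.split())  # whitespace-separated words
tokens = toy_tokenize(text)
token_count = len(tokens)

print(word_count, token_count)
print(tokens)
```

Even with this simple splitter, the token count is higher than the word count, so estimating limits or cost from word counts alone would undershoot.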

Practice Questions

- 1. A short sentence is split into 18 tokens. If a model can handle 256 tokens in its context window, how many more tokens can be added before reaching the limit?

- 2. A training run processes 12,000 sequences, and each sequence contains 128 tokens. How many total tokens are processed?

- 3. Why can an LLM generate a confident but incorrect answer even when its grammar and style are polished?