Zero-Shot vs Few-Shot Prompting

In-Context Examples and When Each Approach Works Best

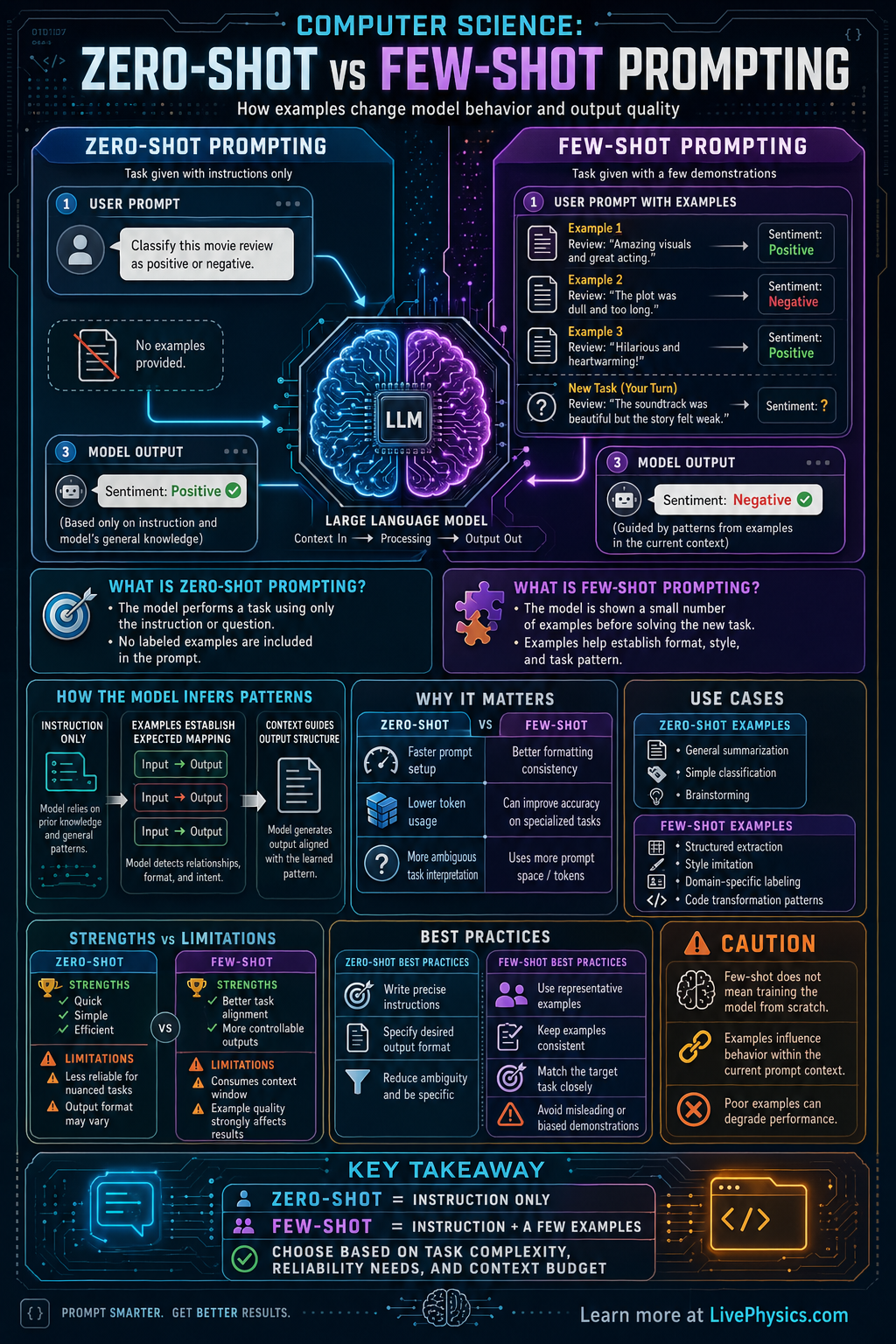

Zero-shot and few-shot prompting are two common ways to interact with large language models. In zero-shot prompting, you give the model only the task or instruction. In few-shot prompting, you give the task plus a small number of examples that show the pattern you want. This matters because the examples can strongly influence accuracy, format, tone, and consistency.

The difference comes from how the model uses context. A zero-shot prompt relies on the model's general training to infer what you mean from the instruction alone. A few-shot prompt adds short demonstrations, which help the model identify the desired structure or reasoning style. In practice, few-shot prompting often improves performance on specialized, ambiguous, or formatting-sensitive tasks, but it also uses more context space and can introduce bias from the chosen examples.
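The structural difference can be shown by building both prompt types as plain strings. This is a minimal Python sketch. The function names `zero_shot_prompt` and `few_shot_prompt` and the `Input:`/`Output:` layout are hypothetical choices made for illustration. No model is called, and real applications may use a chat-message format instead of a single string.

```python
# Hypothetical prompt builders: they only assemble text and call no model API.
from typing import List, Tuple


def zero_shot_prompt(instruction: str, query: str) -> str:
    """Instruction + task, with 0 worked examples."""
    return f"{instruction}\n\nInput: {query}\nOutput:"


def few_shot_prompt(instruction: str, examples: List[Tuple[str, str]], query: str) -> str:
    """Instruction + k (input, output) demonstrations + the new task."""
    if not examples:  # with k = 0 this is simply a zero-shot prompt
        return zero_shot_prompt(instruction, query)
    demos = "\n\n".join(f"Input: {x}\nOutput: {y}" for x, y in examples)
    # The new input comes last, with its output left blank for the model to fill in.
    return f"{instruction}\n\n{demos}\n\nInput: {query}\nOutput:"


if __name__ == "__main__":
    instruction = "Classify the sentiment of the comment as Positive or Negative."
    examples = [
        ("The lesson was clear and fun.", "Positive"),
        ("I could not follow the slides.", "Negative"),
    ]
    query = "The homework examples really helped."
    print(zero_shot_prompt(instruction, query))
    print("-" * 40)
    print(few_shot_prompt(instruction, examples, query))
```

The only difference between the two prompts is the block of demonstrations placed before the new input. That block is where few-shot prompting gets its control over format and labels, and it is also why it costs extra context space.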

Key Facts

- Zero-shot prompting: instruction + task, with 0 worked examples.

- Few-shot prompting: instruction + k examples + new task, where k is a small number, typically 1 to 5.

- Few-shot prompts are often structured as Input1 -> Output1, Input2 -> Output2, ..., Inputk -> Outputk, New Input -> ?

- Every example consumes context space, so total prompt length = instruction length + total example length + user query length.

- Few-shot prompting can improve consistency when the task requires a specific format, label set, or style.

- Poor examples can hurt results because model output often follows the pattern shown in the examples.
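The prompt-length formula above can be turned into a quick budget check. This sketch is a simplification. It counts words, as the practice questions on this page do, whereas real models measure the context window in tokens. The function names and sample numbers are hypothetical.

```python
# Hypothetical context-budget check. Lengths are in words to match this page;
# real models count tokens, which this sketch does not attempt to model.
from typing import Sequence


def prompt_length(instruction_len: int, example_lens: Sequence[int], query_len: int) -> int:
    """Total prompt length = instruction + all examples + user query."""
    return instruction_len + sum(example_lens) + query_len


def remaining_context(window: int, used: int) -> int:
    """Space left in the window (negative means the prompt does not fit)."""
    return window - used


if __name__ == "__main__":
    window = 2000
    zero = prompt_length(100, [], 40)               # zero-shot: no examples
    few = prompt_length(100, [200, 200, 200], 40)   # few-shot: k = 3
    print(zero, remaining_context(window, zero))    # 140 1860
    print(few, remaining_context(window, few))      # 740 1260
```

In this made-up case, three 200-word examples raise the prompt from 140 to 740 words, which leaves 1,260 of the 2,000 words instead of 1,860.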

Vocabulary

- Zero-shot prompting: A prompting method where the model receives only the instruction or task and no examples.

- Few-shot prompting: A prompting method where the model sees a small number of examples before solving a new task.

- Context window: The total amount of text the model can consider at one time when generating a response.

- Prompt: The text input given to a model, including instructions, examples, and the user's task.

- Generalization: The ability of a model to apply learned patterns to new inputs it has not seen before.

Common Mistakes to Avoid

- Using zero-shot for a highly specific formatting task, then expecting perfect structure. This is wrong because the model may understand the topic but not the exact output pattern you want.

- Giving too many long examples in a few-shot prompt, then wondering why the response becomes slower or less focused. This is wrong because examples consume context space and can distract from the final task.

- Choosing inconsistent few-shot examples, such as mixed labels or different answer styles. This is wrong because the model often imitates the inconsistency shown in the prompt.

- Assuming few-shot is always better than zero-shot. This is wrong because simple tasks may work well with zero-shot, while weak or biased examples can reduce quality.
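Inconsistent labels are easy to catch before a prompt is sent. The following sketch uses a hypothetical `inconsistent_examples` helper to flag any demonstration whose label is not in the intended label set. This includes near-misses such as a lowercase variant. It checks labels only and does not judge whether the examples are well chosen.

```python
# Hypothetical checker for one mistake listed above: few-shot examples whose
# labels fall outside the intended label set or use a different spelling.
from typing import Iterable, List, Set, Tuple


def inconsistent_examples(
    examples: Iterable[Tuple[str, str]], allowed_labels: Set[str]
) -> List[Tuple[int, str]]:
    """Return (index, label) for every demonstration whose label is not allowed."""
    return [(i, label) for i, (_, label) in enumerate(examples) if label not in allowed_labels]


if __name__ == "__main__":
    examples = [
        ("Great explanation!", "Positive"),
        ("Too fast to follow.", "negative"),   # wrong capitalization
        ("It was okay, I guess.", "Neutral"),  # label outside the set
    ]
    print(inconsistent_examples(examples, {"Positive", "Negative"}))
    # [(1, 'negative'), (2, 'Neutral')]
```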

Practice Questions

1. A teacher wants an AI to classify student comments as Positive or Negative. With zero-shot, the prompt contains only the instruction and one new comment. With few-shot, the prompt contains the instruction, 3 labeled examples, and one new comment. How many worked examples are provided in each method?

2. A model can handle 1200 words of context. A zero-shot prompt uses 150 words of instruction and 50 words for the user task. A few-shot version uses the same instruction and task plus 4 examples of 120 words each. How many words does each prompt use, and how many words of context remain in each case?

3. A user asks for answers in a strict JSON format. Explain why few-shot prompting may outperform zero-shot prompting for this task, and describe one situation where zero-shot would still be a reasonable choice.