Statistical Power

Detecting Real Effects in Your Study

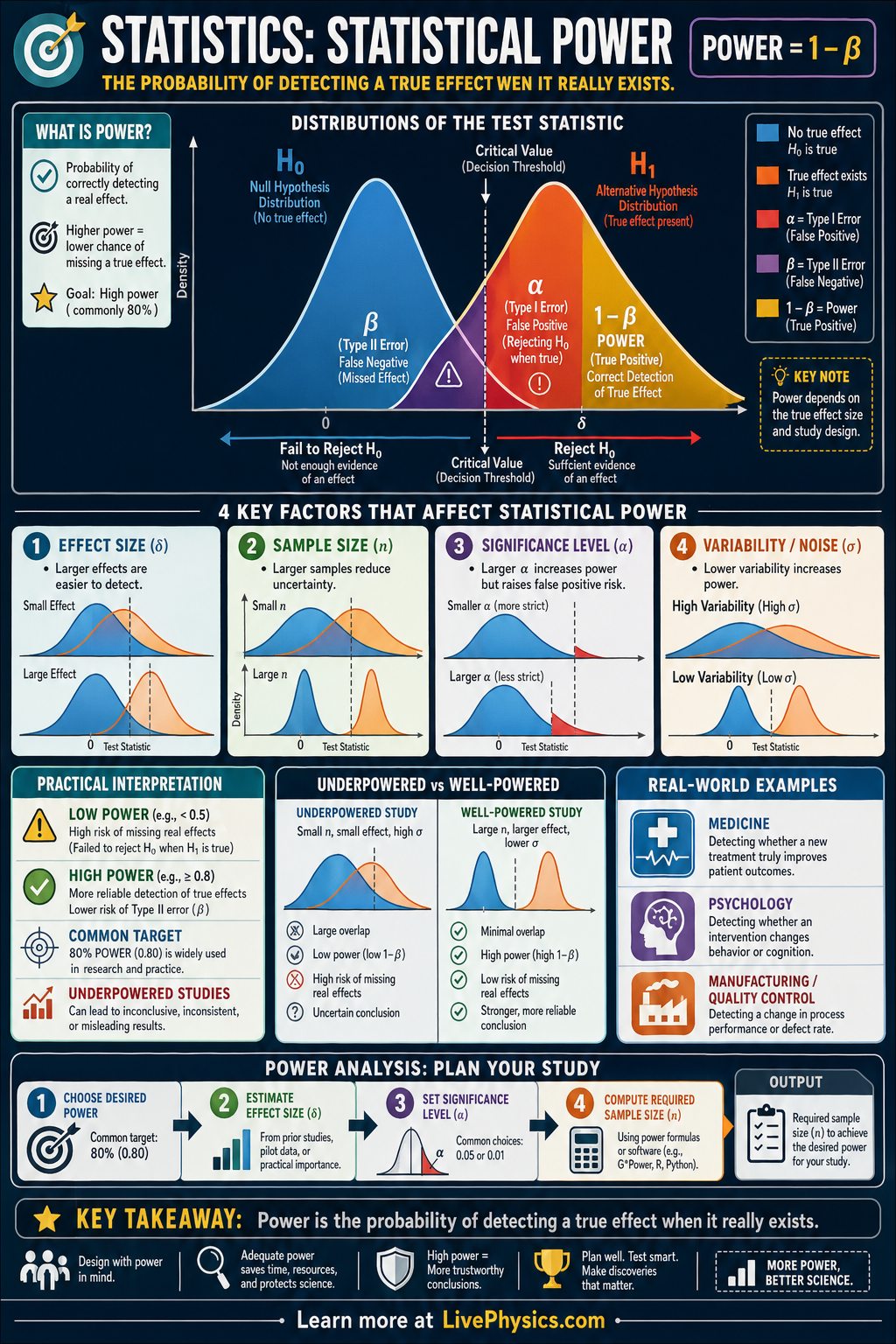

Statistical power is the probability that a study will correctly detect a real effect when that effect truly exists. It matters because a low-power study can miss important findings, leading researchers to conclude that nothing is happening when there actually is a meaningful difference. Power helps scientists design experiments that are sensitive enough to answer their research questions. It is a core idea in hypothesis testing, especially when planning sample size.

Power is closely tied to Type II error, denoted by β, which is the chance of failing to reject the null hypothesis when the alternative hypothesis is true. The relationship is Power = 1 - β, so increasing power means reducing the chance of missing a real effect. Power depends on several factors, including effect size, sample size, variability, and the significance level α. In distribution diagrams, power is shown as the area under the alternative distribution beyond the critical threshold.
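The area-under-the-curve description can be made concrete for one simple case. The sketch below assumes a one-sided, one-sample z-test with known standard deviation σ, where the true mean exceeds the null value by `effect`. The function name `z_test_power` and the example numbers are illustrative and do not come from the text above. Under these assumptions the critical threshold is z₁₋α on the standard-normal scale, the alternative distribution is shifted right by effect·√n/σ, and power is the area of that shifted curve beyond the threshold.

```python
from math import sqrt
from statistics import NormalDist

STD_NORMAL = NormalDist()  # mean 0, standard deviation 1


def z_test_power(effect: float, sigma: float, n: int, alpha: float = 0.05) -> float:
    """Power of a one-sided, one-sample z-test with known sigma (illustrative sketch)."""
    # Critical threshold: reject H0 when the z statistic exceeds this value.
    z_crit = STD_NORMAL.inv_cdf(1 - alpha)
    # Under H1 the z statistic is normal with mean effect * sqrt(n) / sigma.
    shift = effect * sqrt(n) / sigma
    # Power = area under the shifted (alternative) curve beyond the threshold.
    return 1 - STD_NORMAL.cdf(z_crit - shift)


power = z_test_power(effect=0.5, sigma=1.0, n=25, alpha=0.05)
beta = 1 - power  # Type II error rate, since Power = 1 - β
print(f"power = {power:.3f}, beta = {beta:.3f}")  # prints: power = 0.804, beta = 0.196
```

Other tests (two-sided, t-tests, comparisons of two groups) use different distributions, so this formula is only a sketch of the idea, not a general power calculator.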

Key Facts

- Statistical power is the probability of rejecting the null hypothesis H₀ when the alternative hypothesis H₁ is true.

- Power = 1 - β

- Type I error rate: α = P(reject H₀ | H₀ is true)

- Type II error rate: β = P(fail to reject H₀ | H₁ is true)

- Larger sample size n usually increases power by reducing the standard error, which for a sample mean is σ/√n.

- Larger effect size and lower variability both increase the separation between distributions and raise power.
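These facts can be checked numerically by varying one factor at a time. This sketch assumes the same illustrative setting as a one-sided, one-sample z-test with known σ; the helper `z_test_power` and the baseline values (effect 0.5, σ = 1, n = 25, α = 0.05) are hypothetical choices for demonstration.

```python
from math import sqrt
from statistics import NormalDist

STD_NORMAL = NormalDist()


def z_test_power(effect, sigma, n, alpha=0.05):
    # One-sided, one-sample z-test with known sigma (assumed setting).
    z_crit = STD_NORMAL.inv_cdf(1 - alpha)
    standard_error = sigma / sqrt(n)  # shrinks as n grows, grows with sigma
    return 1 - STD_NORMAL.cdf(z_crit - effect / standard_error)


baseline = dict(effect=0.5, sigma=1.0, n=25, alpha=0.05)

# Vary one factor at a time, holding the others at the baseline values.
sweeps = {
    "n": [10, 25, 50, 100],
    "effect": [0.2, 0.5, 0.8],
    "sigma": [0.5, 1.0, 2.0],
}
results = {}
for factor, values in sweeps.items():
    results[factor] = []
    for value in values:
        params = {**baseline, factor: value}
        results[factor].append(z_test_power(**params))
    formatted = ", ".join(f"{v}: {p:.3f}" for v, p in zip(values, results[factor]))
    print(f"{factor:>6} -> {formatted}")
```

In this setting power rises with n and with effect size and falls as σ grows, because each change widens or narrows the gap between the alternative distribution and the critical threshold.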

Vocabulary

- Statistical power: The probability that a test correctly detects a real effect when the effect truly exists.

- Type I error: Rejecting the null hypothesis even though it is actually true (a false positive).

- Type II error: Failing to reject the null hypothesis even though the alternative hypothesis is true (a false negative, or missed effect).

- Effect size: A measure of how large the true difference or relationship is in the population.

- Critical threshold: The cutoff value that separates the reject region from the fail-to-reject region in a hypothesis test.
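Type I and Type II errors can be illustrated by simulating many repeated studies. The sketch below is a Monte Carlo illustration under assumed settings (one-sided, one-sample z-test of H₀: mean = 0, known σ = 1, n = 25, α = 0.05, and a true mean of 0.5 when H₁ holds); the helper `rejection_rate` and all numbers are hypothetical. When data are generated with H₀ true, the rejection rate estimates the Type I error rate; when data are generated with H₁ true, it estimates power, and one minus that rate estimates β.

```python
import random
from math import sqrt
from statistics import NormalDist, fmean


def rejection_rate(true_mean, sigma=1.0, n=25, alpha=0.05, trials=10_000, seed=1):
    """Fraction of simulated studies that reject H0: mean = 0 (one-sided z-test)."""
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    z_crit = NormalDist().inv_cdf(1 - alpha)  # critical threshold
    rejections = 0
    for _ in range(trials):
        sample = [rng.gauss(true_mean, sigma) for _ in range(n)]
        z = fmean(sample) / (sigma / sqrt(n))  # z statistic computed assuming H0
        if z > z_crit:
            rejections += 1
    return rejections / trials


type_i_rate = rejection_rate(true_mean=0.0)  # H0 true: every rejection is a Type I error
power_est = rejection_rate(true_mean=0.5)    # H1 true: every rejection is a correct detection
print(f"Type I error ≈ {type_i_rate:.3f} (target α = 0.05)")
print(f"Power ≈ {power_est:.3f}, Type II error β ≈ {1 - power_est:.3f}")
```

With these settings the first estimate should land near α = 0.05 and the second near the theoretical power of roughly 0.80 for this z-test; the exact values shift slightly with the seed and the number of trials.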

Common Mistakes to Avoid

- Confusing power with the significance level α, which is wrong because α is the chance of a false positive while power is the chance of detecting a real effect.

- Assuming a non-significant result proves there is no effect, which is wrong because a low-power study may simply fail to detect an effect that exists.

- Thinking power is fixed for every study, which is wrong because power changes with sample size, variability, effect size, and the chosen α level.

- Believing that increasing α has no tradeoff, which is wrong because a larger α can increase power but also raises the chance of a Type I error.
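The α tradeoff can be seen by holding everything else fixed and changing only α. Under an assumed one-sided, one-sample z-test (effect 0.5, σ = 1, n = 25; the helper `power_at` is hypothetical), raising α lowers the critical threshold: power goes up, and the Type I error rate, which equals α by construction, goes up with it.

```python
from math import sqrt
from statistics import NormalDist

STD_NORMAL = NormalDist()


def power_at(alpha, effect=0.5, sigma=1.0, n=25):
    """Critical threshold and power for a one-sided, one-sample z-test (assumed setting)."""
    z_crit = STD_NORMAL.inv_cdf(1 - alpha)
    power = 1 - STD_NORMAL.cdf(z_crit - effect * sqrt(n) / sigma)
    return z_crit, power


for alpha in (0.01, 0.05, 0.10):
    z_crit, power = power_at(alpha)
    # The Type I error rate of this test is alpha itself.
    print(f"alpha = {alpha:.2f}  threshold z = {z_crit:.3f}  power = {power:.3f}")
```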

Practice Questions

1. A hypothesis test has β = 0.18. What is the statistical power of the test?

2. A researcher increases the sample size from 25 to 100 while keeping the effect size and α the same. In general, does power increase or decrease, and why?

3. Two studies test the same effect with the same α, but Study A has much more variability in the data than Study B. Which study is likely to have lower power, and what is the reason?
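Power is often used before data collection to choose a sample size. One way to sketch that planning step is to search for the smallest n whose power reaches a target such as 0.80. This assumes the same illustrative one-sided, one-sample z-test with known σ; the helpers `z_test_power` and `smallest_n_for_power`, the 0.80 target, and the effect sizes are hypothetical choices, not values from this page.

```python
from math import sqrt
from statistics import NormalDist

STD_NORMAL = NormalDist()


def z_test_power(effect, sigma, n, alpha=0.05):
    # One-sided, one-sample z-test with known sigma (assumed setting).
    z_crit = STD_NORMAL.inv_cdf(1 - alpha)
    return 1 - STD_NORMAL.cdf(z_crit - effect * sqrt(n) / sigma)


def smallest_n_for_power(effect, sigma, target=0.80, alpha=0.05, n_max=100_000):
    """Smallest sample size whose power reaches the target (simple linear search)."""
    for n in range(2, n_max + 1):
        if z_test_power(effect, sigma, n, alpha) >= target:
            return n
    raise ValueError("target power not reached within n_max")


for effect in (0.2, 0.5, 0.8):
    n = smallest_n_for_power(effect, sigma=1.0)
    print(f"effect = {effect}: smallest n for 80% power = {n}")
```

A linear search keeps the logic transparent; for this simple test a closed-form approximation, n ≈ ((z₁₋α + z₁₋β)·σ/effect)², rounded up, gives the same kind of answer.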