Type I and Type II Errors

False Positives and False Negatives

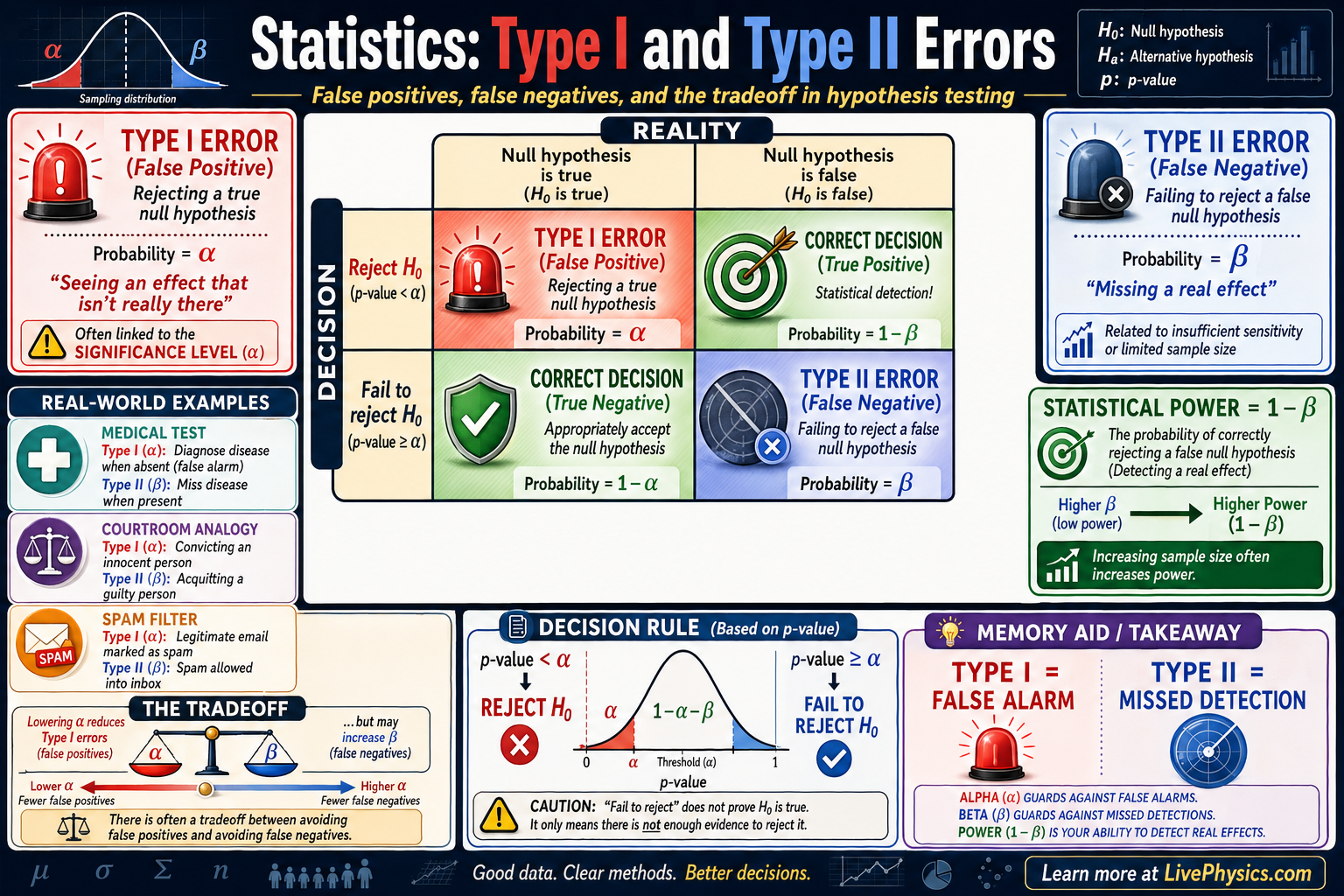

In hypothesis testing, statisticians make decisions using sample data, but those decisions can be wrong. Type I and Type II errors describe the two main ways a test can fail when deciding whether to reject a null hypothesis. These ideas matter because every real test, from medical screening to quality control, balances the risk of false alarms against the risk of missed effects. Understanding both errors helps students interpret test results more carefully.

A Type I error happens when the null hypothesis is actually true, but the test rejects it anyway. A Type II error happens when the null hypothesis is actually false, but the test fails to reject it. The probability of a Type I error is called $\alpha$ (the significance level), and the probability of a Type II error is called $\beta$. The power of a test is $1 - \beta$, the probability of correctly rejecting a false null hypothesis. Reducing missed detections therefore means increasing power, usually through better study design, larger samples, or larger true effects.
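The Python sketch below makes $\alpha$, $\beta$, and power concrete for one specific case. It is a one-sided z-test of $H_0: \mu = \mu_0$ against $H_a: \mu > \mu_0$. It assumes the population standard deviation $\sigma$ is known and that the true mean is some value $\mu_1$ when $H_0$ is false. The function name `error_rates` and all the numbers are hypothetical, chosen only for illustration. Only the standard library's `statistics.NormalDist` is used.

```python
from math import sqrt
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal: mean 0, standard deviation 1

def error_rates(alpha, mu0, mu1, sigma, n):
    """Return (P(Type I), P(Type II), power) for a one-sided z-test of
    H0: mu = mu0 vs Ha: mu > mu0, assuming known sigma and a true mean mu1."""
    z_crit = STD_NORMAL.inv_cdf(1 - alpha)    # reject H0 when z > z_crit
    type1 = 1 - STD_NORMAL.cdf(z_crit)        # P(reject | H0 true); equals alpha
    shift = (mu1 - mu0) / (sigma / sqrt(n))   # mean of z when the true mean is mu1
    beta = STD_NORMAL.cdf(z_crit - shift)     # P(fail to reject | mu = mu1)
    return type1, beta, 1 - beta

# Hypothetical setup: null mean 100, true mean 103, sigma 10, n = 30.
a, b, power = error_rates(alpha=0.05, mu0=100, mu1=103, sigma=10, n=30)
print(f"alpha={a:.3f} beta={b:.3f} power={power:.3f}")
```

With these hypothetical numbers the power comes out close to one half. A real 3-unit shift would be missed about as often as it is detected.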

Key Facts

- Type I error: reject $H_0$ when $H_0$ is true.

- Type II error: fail to reject $H_0$ when $H_0$ is false.

- P(Type I error) = $\alpha$.

- P(Type II error) = $\beta$.

- Power = $1 - \beta$.

- Lowering $\alpha$ makes rejecting harder and, if the sample size stays fixed, usually increases $\beta$.
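The trade-off in the last fact can be checked numerically. This sketch assumes a one-sided z-test with known $\sigma$, using a hypothetical true effect of 3 units and $\sigma = 10$. `type2_rate` is an illustrative helper, not a library function.

```python
from math import sqrt
from statistics import NormalDist

Z = NormalDist()  # standard normal distribution

def type2_rate(alpha, effect, sigma, n):
    """Beta for a one-sided z-test with known sigma, assuming the true
    mean exceeds the null value by `effect`."""
    z_crit = Z.inv_cdf(1 - alpha)                    # rejection cutoff for z
    return Z.cdf(z_crit - effect / (sigma / sqrt(n)))

# Compare two significance levels at two hypothetical sample sizes.
for n in (30, 120):
    for alpha in (0.05, 0.01):
        beta = type2_rate(alpha, effect=3, sigma=10, n=n)
        print(f"n={n:3d} alpha={alpha:.2f} beta={beta:.3f} power={1 - beta:.3f}")
```

Holding $n$ fixed, moving $\alpha$ from 0.05 to 0.01 raises $\beta$. Quadrupling $n$ lowers $\beta$ at either $\alpha$, which is why larger samples are the usual way to lower both error rates at once.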

Vocabulary

- Null hypothesis: the default claim, usually written as $H_0$, that says there is no effect, no difference, or no change.

- Alternative hypothesis: the competing claim, usually written as $H_a$ or $H_1$, that says an effect, difference, or change exists.

- Significance level: the chosen cutoff, written as $\alpha$, that sets the maximum tolerated probability of a Type I error.

- Type I error: a false positive, in which the test rejects the null hypothesis even though it is actually true.

- Type II error: a false negative, in which the test does not reject the null hypothesis even though it is actually false.
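These definitions amount to a 2-by-2 table that crosses the true state of $H_0$ with the test's decision. The two errors sit in the mismatched cells. Below is a minimal Python sketch of that mapping. `classify` is a hypothetical helper name.

```python
def classify(h0_true: bool, rejected: bool) -> str:
    """Label one test decision by comparing it with the truth,
    which in practice is unknown to whoever runs the test."""
    if h0_true and rejected:
        return "Type I error (false positive)"
    if not h0_true and not rejected:
        return "Type II error (false negative)"
    return "correct decision"

# Enumerate all four combinations of truth and decision.
for h0_true in (True, False):
    for rejected in (True, False):
        print(f"H0 true: {h0_true}, rejected: {rejected} -> {classify(h0_true, rejected)}")
```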

Common Mistakes to Avoid

- Saying a Type I error means the null hypothesis is false, because a Type I error actually happens when the null hypothesis is true but gets rejected anyway.

- Thinking that failing to reject $H_0$ proves $H_0$ is true, because the test may simply lack enough evidence or power to detect a real effect.

- Confusing $\alpha$ with $\beta$, because $\alpha$ is the probability of a false positive while $\beta$ is the probability of a false negative.

- Assuming that lowering $\alpha$ improves everything, because making rejection harder can increase $\beta$ and reduce power when the sample size does not change.
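A small Monte Carlo simulation shows why failing to reject is not proof of $H_0$, and keeps $\alpha$ and $\beta$ distinct. The settings are assumptions for illustration: a one-sided z-test at $\alpha = 0.05$, known $\sigma = 1$, samples of size 20, and a hypothetical true effect of 0.2. `rejection_rate` is an illustrative helper. When $H_0$ is true, the rejection rate should land near $\alpha$. A real but small effect, by contrast, is missed most of the time.

```python
import random
from math import sqrt
from statistics import NormalDist, fmean

Z_CRIT = NormalDist().inv_cdf(0.95)  # one-sided cutoff for alpha = 0.05

def rejection_rate(true_mean, mu0=0.0, sigma=1.0, n=20, trials=20_000, seed=1):
    """Fraction of simulated samples in which a one-sided z-test
    (known sigma, assumed) rejects H0: mu = mu0 in favor of mu > mu0."""
    rng = random.Random(seed)  # fixed seed for reproducible output
    rejections = 0
    for _ in range(trials):
        sample = [rng.gauss(true_mean, sigma) for _ in range(n)]
        z = (fmean(sample) - mu0) / (sigma / sqrt(n))
        if z > Z_CRIT:
            rejections += 1
    return rejections / trials

type1 = rejection_rate(true_mean=0.0)  # H0 true: estimates alpha
power = rejection_rate(true_mean=0.2)  # H0 false (small effect): estimates power
print(f"estimated alpha = {type1:.3f}")
print(f"estimated power = {power:.3f}, so beta is about {1 - power:.3f}")
```

The theoretical power for these settings is about 0.23. The test therefore fails to reject roughly three times out of four even though $H_0$ is false.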

Practice Questions

- 1 A medical test uses significance level $\alpha$. What is the probability of a Type I error, and what does that mean in words?

- 2 A hypothesis test has Type II error probability $\beta$. Calculate the power of the test.

- 3 A researcher lowers $\alpha$ without increasing the sample size. Explain how this change is likely to affect the chances of Type I and Type II errors.