Diffusion Models vs LLMs

Image Generation vs Text Generation - Architecture Differences

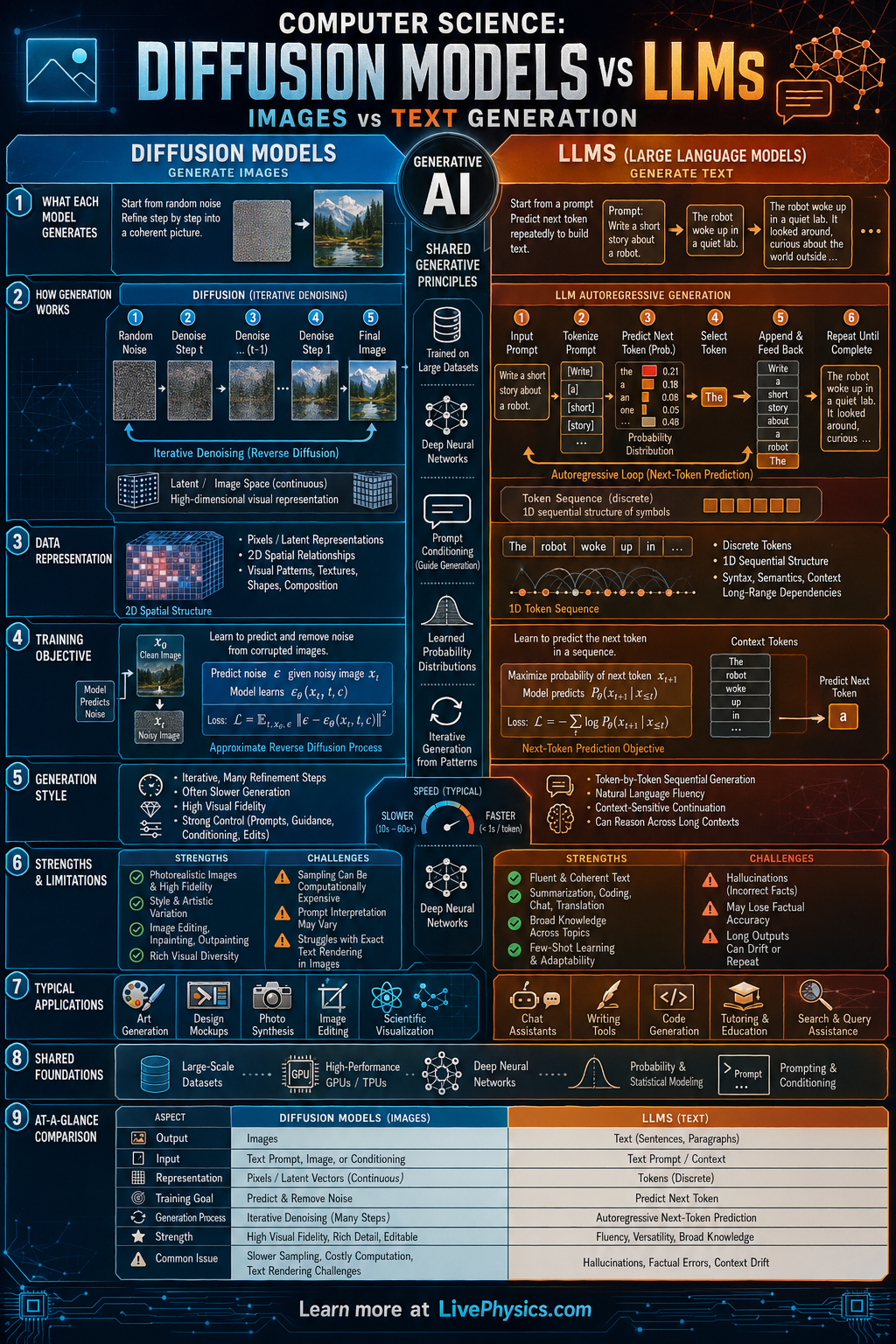

Generative AI includes systems that create new content such as images, text, audio, and code. Two of the most important families are diffusion models and large language models, or LLMs. Diffusion models are best known for image generation, while LLMs are best known for text generation. Comparing them helps students see how different algorithms can solve similar creative tasks using different data structures and training goals.

A diffusion model learns to remove noise from data step by step until a clear image appears. An LLM learns the probability of the next token in a sequence, which lets it generate text one token at a time. Both kinds of model are neural networks trained on huge datasets, but they represent and generate information differently. That difference explains why a diffusion model typically refines an entire image over many denoising steps, while an LLM typically produces text autoregressively, appending one token after another.
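The next-token idea can be sketched with a toy example. The lookup table below is a hypothetical stand-in for an LLM: it conditions only on the previous token, whereas a real model conditions on the whole prefix using a neural network. The token names and probabilities are invented for illustration.

```python
import random

# Hypothetical toy "model": next-token probabilities that depend only on the
# previous token. A real LLM would compute these from the entire prefix.
NEXT_TOKEN_PROBS = {
    "<start>": {"the": 0.6, "a": 0.4},
    "the": {"cat": 0.5, "dog": 0.5},
    "a": {"cat": 0.7, "dog": 0.3},
    "cat": {"sleeps": 0.8, "<end>": 0.2},
    "dog": {"sleeps": 0.6, "<end>": 0.4},
    "sleeps": {"<end>": 1.0},
}


def generate(rng, max_tokens=10):
    """Sample one token at a time until <end> or the length limit."""
    tokens = ["<start>"]
    for _ in range(max_tokens):
        probs = NEXT_TOKEN_PROBS[tokens[-1]]
        choices = list(probs.keys())
        weights = list(probs.values())
        next_token = rng.choices(choices, weights=weights, k=1)[0]
        if next_token == "<end>":
            break
        tokens.append(next_token)  # the new token becomes part of the context
    return tokens[1:]


def sequence_probability(tokens):
    """Multiply the conditional probabilities of each token, then of <end>."""
    prob = 1.0
    prev = "<start>"
    for tok in tokens + ["<end>"]:
        prob *= NEXT_TOKEN_PROBS[prev].get(tok, 0.0)
        prev = tok
    return prob


sample = generate(random.Random(0))
p = sequence_probability(["the", "cat", "sleeps"])  # 0.6 * 0.5 * 0.8 * 1.0
```

Each pass through the loop depends on what was generated before it, which is the sense in which the process is autoregressive.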

Key Facts

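- Diffusion training often adds noise in a forward process and learns the reverse process: x_t = sqrt(alpha_t) x_0 + sqrt(1 - alpha_t) epsilon, where x_0 is the clean data, epsilon is standard Gaussian noise, and alpha_t (often written as alpha-bar_t, a cumulative product over steps) sets how much of the original signal remains at step t.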

- LLMs factor the probability of a sequence into next-token predictions: P(x_1, x_2, ..., x_n) = product over t of P(x_t | x_<t), where x_<t means all tokens before position t.

- Image generation in diffusion models is iterative, often requiring many denoising steps from random noise to final image.

- Text generation in LLMs is autoregressive, producing one token at a time based on previous tokens.

- Transformer attention is central to LLMs: Attention(Q,K,V) = softmax(QK^T / sqrt(d_k))V

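- A common LLM training loss is cross entropy: L = -sum y log(p), where y is the one-hot target and p is the predicted distribution. For a single token this reduces to L = -log(p), with p the probability assigned to the correct token.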
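The attention and cross-entropy formulas above can be tried out on small lists of numbers. The following is a minimal sketch in plain Python, not how a production library implements them: the function names are chosen here for illustration, and the causal mask that LLMs apply during generation is left out to keep the example short.

```python
import math


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(probs, target_index):
    """Loss for one token with a one-hot target: L = -log(p_correct)."""
    return -math.log(probs[target_index])


def attention(Q, K, V):
    """Scaled dot-product attention: softmax(QK^T / sqrt(d_k)) V.

    Q, K, V are lists of row vectors. No causal mask is applied here.
    """
    d_k = len(K[0])
    output = []
    for q in Q:
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in K
        ]
        weights = softmax(scores)
        # Weighted average of the value vectors, one output dimension at a time.
        output.append(
            [sum(w * v[j] for w, v in zip(weights, V)) for j in range(len(V[0]))]
        )
    return output


loss = cross_entropy([0.25, 0.75], target_index=1)  # -ln(0.75)
mixed = attention(Q=[[0.0, 0.0]], K=[[1.0, 0.0], [0.0, 1.0]], V=[[1.0, 2.0], [3.0, 4.0]])
```

With an all-zero query, both keys score the same, so the attention weights are equal and the output is the plain average of the two value vectors. A higher probability on the correct token gives a lower loss.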

Vocabulary

- Diffusion model: A generative model that learns to create data by reversing a gradual noising process.

- Large language model: A neural network trained on large text datasets to predict and generate sequences of tokens.

- Token: A small unit of text, such as a word, part of a word, or symbol, used as input and output by an LLM.

- Autoregressive generation: A generation method where the model produces the next output element using previously generated elements.

- Denoising: The process of removing random noise from corrupted data to recover a meaningful signal.
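To make noising and denoising concrete, here is a small sketch that applies the forward formula from Key Facts to a short list of numbers and then inverts it. It makes two simplifying assumptions: the "image" is just three values, and the true noise is passed in where a trained network's noise prediction would normally go. Real samplers also take many small steps rather than one jump, and the function names are illustrative.

```python
import math
import random


def add_noise(x0, alpha_t, rng):
    """Forward process: x_t = sqrt(alpha_t) * x_0 + sqrt(1 - alpha_t) * epsilon."""
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    x_t = [
        math.sqrt(alpha_t) * x + math.sqrt(1.0 - alpha_t) * e
        for x, e in zip(x0, eps)
    ]
    return x_t, eps


def estimate_clean(x_t, eps_pred, alpha_t):
    """Solve the forward formula for x_0, given a noise estimate eps_pred."""
    return [
        (x - math.sqrt(1.0 - alpha_t) * e) / math.sqrt(alpha_t)
        for x, e in zip(x_t, eps_pred)
    ]


rng = random.Random(0)
x0 = [0.2, -0.5, 1.0]          # toy "clean" data
x_t, eps = add_noise(x0, alpha_t=0.5, rng=rng)

# Assumption: a perfect noise prediction. A trained model only approximates eps.
recovered = estimate_clean(x_t, eps, alpha_t=0.5)
```

With a perfect noise estimate, `recovered` matches `x0` up to floating-point rounding. The quality of a real diffusion model depends on how well its network approximates that noise at each step.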

Common Mistakes to Avoid

- Assuming diffusion models and LLMs work the same way, which is wrong because diffusion models usually refine noisy continuous data while LLMs predict discrete tokens in sequence.

- Thinking an LLM generates an entire paragraph at once, which is wrong because it typically generates one token at a time and updates its context after each step.

- Believing diffusion models directly memorize and paste training images, which is wrong because they learn statistical patterns for reversing noise. Models can still reproduce near-copies of some training images, so memorization remains a separate concern.

- Ignoring data type differences, which is wrong because images are usually represented as pixel arrays or latent tensors while text is represented as token sequences.
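The data type difference in the last point can be shown in a few lines. This is an illustrative sketch only: the whitespace tokenizer is hypothetical (real LLM tokenizers usually split text into subword pieces), and the tiny grid stands in for a full-size image or latent tensor.

```python
# Toy grayscale "image": a 2x3 grid of continuous pixel intensities in [0, 1].
image = [
    [0.0, 0.5, 1.0],
    [0.25, 0.75, 0.1],
]


def tokenize(text, vocab):
    """Hypothetical whitespace tokenizer: map each word to an integer ID."""
    return [vocab.setdefault(word, len(vocab)) for word in text.lower().split()]


vocab = {}
token_ids = tokenize("the cat saw the dog", vocab)
print(token_ids)  # [0, 1, 2, 0, 3]
```

Pixel values can be nudged by small amounts, which suits gradual denoising. Token IDs are discrete labels, so the model chooses among them by assigning probabilities.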

Practice Questions

1. An LLM assigns probabilities 0.5, 0.3, and 0.2 to three possible next tokens. If the correct token has probability 0.3, what is the cross entropy loss for that token using L = -log(p)? Give your answer in natural log form and as a decimal approximation.

2. A diffusion system uses 50 denoising steps to generate one image, and each step takes 0.08 s. How long does one image take to generate, and how long would 12 images take if generated one after another?

3. A student says that image generation and text generation are identical because both use neural networks. Explain one important difference in how diffusion models and LLMs generate outputs.