Encoder vs Decoder Models

BERT vs GPT - Bidirectional vs Autoregressive

Related Tools

Related Labs

Related Worksheets

Related Cheat Sheets

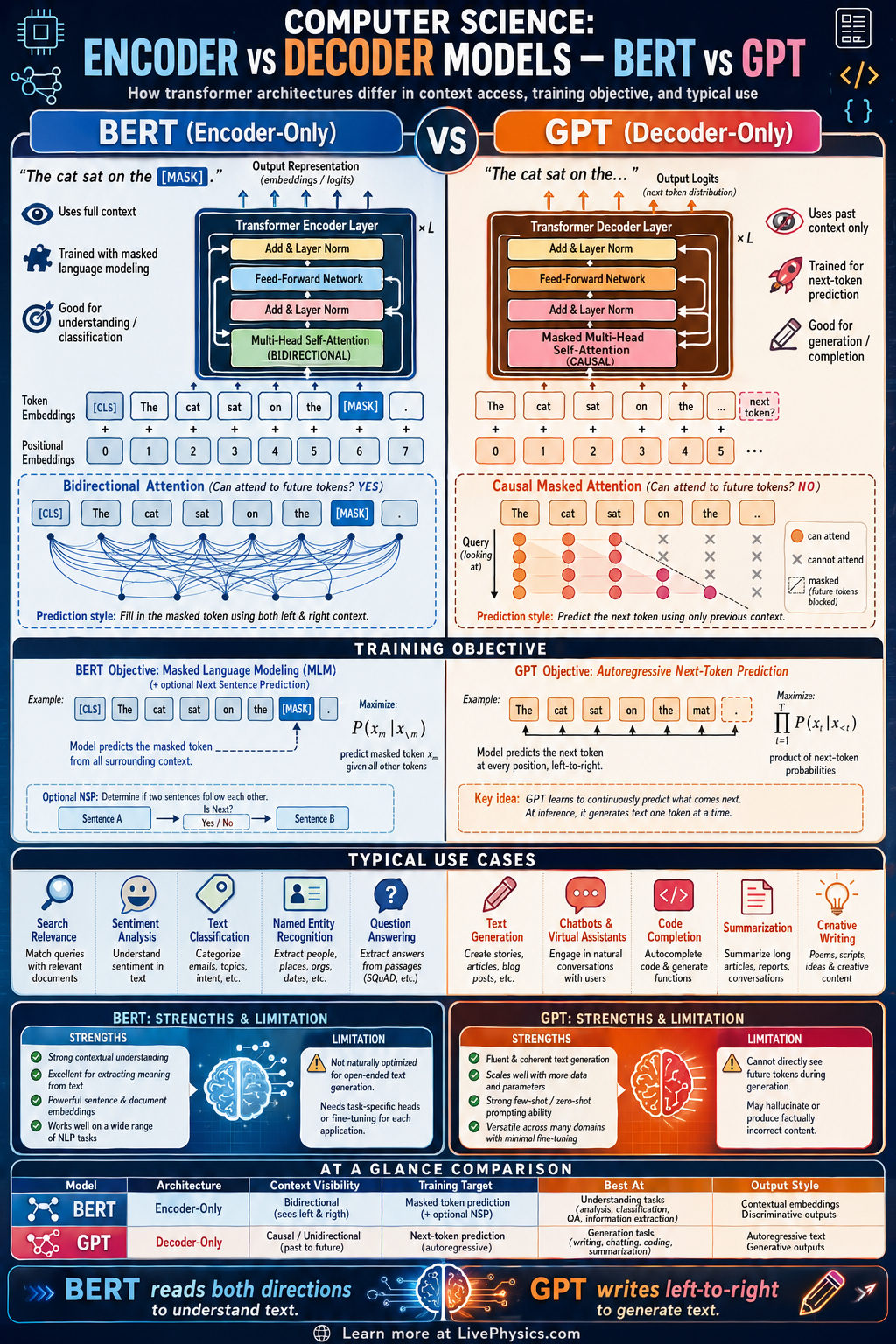

Transformer models power many modern language systems, but not all transformers process text in the same way. Encoder-only models like BERT and decoder-only models like GPT are built for different goals, even though both use attention mechanisms. Understanding the difference helps students explain why some models are better at classification and others are better at text generation. This comparison is central to natural language processing, search, chatbots, and AI-assisted writing.

BERT reads input with bidirectional context, so each word can attend to words on both sides during encoding. GPT generates text autoregressively, so each new token is predicted from earlier tokens only using masked self-attention. These architectural choices lead to different training objectives, strengths, and limitations. In practice, BERT is often used for understanding tasks, while GPT is often used for generation tasks.

Key Facts

- BERT is an encoder-only transformer that uses bidirectional self-attention over the input sequence.

- GPT is a decoder-only transformer that uses causal masked self-attention so token i can attend only to tokens 1 through i.

- Language modeling in GPT is autoregressive: P(x1, x2, ..., xn) = product from t=1 to n of P(xt | x1, ..., x(t-1)).

- BERT is commonly trained with masked language modeling, where some input tokens are hidden and predicted from context.

- Self-attention uses weighted similarity: Attention(Q, K, V) = softmax(QK^T / sqrt(d_k))V.

- BERT is strong for classification, tagging, and sentence understanding, while GPT is strong for open-ended generation, completion, and dialogue.

Vocabulary

- Encoder

- A transformer component that converts an input sequence into context-rich representations for each token.

- Decoder

- A transformer component that predicts output tokens step by step using previously seen tokens.

- Bidirectional context

- Information from both earlier and later words in a sequence used to represent a token.

- Causal mask

- A rule in attention that blocks a token from seeing future tokens during prediction.

- Autoregressive generation

- A process where a model generates one token at a time based on the tokens already produced.

Common Mistakes to Avoid

- Assuming BERT and GPT are the same because both are transformers, which is wrong because their attention masks and training goals are different. Architecture family does not mean identical behavior.

- Thinking BERT naturally generates long fluent text like GPT, which is wrong because BERT is mainly designed for encoding and understanding complete input context. It is not optimized for left-to-right text generation.

- Forgetting that GPT cannot look at future tokens during standard generation, which is wrong because causal masking prevents access to later positions. This restriction is what makes next-token prediction valid.

- Saying BERT always outperforms GPT on every language task, which is wrong because task type matters. Understanding tasks often favor encoder models, while generation tasks often favor decoder models.

Practice Questions

- 1 A GPT-style model is given the token sequence: The cat sat on the mat. When predicting the token sat, which earlier tokens may it attend to under causal masking, and how many tokens are available if sat is the third token?

- 2 In masked language modeling, the sentence is: Water boils at [MASK] degrees Celsius. If a BERT-style encoder uses both left and right context, explain which surrounding words help predict the masked token and identify the most likely number.

- 3 A company needs one model for sentiment classification of product reviews and another for writing product descriptions from short prompts. Which model type, encoder-only or decoder-only, is the better fit for each task, and why?