Multi-Head Attention Visualized

Parallel Attention Heads, Projection, and Why Multiple Heads Help

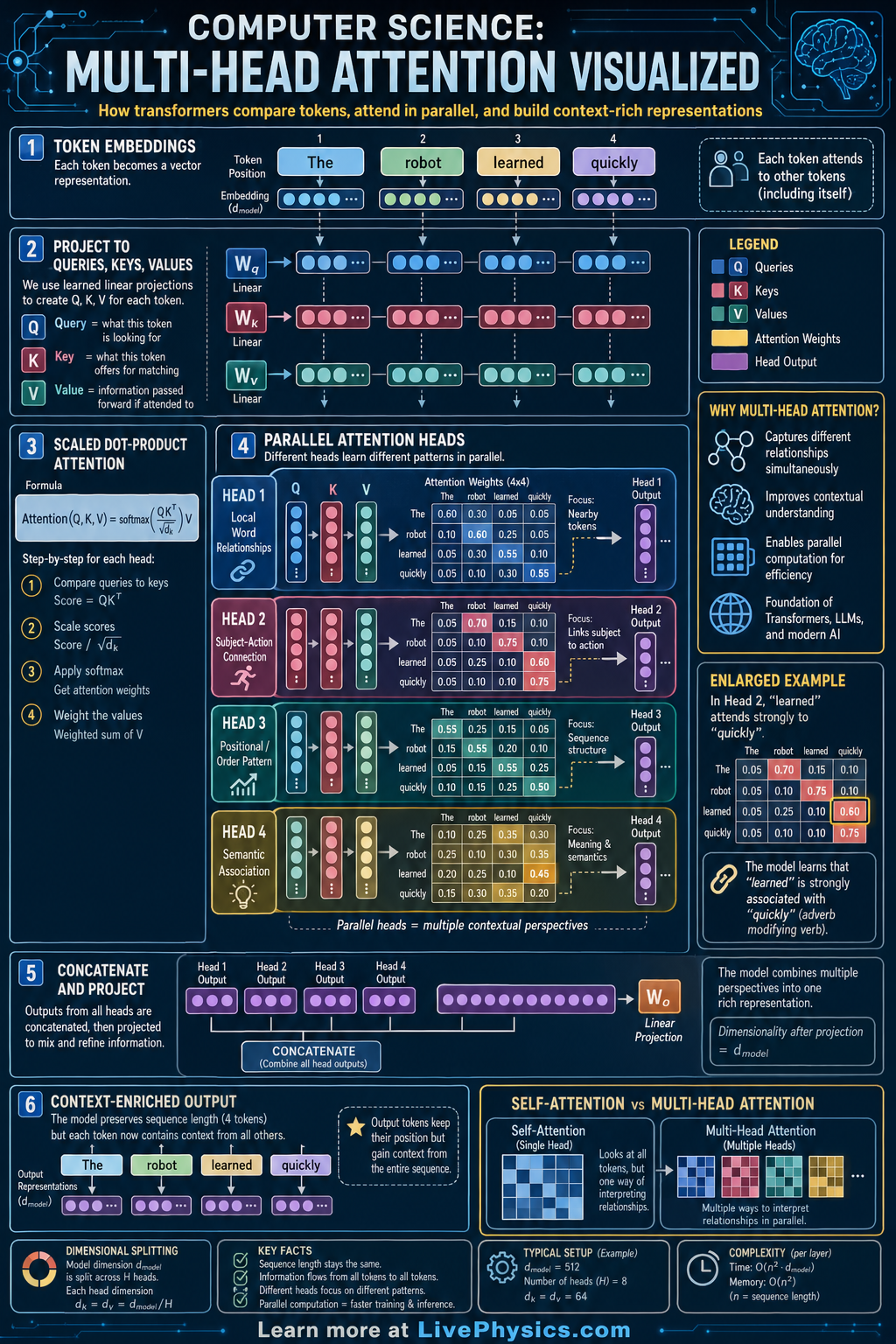

Multi-head attention is a core idea behind transformer models used in language processing, vision, and many other AI systems. It helps a model decide which parts of an input sequence matter most when processing each token. Instead of looking at the sequence in only one way, the model uses several attention heads in parallel. This makes it better at capturing different relationships such as nearby context, long-range dependencies, and grammatical structure.

The mechanism starts by projecting each token embedding into three vectors, a query, a key, and a value, using learned weight matrices. Each head computes attention scores with score = QK^T / sqrt(d_k), where d_k is the dimension of the key vectors, converts each query's scores to weights with softmax, and uses those weights to take a weighted sum of the value vectors. The outputs from all heads are concatenated and passed through another learned projection. Because different heads can focus on different patterns, the model builds a richer representation than a single attention operation alone.
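
The single-head computation described above can be sketched in plain Python. This is a minimal illustration rather than a reference implementation: the function names `softmax` and `scaled_dot_product_attention` and the toy matrices are assumptions made for the example, and real models use tensor libraries instead of nested lists.

```python
import math

def softmax(scores):
    """Turn a list of scores into weights that sum to 1."""
    m = max(scores)  # subtracting the max avoids overflow in exp
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def scaled_dot_product_attention(Q, K, V):
    """Q and K are lists of d_k-dimensional vectors; V has one value vector per key."""
    d_k = len(K[0])
    outputs = []
    for q in Q:
        # score = q . k / sqrt(d_k) for every key
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in K]
        weights = softmax(scores)
        # weighted sum of the value vectors, one output dimension at a time
        out = [sum(w * v[j] for w, v in zip(weights, V)) for j in range(len(V[0]))]
        outputs.append(out)
    return outputs

# Toy inputs (illustrative numbers only, not from a trained model)
Q = [[1.0, 0.0], [0.0, 1.0]]
K = [[1.0, 0.0], [0.0, 1.0]]
V = [[10.0, 0.0], [0.0, 10.0]]
out = scaled_dot_product_attention(Q, K, V)
```

Each query here matches one key more strongly than the other, so its output leans toward the value vector paired with that key while still mixing in some of the other.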

Key Facts

- Each attention head uses learned projections: Q = XW_Q, K = XW_K, V = XW_V

- Scaled dot-product attention is Attention(Q, K, V) = softmax(QK^T / sqrt(d_k))V

- The factor 1 / sqrt(d_k) keeps large dot products from saturating softmax, where nearly all weight lands on one token and gradients become very small

- Multi-head attention is MultiHead(Q, K, V) = Concat(head_1, ..., head_h)W_O

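- For head i, head_i = Attention(QW_i^Q, KW_i^K, VW_i^V), where W_i^Q, W_i^K, and W_i^V are that head's own projection matrices; in self-attention, the Q, K, and V on the right-hand side are all the input X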

- Attention weights for one query sum to 1 after softmax, so they act like a distribution over tokens
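
The formulas above can be combined into a small self-attention sketch. Everything here is an assumption made for illustration: the helper names (`matmul`, `attention`, `multi_head`, `random_matrix`), the sizes (3 tokens, model width 4, 2 heads), and the random weights, which stand in for parameters that would normally be learned.

```python
import math
import random

def matmul(A, B):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

def softmax(row):
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def attention(Q, K, V):
    """softmax(QK^T / sqrt(d_k)) V, returning the output and the weights."""
    d_k = len(K[0])
    K_T = [list(col) for col in zip(*K)]
    scores = matmul(Q, K_T)
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, V), weights

def random_matrix(rows, cols, rng):
    return [[rng.uniform(-1, 1) for _ in range(cols)] for _ in range(rows)]

def multi_head(X, heads, W_O):
    """Self-attention: every head receives the same X but has its own projections."""
    head_outputs = []
    for W_Q, W_K, W_V in heads:
        out, _ = attention(matmul(X, W_Q), matmul(X, W_K), matmul(X, W_V))
        head_outputs.append(out)
    # Concat(head_1, ..., head_h): join the head outputs token by token
    concat = [sum((head_out[t] for head_out in head_outputs), []) for t in range(len(X))]
    return matmul(concat, W_O)

rng = random.Random(0)  # fixed seed so the toy example is repeatable
n_tokens, d_model, h = 3, 4, 2
d_k = d_model // h  # a common choice: split the model width across the heads
X = random_matrix(n_tokens, d_model, rng)
heads = [tuple(random_matrix(d_model, d_k, rng) for _ in range(3)) for _ in range(h)]
W_O = random_matrix(h * d_k, d_model, rng)
Y = multi_head(X, heads, W_O)  # one d_model-sized output row per token
```

The output `Y` has the same shape as `X`, which is what lets attention layers be stacked. Setting d_k = d_model / h is a convention assumed here, not a requirement of the formulas.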

Vocabulary

- Token embedding: A numerical vector that represents a token such as a word or subword in a form a model can process.

- Query: A learned vector that represents what information a token is looking for from other tokens.

- Key: A learned vector that represents what kind of information a token offers to other tokens.

- Value: A learned vector containing the information that gets combined using the attention weights.

- Attention head: One parallel attention computation that learns to focus on a particular type of relationship in the input.

Common Mistakes to Avoid

- Thinking each head sees different input tokens, which is wrong because all heads usually receive the same input embeddings and differ by their learned projection matrices.

- Forgetting to divide by sqrt(d_k), which is wrong because unscaled dot products tend to grow in magnitude as d_k increases and can push softmax toward overly sharp, nearly one-hot weights with very small gradients.

- Assuming attention weights are applied to keys, which is wrong because the weights are computed from queries and keys but are used to combine the value vectors.

- Believing multi-head attention is just repeated identical attention, which is wrong because each head has different learned parameters and can capture different patterns.
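
The scaling mistake is easy to see numerically. The sketch below compares softmax weights with and without the 1 / sqrt(d_k) factor; the raw dot products and d_k = 16 are hypothetical numbers chosen for illustration.

```python
import math

def softmax(scores):
    m = max(scores)  # subtracting the max avoids overflow in exp
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

d_k = 16
raw = [12.0, 4.0, 0.0]  # hypothetical raw dot products for one query
scaled = [s / math.sqrt(d_k) for s in raw]  # divide by sqrt(16) = 4

unscaled_weights = softmax(raw)    # almost all weight goes to the first token
scaled_weights = softmax(scaled)   # the other tokens keep a visible share

print([round(w, 3) for w in unscaled_weights])
print([round(w, 3) for w in scaled_weights])
```

Without scaling, the largest score takes essentially all of the weight, so the other tokens contribute almost nothing. With scaling, the ordering is unchanged but the distribution is softer.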

Practice Questions

1. A head has d_k = 64. If the raw dot product q · k = 16 for one query-key pair, what scaled score is sent into softmax?

2. A query attends to three tokens with scaled scores [1, 0, -1]. Using e^1 ≈ 2.72, e^0 = 1.00, and e^-1 ≈ 0.37, compute the softmax attention weights to two decimal places.

3. Explain why using several attention heads can represent sentence structure better than using only one head.