Multimodal AI Explained

Text, Image, Audio - How AI Handles Multiple Data Types

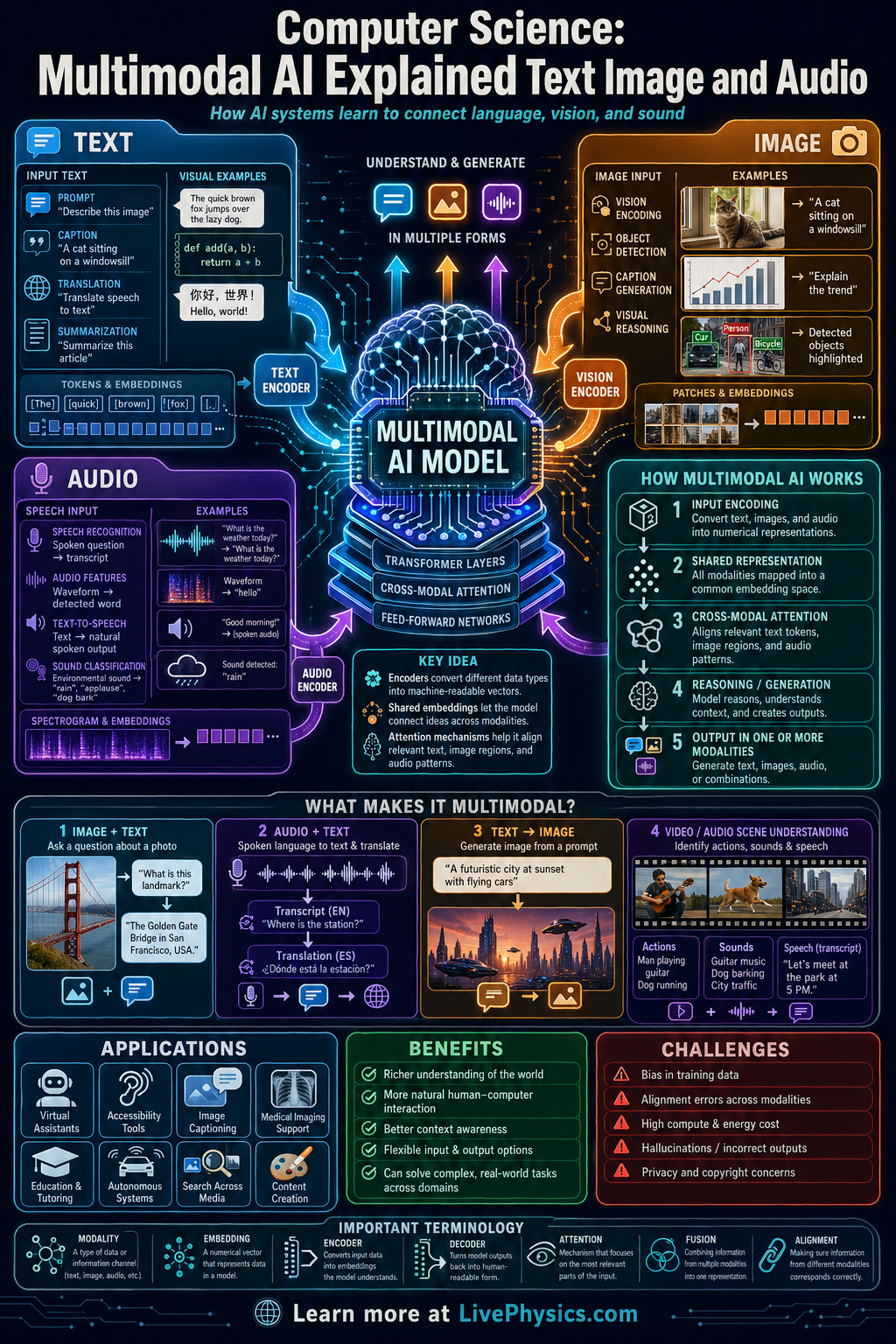

Multimodal AI is a type of artificial intelligence that can work with more than one kind of data, such as text, images, and audio. Instead of treating each format separately, it learns patterns that connect them. This matters because real-world information is naturally mixed across words, pictures, and sounds. Systems that combine these sources can often understand tasks more accurately and respond more usefully.

A multimodal model usually converts each input type into numerical representations called embeddings, then compares or combines them inside a shared model. For example, it can match a caption to a photo, answer questions about an image, or generate speech from text. Training often uses very large datasets containing paired examples like images with labels or audio with transcripts. The result is a system that can translate information across formats and reason using several signals at once.
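Matching a caption to a photo can be sketched as a nearest-neighbour search in a shared embedding space. The sketch below compares vectors with cosine similarity. That is an assumption on our part: it is a common choice, and Euclidean distance is another. The embedding values are made-up toy numbers. They stand in for the output of a real image encoder and text encoder, which would produce vectors with hundreds of dimensions.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical toy embeddings, as if produced by an image encoder and a text
# encoder trained to share one embedding space. The numbers are invented.
image_embedding = [0.9, 0.1, 0.3]
caption_embeddings = {
    "a dog playing in the park": [0.8, 0.2, 0.4],
    "a plate of pasta": [0.1, 0.9, 0.2],
    "a city skyline at night": [0.2, 0.3, 0.9],
}

def best_caption(image_vec, captions):
    """Return the caption whose embedding points most nearly the same way as the image's."""
    return max(captions, key=lambda text: cosine_similarity(image_vec, captions[text]))

for text, vec in caption_embeddings.items():
    print(f"{cosine_similarity(image_embedding, vec):.3f}  {text}")
print("Best match:", best_caption(image_embedding, caption_embeddings))
```

With these toy values the dog caption scores highest. In a real system, training on paired examples is what places matching images and captions close together.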

Key Facts

- Multimodal AI processes two or more data types, such as text + image or text + audio.

- A model often maps inputs into vectors called embeddings, where closeness can be measured with Euclidean distance d = sqrt(sum((x_i - y_i)^2)); a smaller d means the two inputs are more similar.

- Neural networks commonly turn raw output scores (logits) z into probabilities with softmax: P(i) = e^(z_i) / sum_j(e^(z_j)).

- Training usually minimizes a loss function that measures how far predictions are from targets, averaged over many examples. One common example is mean squared error: Loss = (1/n) * sum((predicted_i - target_i)^2).

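- Attention helps the model connect parts of different inputs. A common form is scaled dot-product attention, Attention(Q,K,V) = softmax(QK^T / sqrt(d_k))V, where Q, K and V are the query, key and value matrices and d_k is the key dimension.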

- A multimodal system can perform tasks like image captioning, visual question answering, speech recognition, and text-to-image generation.
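The distance, softmax and loss formulas in the list above can be written as small Python functions. This is a minimal sketch in plain Python, not how a deep learning framework implements them. We assume mean squared error for the loss, which is one common choice among several. Cross-entropy is typical for classification. Subtracting the largest logit inside softmax is a standard trick to avoid overflow. It does not change the resulting probabilities.

```python
import math

def euclidean_distance(x, y):
    """d = sqrt(sum((x_i - y_i)^2)); a smaller d means the vectors are closer."""
    return math.sqrt(sum((xi - yi) ** 2 for xi, yi in zip(x, y)))

def softmax(z):
    """P(i) = e^(z_i) / sum_j e^(z_j): turns raw scores into probabilities."""
    m = max(z)  # subtracting the max avoids overflow without changing the result
    exps = [math.exp(zi - m) for zi in z]
    total = sum(exps)
    return [e / total for e in exps]

def mean_squared_error(predicted, target):
    """Average squared difference between predictions and targets."""
    return sum((p - t) ** 2 for p, t in zip(predicted, target)) / len(predicted)

print(euclidean_distance([0, 0], [3, 4]))            # 5.0
print([round(p, 3) for p in softmax([1.0, 2.0, 3.0])])
print(mean_squared_error([2.5, 0.0], [3.0, -0.5]))   # 0.25
```

The softmax outputs always sum to 1, and the largest logit receives the largest probability.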

Vocabulary

- Multimodal AI: An AI system that can process and connect more than one type of data, such as text, images, and audio.

- Embedding: A numerical vector that represents the meaning or features of data in a form a model can compare and learn from.

- Attention: A mechanism that lets a model focus on the most relevant parts of its input when making a prediction.

- Training data: The collection of examples used to teach a model patterns, relationships, and correct outputs.

- Fusion: The process of combining information from different data types into one shared model or decision.

Common Mistakes to Avoid

- Assuming multimodal AI just stores separate text, image, and audio models side by side, which is wrong because useful systems must connect information across formats through shared representations or fusion steps.

- Thinking more input types always guarantee better answers, which is wrong because noisy or mismatched data can confuse the model and reduce accuracy.

- Treating embeddings as ordinary labels, which is wrong because embeddings are numerical feature vectors that capture relationships and distances between examples.

- Ignoring alignment between modalities, which is wrong because text, images, and audio must correspond correctly during training or the model learns false associations.

Practice Questions

1. A dataset has 1200 examples. Of these, 55% contain text and image together, 25% contain text and audio together, and the rest contain all three modalities. How many examples contain all three modalities?

2. A classifier gives logits z = [2, 1, 0]. Using softmax, calculate the approximate probability of the first class to two decimal places.

3. Explain why a model that answers questions about a photograph may perform better when it uses both the image and the question text instead of only one of them.
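Once you have worked questions 1 and 2 by hand, this short script can check your arithmetic. It is a checking aid only. Question 1 uses whole-number percentages so that floating-point rounding cannot shift the count.

```python
import math

# Question 1: whatever remains after the text+image and text+audio groups
# contains all three modalities.
total_examples = 1200
text_image = total_examples * 55 // 100
text_audio = total_examples * 25 // 100
all_three = total_examples - text_image - text_audio
print(all_three)             # 240

# Question 2: softmax over the logits, probability of the first class.
z = [2, 1, 0]
exps = [math.exp(v) for v in z]
p_first = exps[0] / sum(exps)
print(round(p_first, 2))     # 0.67
```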