Positional Encoding Explained

How Transformers Know Word Order Without Recurrence

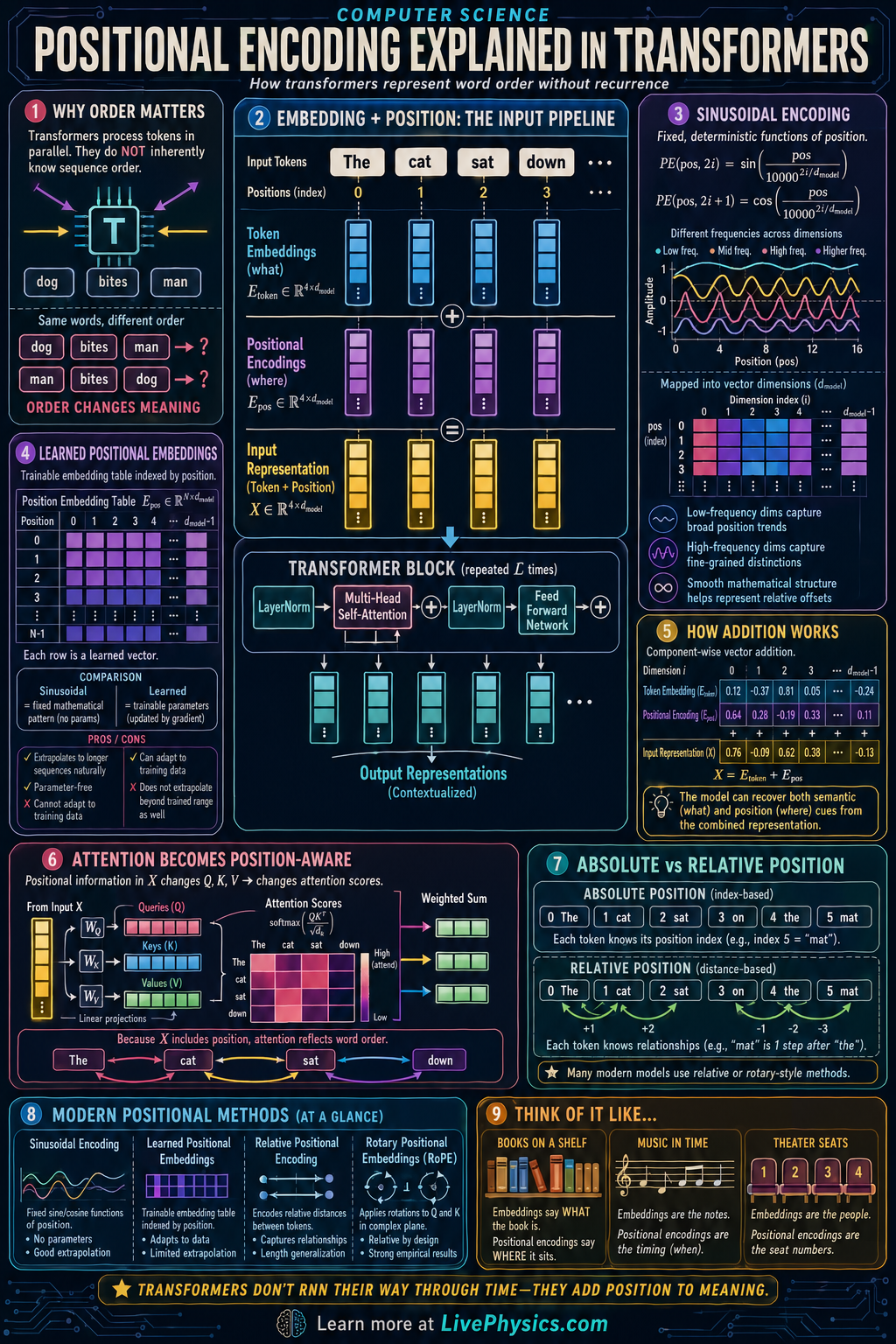

Transformers process all tokens in a sequence at the same time, which makes them fast and powerful for language, vision, and many other tasks. But this parallel processing creates a problem: the model does not automatically know the order of the tokens. Positional encoding solves this by adding information about each token's position in the sequence. Without it, a transformer would struggle to tell the difference between sentences with the same words in different orders.

In practice, each token first becomes a vector called an embedding, and then a positional vector is combined with it before the data enters the attention layers. The combined representation lets attention use both meaning and location when comparing tokens. Some transformers use fixed sinusoidal encodings, while others learn position vectors during training. More advanced models may use relative position methods so the model focuses on distances between tokens instead of only absolute index values.
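As a minimal sketch of the step described above, the snippet below builds fixed sinusoidal encodings with NumPy and adds them to token embeddings. It uses the sine and cosine formulas listed under Key Facts. The function name `sinusoidal_positional_encoding`, the random stand-in embeddings, and the sizes are illustrative assumptions, not part of any particular library.

```python
import numpy as np

def sinusoidal_positional_encoding(seq_len: int, d_model: int) -> np.ndarray:
    """Return a (seq_len, d_model) array of fixed sinusoidal encodings."""
    if d_model % 2 != 0:
        raise ValueError("this sketch assumes an even d_model")
    positions = np.arange(seq_len)[:, np.newaxis]        # shape (seq_len, 1)
    pair_index = np.arange(d_model // 2)[np.newaxis, :]  # shape (1, d_model / 2)
    # One angle per (position, dimension pair): pos / 10000^(2i / d_model)
    angles = positions / np.power(10000.0, 2 * pair_index / d_model)
    pe = np.zeros((seq_len, d_model))
    pe[:, 0::2] = np.sin(angles)  # even dimensions use sine
    pe[:, 1::2] = np.cos(angles)  # odd dimensions use cosine
    return pe

# Hypothetical sizes and random stand-ins for learned token embeddings.
rng = np.random.default_rng(0)
seq_len, d_model = 5, 8
token_embeddings = rng.normal(size=(seq_len, d_model))

pe = sinusoidal_positional_encoding(seq_len, d_model)
model_input = token_embeddings + pe  # x_i = e_i + p_i for every position i
```

Each row of `model_input` carries both the token's embedding and its position signal. Learned position vectors would replace `pe` with a trainable table of the same shape.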

Key Facts

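- Transformers need position information because self attention alone is permutation equivariant: reordering the input tokens only reorders the outputs in the same way, so the computation itself carries no notion of order.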

- Input to the model is often x_i = e_i + p_i, where e_i is the token embedding and p_i is the positional encoding for position i.

- A common fixed encoding uses PE(pos, 2i) = sin(pos / 10000^(2i/d_model)), where pos is the token position and i here indexes a pair of dimensions rather than a position.

- The paired odd dimension is PE(pos, 2i+1) = cos(pos / 10000^(2i/d_model)).

- In self attention, the scores are QK^T / sqrt(d_k), and the layer output is Attention(Q, K, V) = softmax(QK^T / sqrt(d_k))V.

- Relative position methods encode token distance, which can help generalization to longer sequences.
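The order-blindness of self attention can be checked numerically. The sketch below is a single-head attention layer in NumPy with random weights; the helper names, sizes, and the random stand-in positional vectors `p` are assumptions made for illustration. It shuffles the input tokens and compares the outputs with and without position information.

```python
import numpy as np

def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    # Subtract the row maximum for numerical stability.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self attention over rows of x."""
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])  # QK^T / sqrt(d_k)
    return softmax(scores) @ v

rng = np.random.default_rng(0)
seq_len, d_model = 4, 8
x = rng.normal(size=(seq_len, d_model))  # stand-in token embeddings
w_q, w_k, w_v = (rng.normal(size=(d_model, d_model)) for _ in range(3))
perm = np.array([2, 0, 3, 1])            # an arbitrary reordering of the tokens

# Without positions: shuffling the input just shuffles the output rows.
out = self_attention(x, w_q, w_k, w_v)
out_shuffled = self_attention(x[perm], w_q, w_k, w_v)
order_blind = np.allclose(out_shuffled, out[perm])

# With positions added, each token's result depends on where it sits.
p = rng.normal(size=(seq_len, d_model))  # stand-in positional vectors
out_pos = self_attention(x + p, w_q, w_k, w_v)
out_pos_shuffled = self_attention(x[perm] + p, w_q, w_k, w_v)
order_aware = not np.allclose(out_pos_shuffled, out_pos[perm])
```

Here `order_blind` comes out true because each token receives exactly the same output regardless of where it was placed, while `order_aware` comes out true once the positional vectors are added.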

Vocabulary

- Token embedding: A vector that represents the meaning or identity of a token in a continuous numerical space.

- Positional encoding: A vector added to or combined with a token embedding so the model knows where the token appears in the sequence.

- Self attention: A mechanism that lets each token compare itself with other tokens in the same sequence to gather useful context.

- Absolute position: The exact index of a token in the sequence, such as first, second, or third.

- Relative position: How far apart two tokens are, such as one step away or three steps away.
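To make the difference between absolute and relative position concrete, here is a small NumPy sketch. The variable names and the sign convention `relative[i, j] = j - i` are assumptions chosen for illustration; relative position methods differ in how they use such offsets.

```python
import numpy as np

seq_len = 4
absolute = np.arange(seq_len)  # absolute positions: 0, 1, 2, 3

# relative[i, j] = j - i: how many steps token j sits after token i.
relative = absolute[np.newaxis, :] - absolute[:, np.newaxis]

# Shifting the whole sequence changes every absolute index...
shifted = absolute + 10
# ...but leaves every pairwise distance unchanged.
relative_shifted = shifted[np.newaxis, :] - shifted[:, np.newaxis]
distances_unchanged = np.array_equal(relative, relative_shifted)
```

The offsets stay the same wherever the tokens appear in a longer text, which is one intuition for why distance-based methods can cope better with sequence lengths not seen in training.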

Common Mistakes to Avoid

- Assuming token embeddings already contain word order, which is wrong because embeddings mainly represent token identity or meaning and not sequence position by themselves.

- Treating positional encoding as optional in a basic transformer, which is wrong because without position information the model cannot reliably distinguish reordered sequences.

- Thinking sinusoidal encodings are random patterns, which is wrong because the sine and cosine functions create structured position signals: each pair of dimensions oscillates at its own fixed frequency, so the values change smoothly and predictably as the position increases.

- Confusing absolute and relative position methods, which is wrong because absolute methods encode each token's index while relative methods encode distances between tokens.
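One way to see that sinusoidal encodings are structured rather than random is to check two properties numerically. The sketch below uses the sine and cosine formulas from Key Facts; the helper name `sinusoidal_pe` and the chosen positions are illustrative assumptions. It checks that the encoding is deterministic and that the dot product between two position vectors depends only on how far apart the positions are.

```python
import numpy as np

def sinusoidal_pe(pos: int, d_model: int) -> np.ndarray:
    """Sinusoidal encoding vector for a single position (even d_model assumed)."""
    i = np.arange(d_model // 2)
    angles = pos / np.power(10000.0, 2 * i / d_model)
    pe = np.empty(d_model)
    pe[0::2] = np.sin(angles)  # even dimensions
    pe[1::2] = np.cos(angles)  # odd dimensions
    return pe

d_model = 16

# Deterministic: the same position always yields the same vector.
deterministic = np.array_equal(sinusoidal_pe(7, d_model), sinusoidal_pe(7, d_model))

# Positions 3 and 8 are 5 apart, and so are positions 40 and 45.
dot_near_start = sinusoidal_pe(3, d_model) @ sinusoidal_pe(8, d_model)
dot_further_on = sinusoidal_pe(40, d_model) @ sinusoidal_pe(45, d_model)
same_offset_same_dot = np.isclose(dot_near_start, dot_further_on)
```

The second check holds because sin(a)sin(b) + cos(a)cos(b) = cos(a - b) for each dimension pair, so the sum depends only on the offset between the two positions.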

Practice Questions

1. A transformer uses d_model = 8. For position pos = 0, compute PE(0, 0) and PE(0, 1) using PE(pos, 2i) = sin(pos / 10000^(2i/d_model)) and PE(pos, 2i+1) = cos(pos / 10000^(2i/d_model)).

2. A token embedding is e = [1, 0, 2, -1] and its positional encoding is p = [0.1, 0.2, -0.1, 0.3]. Find the combined input vector x = e + p.

3. Explain why a transformer without positional encoding would have trouble telling the difference between the sequences "dog bites man" and "man bites dog" even though it sees the same three tokens.