Embedding and Retrieval Pipeline

End-to-End: Chunking, Embedding, Indexing, and Retrieval

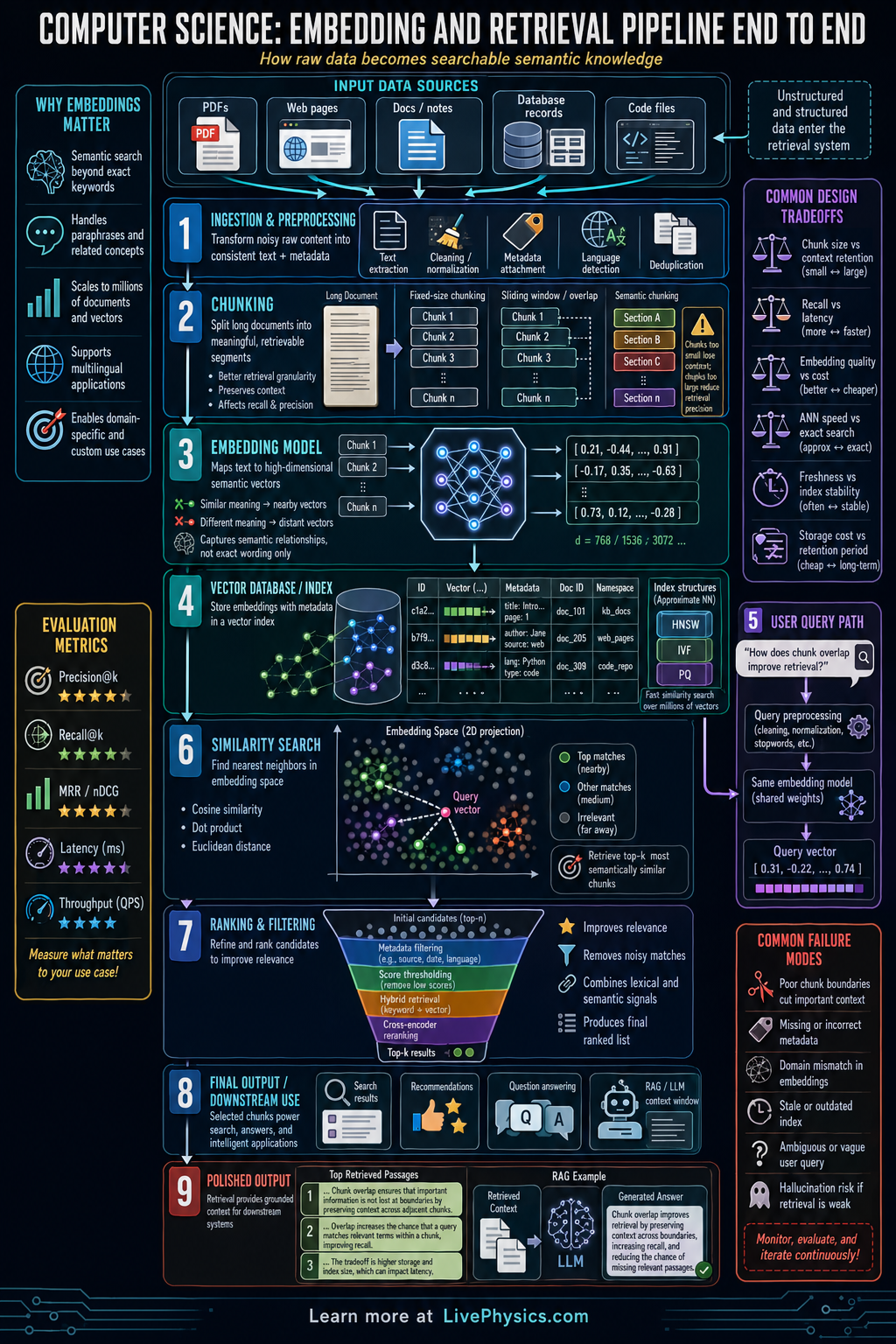

An embedding and retrieval pipeline turns unstructured information into a form that computers can search efficiently. It is used in semantic search, recommendation systems, question answering, and retrieval-augmented generation (RAG). Instead of matching only exact keywords, the system represents meaning with vectors, so related ideas can be found even when the wording differs. This matters because modern applications often need fast, relevant access to large collections of text, images, or other data.

The pipeline usually starts with raw documents, then cleans and splits them into chunks before converting each chunk into an embedding vector. Those vectors are stored in an index that supports similarity search, often using cosine similarity or dot product to compare a query with stored items. At retrieval time, a user query is embedded with the same model, matched against the index, and the top results are ranked or filtered before being returned. Good performance depends on careful choices about chunk size, embedding model, indexing method, and evaluation metrics such as recall and precision.
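The stages described above (chunk, embed, index, retrieve top-k) can be sketched end to end in plain Python. This is a minimal sketch under loud assumptions: `embed` is a hypothetical toy stand-in that hashes words into a fixed number of buckets (a "hashed bag of words"), so it captures shared words rather than meaning; a real pipeline would call a trained embedding model there instead. `chunk_text`, `FlatIndex`, and `DIM` are illustrative names, not part of any library, and the index is a brute-force scan rather than the approximate nearest-neighbor structures that large systems typically use.

```python
import math
import re
import zlib

DIM = 256  # toy embedding size (assumption; real models fix their own dimension)


def chunk_text(text, max_words=20):
    """Split text into consecutive chunks of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text):
    """Hypothetical toy embedding: hashed bag of words, L2-normalized.

    Each lowercase token is hashed (crc32, deterministic) into one of DIM buckets.
    This only captures shared words; a trained model would capture meaning.
    """
    vec = [0.0] * DIM
    for token in re.findall(r"[a-z0-9]+", text.lower()):  # minimal cleaning step
        vec[zlib.crc32(token.encode("utf-8")) % DIM] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec


class FlatIndex:
    """Brute-force vector index: stores every chunk vector and scans all of them per query."""

    def __init__(self):
        self.chunks = []
        self.vectors = []

    def add(self, chunk):
        self.chunks.append(chunk)
        self.vectors.append(embed(chunk))

    def search(self, query, k=3):
        q = embed(query)  # the query goes through the SAME embedding function as the chunks
        # Vectors are unit length, so the dot product equals cosine similarity.
        scores = [sum(a * b for a, b in zip(q, v)) for v in self.vectors]
        ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
        return [(self.chunks[i], scores[i]) for i in ranked[:k]]


# Usage: chunk -> embed -> index -> retrieve top-k.
docs = [
    "Cats are small domesticated carnivores that often sleep during the day.",
    "The stock market fell sharply after the interest rate announcement.",
    "Vector databases store embeddings and support fast similarity search.",
]
index = FlatIndex()
for doc in docs:
    for chunk in chunk_text(doc):
        index.add(chunk)

for chunk, score in index.search("how do vector databases search embeddings", k=2):
    print(f"{score:.3f}  {chunk}")
```

Swapping in a real model would change only `embed`; routing both documents and queries through that one function is a simple way to guarantee they share the same vector space.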

Key Facts

- An embedding maps an item to a vector x in R^n, where items with similar meanings should map to nearby vectors.

- Cosine similarity is cos(theta) = (a · b) / (||a|| ||b||).

- Euclidean distance is d(a,b) = sqrt(sum_i (a_i - b_i)^2).

- Dot product similarity is a · b = sum_i a_i b_i.

- A common retrieval step is top-k search, which returns the k items with highest similarity scores.

- Precision = (relevant items retrieved) / (total items retrieved), and Recall = (relevant items retrieved) / (total relevant items in the collection).
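The similarity formulas and the top-k step above translate directly into code. The following is a minimal pure-Python sketch; the function names `dot`, `cosine`, `euclidean`, and `top_k` are illustrative rather than taken from any particular library, the 3-dimensional vectors are made up instead of real embeddings, and `top_k` ranks by cosine similarity using the standard-library `heapq.nlargest`, which avoids sorting the whole list.

```python
import heapq
import math


def dot(a, b):
    """Dot product: a . b = sum_i a_i * b_i."""
    return sum(x * y for x, y in zip(a, b))


def cosine(a, b):
    """Cosine similarity: (a . b) / (||a|| ||b||). Undefined for zero vectors."""
    norm_a, norm_b = math.sqrt(dot(a, a)), math.sqrt(dot(b, b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot(a, b) / (norm_a * norm_b)


def euclidean(a, b):
    """Euclidean distance: sqrt(sum_i (a_i - b_i)^2). Smaller means closer."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def top_k(query, vectors, k):
    """Return (index, score) pairs for the k vectors most cosine-similar to query."""
    scored = ((i, cosine(query, v)) for i, v in enumerate(vectors))
    return heapq.nlargest(k, scored, key=lambda pair: pair[1])


vectors = [(1, 0, 1), (1, 1, 0), (0, 1, 1), (2, 0, 2)]
query = (1, 0, 1)
print(dot(query, vectors[1]), cosine(query, vectors[1]), euclidean(query, vectors[1]))
print(top_k(query, vectors, k=2))
# Cosine ignores length: (1, 0, 1) and (2, 0, 2) both score about 1.0,
# while the raw dot product favors the longer vector (2 vs. 4).
print(dot(query, vectors[0]), dot(query, vectors[3]))
```

For vectors normalized to unit length, cosine similarity and dot product are equal, so one common choice is to normalize embeddings once at indexing time and then use the cheaper dot product at query time.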

Vocabulary

- Embedding: A numerical vector that represents the meaning or features of data such as text, images, or audio.

- Chunking: The process of splitting a large document into smaller pieces so each piece can be embedded and retrieved effectively.

- Vector index: A data structure that stores embeddings and allows fast similarity search over many vectors.

- Similarity metric: A mathematical rule, such as cosine similarity, used to measure how close two embeddings are.

- Retrieval-augmented generation: A method where a model first retrieves relevant information from a database and then uses it to produce an answer.

Common Mistakes to Avoid

- Using different embedding models for documents and queries. The two sets of vectors may live in incompatible spaces, so similarity scores between them become unreliable.

- Making chunks too large or too small. Oversized chunks dilute the meaning of any single topic, while tiny chunks lose the context needed for accurate retrieval.

- Judging retrieval quality from a single example. A pipeline must be tested across many queries using metrics such as precision and recall.

- Assuming exact keyword overlap is required. Embedding retrieval is designed to capture semantic similarity even when the wording changes.
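Avoiding the one-example trap is easier once evaluation is automated. The following sketch averages precision and recall over the top-k results of a small labeled query set; the helper names, query ids, chunk ids, and relevance labels are all hypothetical placeholders. Per-query scores are macro-averaged (each query counts equally), which is one reasonable choice among several.

```python
def precision_at_k(retrieved, relevant, k):
    """Precision = relevant retrieved / total retrieved, over the top-k results."""
    top = retrieved[:k]
    if not top:
        return 0.0
    return sum(1 for item in top if item in relevant) / len(top)


def recall_at_k(retrieved, relevant, k):
    """Recall = relevant retrieved / total relevant, over the top-k results."""
    if not relevant:
        return 0.0
    return sum(1 for item in retrieved[:k] if item in relevant) / len(relevant)


def evaluate(results, judgments, k):
    """Macro-average precision@k and recall@k over every judged query.

    results:   query id -> ranked list of retrieved chunk ids
    judgments: query id -> set of chunk ids labeled relevant
    """
    queries = list(judgments)
    p = sum(precision_at_k(results.get(q, []), judgments[q], k) for q in queries) / len(queries)
    r = sum(recall_at_k(results.get(q, []), judgments[q], k) for q in queries) / len(queries)
    return p, r


# Hypothetical labeled test set (ids and labels are placeholders).
results = {
    "q1": ["c3", "c7", "c1"],
    "q2": ["c2", "c5", "c9"],
    "q3": ["c4", "c8", "c6"],
}
judgments = {
    "q1": {"c3", "c1"},
    "q2": {"c9", "c10"},
    "q3": {"c4", "c8", "c6"},
}
p, r = evaluate(results, judgments, k=3)
print(f"precision@3 = {p:.3f}, recall@3 = {r:.3f}")  # precision@3 = 0.667, recall@3 = 0.833
```

Looking only at `q3` would suggest a perfect system, while the averages over all three queries show that about a third of the retrieved results are irrelevant.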

Practice Questions

1. A system embeds 1200 document chunks, and a query returns the top 10 results. If 7 of those 10 are relevant, what is the precision of the retrieval?

2. Two embedding vectors are a = (1, 2, 2) and b = (2, 1, 2). Compute their dot product.

3. A student increases chunk size so each chunk contains several unrelated topics. Explain how this can hurt retrieval quality even if the embedding model is strong.