Tokens Explained

How AI Models Read and Count Text

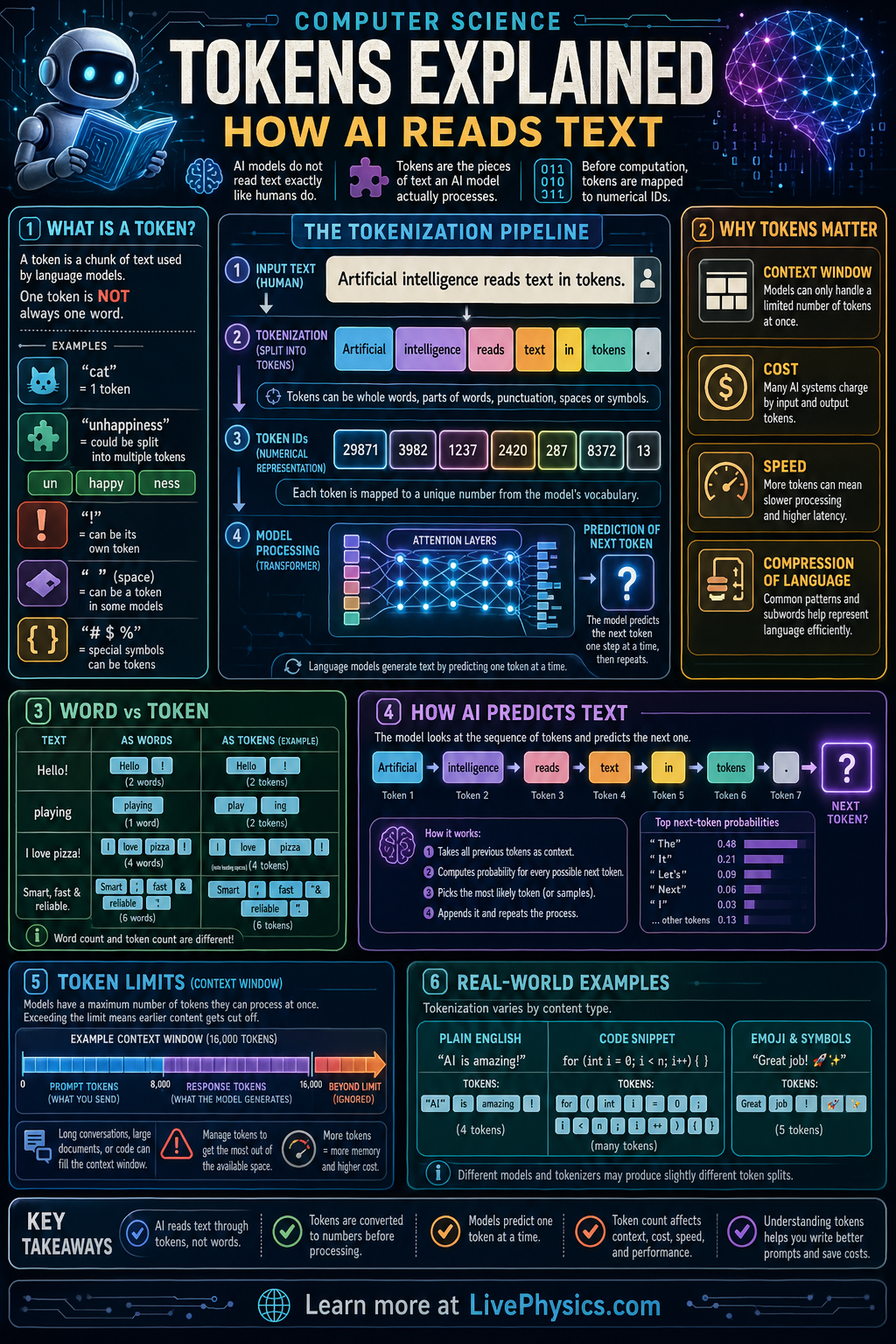

AI language models do not read text as complete ideas the way humans usually do. Instead, they break text into smaller units called tokens, process those units as numbers, and generate their output one token at a time. This matters because the number and type of tokens affect speed, memory use, cost, and how well a model handles different languages or unusual text. Understanding tokens helps students see how raw writing becomes something a computer can analyze mathematically.

A tokenizer first splits a sentence into pieces such as words, word parts, punctuation, or special symbols. Each token is then mapped to an integer ID, and each ID is converted into a numerical vector called an embedding so the model can work with it in a high-dimensional space. The model uses patterns in these vectors, and in their order, to predict the next token or to interpret meaning across a sequence. This is why a sentence that looks short to a human can become many separate computational steps for an AI system.
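
The pipeline described above (split the text, map tokens to IDs, look up embeddings) can be sketched in a few lines of Python. This is a minimal toy under stated assumptions, not how any particular model works: the vocabulary `VOCAB` and its IDs, the word-and-punctuation split, the tiny embedding size `EMBED_DIM`, and the random vectors standing in for learned embeddings are all hypothetical choices made for illustration. Real tokenizers usually split text into subwords and use vocabularies with many thousands of entries.

```python
import random
import re

# Hypothetical toy vocabulary; real vocabularies hold many thousands of tokens.
VOCAB = {"<UNK>": 0, "Tokens": 1, "become": 2, "numbers": 3, "!": 4}

# Assumed tiny embedding dimension d; real models use far larger values.
EMBED_DIM = 4

# One random vector per vocabulary entry stands in for learned embeddings.
# Row i of this table plays the role of the embedding e_i for token ID i.
rng = random.Random(0)
EMBEDDINGS = [[rng.uniform(-1.0, 1.0) for _ in range(EMBED_DIM)] for _ in VOCAB]


def tokenize(text: str) -> list[str]:
    """Split text into runs of word characters and single punctuation marks."""
    return re.findall(r"\w+|[^\w\s]", text)


def to_ids(tokens: list[str]) -> list[int]:
    """Map each token to its integer ID, falling back to <UNK> if unknown."""
    return [VOCAB.get(tok, VOCAB["<UNK>"]) for tok in tokens]


def embed(ids: list[int]) -> list[list[float]]:
    """Look up the embedding vector for each token ID."""
    return [EMBEDDINGS[i] for i in ids]


tokens = tokenize("Tokens become numbers!")
ids = to_ids(tokens)
vectors = embed(ids)
print(tokens)                         # ['Tokens', 'become', 'numbers', '!']
print(ids)                            # [1, 2, 3, 4]
print(len(vectors), len(vectors[0]))  # 4 4
```

A word missing from this toy vocabulary, such as "Cats", would map to ID 0 (`<UNK>`). Subword tokenizers avoid most unknown tokens by breaking such words into smaller pieces they do know.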

Key Facts

- A token is a unit of text used by a model, and one sentence may contain many tokens.

- Tokens can be whole words, subwords, punctuation, spaces, or control symbols such as <EOS> (an end-of-sequence marker).

- Tokenization might look like: "unhappiness!" -> ["un", "happi", "ness", "!"] (the exact split depends on the tokenizer's vocabulary).

- Each token is mapped to an integer ID from the model's vocabulary: token -> ID.

- Embeddings convert IDs into vectors: ID i -> e_i in R^d, where d is the number of dimensions in each embedding vector.

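- Sequence length is usually measured in tokens, and total computation grows with the number of tokens; in standard transformer self-attention, it grows roughly with the square of the sequence length.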
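
The "unhappiness!" split in the list above can be reproduced with greedy longest-match tokenization, which repeatedly takes the longest vocabulary entry that matches at the current position. This is a simplified sketch in the spirit of WordPiece-style longest-match-first splitting; it is not byte-pair encoding (BPE), and the vocabulary `SUBWORDS` is hypothetical, chosen only so the output matches the example. Characters with no matching entry fall back to single-character tokens.

```python
# Hypothetical subword vocabulary chosen so the output matches the example.
SUBWORDS = {"un", "happi", "happy", "ness", "!"}


def greedy_subword_tokenize(text: str, vocab: set[str]) -> list[str]:
    """Split text by repeatedly taking the longest matching vocabulary entry.

    Characters not covered by any entry become single-character tokens, a
    simplified stand-in for the fallback that real tokenizers use.
    """
    tokens = []
    start = 0
    while start < len(text):
        # Try the longest candidate first, then shrink it one character at a time.
        for end in range(len(text), start, -1):
            piece = text[start:end]
            if piece in vocab:
                tokens.append(piece)
                start = end
                break
        else:
            # No vocabulary entry matched: emit one character and move on.
            tokens.append(text[start])
            start += 1
    return tokens


print(greedy_subword_tokenize("unhappiness!", SUBWORDS))  # ['un', 'happi', 'ness', '!']
print(greedy_subword_tokenize("unhappy", SUBWORDS))       # ['un', 'happy']
```

A different vocabulary could split the same word differently, which is one reason token counts for the same text vary between models.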

Vocabulary

- Token: A small piece of text that an AI model processes as one unit.

- Tokenizer: The algorithm that splits text into tokens according to a chosen rule set.

- Token ID: The integer assigned to a specific token in the model's vocabulary.

- Embedding: A numerical vector that represents a token so the model can compare and combine meanings mathematically.

- Context window: The maximum number of tokens a model can consider at one time.
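
The context window limit can be expressed as a simple check. The sketch below assumes, as is common for models that generate text one token at a time, that generated tokens share the same window as the input. The function names and the keep-the-most-recent-tokens truncation policy are hypothetical illustrations; real systems may truncate or reject long inputs in other ways.

```python
def fits_in_context(prompt_tokens: int, max_new_tokens: int, context_window: int) -> bool:
    """Return True if the prompt plus the requested output fits in the window."""
    return prompt_tokens + max_new_tokens <= context_window


def truncate_to_window(token_ids: list[int], context_window: int) -> list[int]:
    """Keep only the most recent token IDs that fit in the window."""
    start = max(0, len(token_ids) - context_window)
    return token_ids[start:]


print(fits_in_context(prompt_tokens=6, max_new_tokens=4, context_window=8))  # False
print(truncate_to_window([10, 11, 12, 13, 14], context_window=3))            # [12, 13, 14]
```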

Common Mistakes to Avoid

- Assuming one word always equals one token, which is wrong because many words are split into smaller parts and punctuation may become separate tokens.

- Ignoring spaces and symbols, which is wrong because some tokenizers treat spaces, punctuation, or special markers as meaningful units.

- Thinking the model stores meaning directly in raw words, which is wrong because it actually works with token IDs and numerical embeddings.

- Counting sentence length in characters instead of tokens, which is wrong because model limits and costs are usually based on token count, not letter count.
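
Several of these mistakes come from treating characters, words, and tokens as interchangeable. The sketch below counts all three for one sentence using a simplified regular-expression pre-tokenizer, loosely modeled on the GPT-2 style of attaching a leading space to the following word. The pattern is an assumption for illustration; a real tokenizer would typically also split some of these pieces into smaller subwords, so its count could be higher.

```python
import re

# Simplified, hypothetical pre-tokenizer pattern: an optional leading space
# plus a run of word characters, an optional leading space plus a run of
# punctuation, or leftover whitespace.
PATTERN = re.compile(r" ?\w+| ?[^\w\s]+|\s+")


def count_units(text: str) -> dict[str, int]:
    """Count characters, whitespace-separated words, and regex tokens."""
    tokens = PATTERN.findall(text)
    return {"characters": len(text), "words": len(text.split()), "tokens": len(tokens)}


sentence = "Tokenizers don't count letters!"
print(PATTERN.findall(sentence))
# ['Tokenizers', ' don', "'", 't', ' count', ' letters', '!']
print(count_units(sentence))
# {'characters': 31, 'words': 4, 'tokens': 7}
```

Here the spaces travel with the words (" count"), and the contraction "don't" becomes three tokens, so neither the word count nor the character count matches the token count.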

Practice Questions

1. A tokenizer splits the text "AI reads text." into the tokens ["AI", "reads", "text", "."]. How many tokens are there, and if each token becomes one ID, how many IDs are produced?

2. A model input contains 120 tokens and the output contains 35 tokens. If the system charges by total tokens processed, how many tokens are billed?

3. Explain why the word "playing" might be split into more than one token and how that can help an AI model handle unfamiliar words.
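
To check the arithmetic in questions 1 and 2, a short script is enough. It assumes, as question 2 states, that input and output tokens are billed the same way; `billed_tokens` is a hypothetical helper, and real pricing schemes often charge input and output tokens at different rates.

```python
def billed_tokens(input_tokens: int, output_tokens: int) -> int:
    """Total tokens processed when input and output are billed together."""
    return input_tokens + output_tokens


# Question 1: each token becomes exactly one ID, so the two counts match.
q1_tokens = ["AI", "reads", "text", "."]
q1_ids = list(range(len(q1_tokens)))  # placeholder IDs, one per token
print(len(q1_tokens), len(q1_ids))  # 4 4

# Question 2: 120 input tokens plus 35 output tokens.
print(billed_tokens(120, 35))  # 155
```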