What Is RAG?

Retrieval-Augmented Generation: Architecture and Benefits

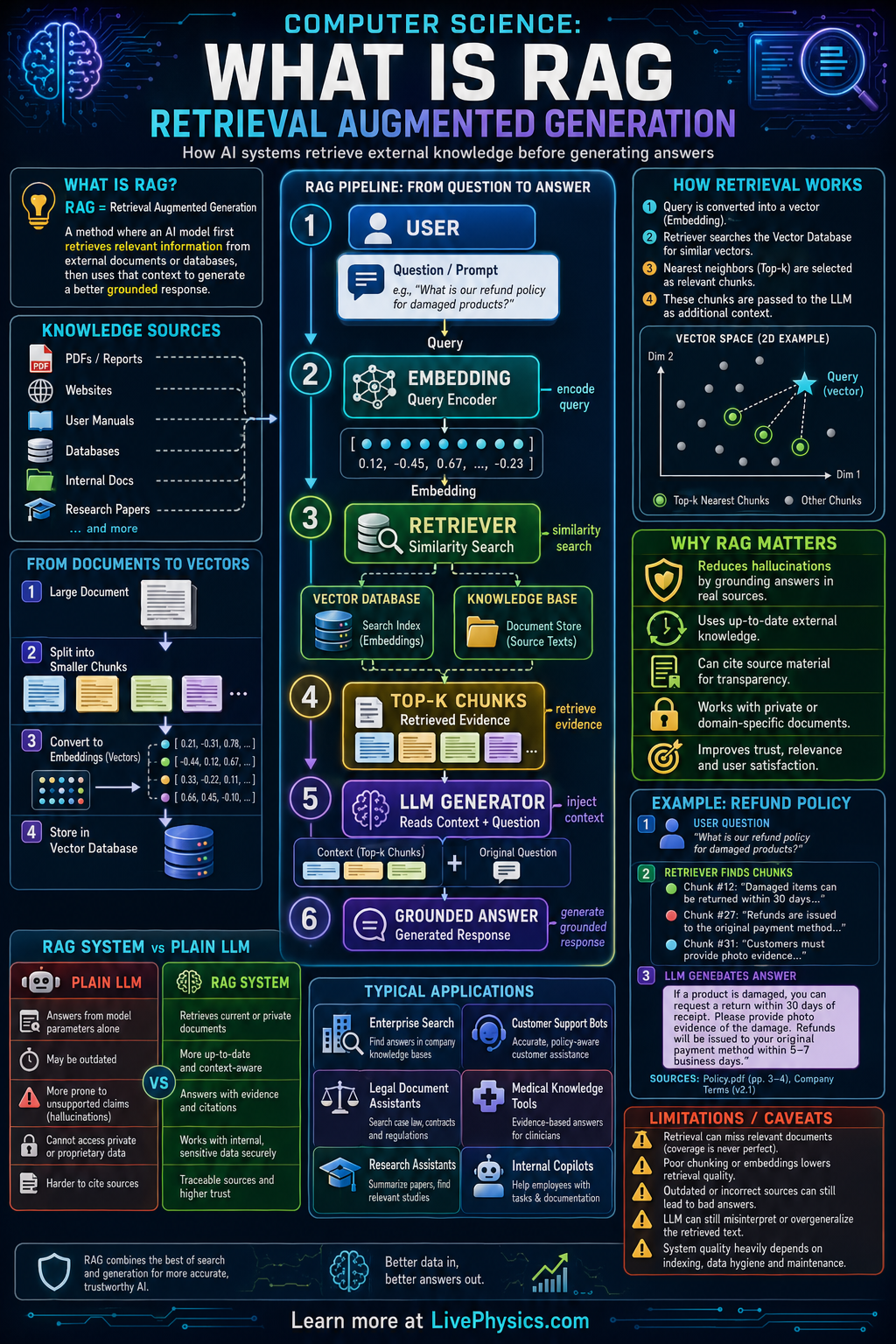

Retrieval-Augmented Generation (RAG) is a method that lets an AI system answer questions using outside information instead of relying only on what the model learned during training. It matters because language models can sound confident even when they are wrong, outdated, or missing key facts. By retrieving relevant documents first, RAG can produce answers that are more accurate, more current, and grounded in evidence. This makes it useful for search, tutoring, customer support, and question answering over private company data.

In a typical RAG pipeline, a user asks a question, the system converts that question into a numerical representation called an embedding, and then searches a database for similar chunks of text. The retrieved passages are added to the model's prompt so the generator can write an answer based on those sources. The quality of the final answer depends on both retrieval and generation, so chunking, embedding quality, ranking, and prompt design all matter. Many systems also return citations so users can inspect where the answer came from.
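The flow described above can be sketched in a few lines of Python. This is a toy illustration, not a production design: the function names (`tokenize`, `retrieve`, `build_prompt`) and the sample chunks are hypothetical, word overlap stands in for real embedding similarity, and the final call to a language model is left out, so the sketch stops at the assembled prompt.

```python
import re

def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, chunks, k=2):
    """Rank chunks by word overlap with the question.

    Assumption: Jaccard overlap is a toy stand-in for embedding similarity.
    """
    q = tokenize(question)
    scored = []
    for idx, chunk in enumerate(chunks):
        words = tokenize(chunk)
        union = q | words
        score = len(q & words) / len(union) if union else 0.0
        scored.append((score, idx))
    # Highest score first; ties broken by original position.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [idx for _, idx in scored[:k]]

def build_prompt(question, chunks, indices):
    """Place the retrieved passages, numbered for citation, ahead of the question."""
    sources = "\n".join(
        f"[{n}] {chunks[i]}" for n, i in enumerate(indices, start=1)
    )
    return (
        "Answer using only the sources below and cite them by number.\n\n"
        f"Sources:\n{sources}\n\n"
        f"Question: {question}\nAnswer:"
    )

# Hypothetical knowledge base, already split into chunks.
chunks = [
    "The library opens at 9 am on weekdays.",
    "Overdue books incur a fine of 10 cents per day.",
    "The cafeteria serves lunch from noon to 2 pm.",
]
question = "What is the fine for overdue books?"
top = retrieve(question, chunks, k=2)
prompt = build_prompt(question, chunks, top)
print(prompt)
```

The numbered `[1]`, `[2]` labels in the prompt are one simple way to let the generator cite its sources, so a reader can check each claim against the passage it came from.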

Key Facts

- RAG combines retrieval + generation: Answer = LLM(question + retrieved context)

- Text is often split into chunks before indexing so the retriever searches smaller, relevant passages.

- Embeddings map text into vectors, and similarity can be measured with cosine similarity = (A · B) / (|A||B|), where A · B is the dot product and |A| is the length (norm) of vector A.

- A vector database stores embeddings and returns the top k most similar chunks for a query.

- Better retrieval often improves answer quality more than simply using a larger language model.

- RAG can reduce hallucinations, but it can still fail if retrieval returns irrelevant, incomplete, or outdated sources.
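The cosine similarity formula and the top k lookup from the list above can be written out directly. The sketch below is a minimal, assumption-laden version: the three-dimensional vectors and chunk IDs are made up for illustration, and the "index" is a plain Python list scanned in full, whereas a real vector database would typically use an approximate nearest-neighbor index.

```python
import math

def cosine_similarity(a, b):
    """Return (A · B) / (|A||B|), or 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def top_k(query_vec, index, k):
    """Return the IDs of the k chunks whose vectors are most similar to the query.

    `index` is a list of (chunk_id, vector) pairs (a hypothetical in-memory store).
    """
    ranked = sorted(
        index,
        key=lambda item: cosine_similarity(query_vec, item[1]),
        reverse=True,
    )
    return [chunk_id for chunk_id, _ in ranked[:k]]

# Made-up embeddings; real ones usually have hundreds of dimensions.
index = [
    ("chunk-a", [1.0, 0.0, 0.0]),
    ("chunk-b", [0.6, 0.8, 0.0]),
    ("chunk-c", [0.0, 0.0, 1.0]),
]
query = [3.0, 4.0, 0.0]

print(top_k(query, index, k=2))
```

Note that `query` points in the same direction as `chunk-b` even though it is five times longer: cosine similarity compares direction only, so vector length does not affect the ranking.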

Vocabulary

- Retrieval-Augmented Generation: A method where an AI model first retrieves relevant information from a data source and then uses it to generate an answer.

- Embedding: A numerical vector that represents the meaning of text so similar meanings are close together in space.

- Vector database: A database designed to store embeddings and quickly find items with similar vectors.

- Chunking: The process of splitting documents into smaller pieces so they can be indexed and retrieved more effectively.

- Hallucination: An incorrect or unsupported statement generated by an AI model as if it were true.

Common Mistakes to Avoid

- Assuming RAG guarantees truth, because retrieved documents can still be wrong, outdated, or only partly relevant. Students should evaluate source quality and not trust the final answer automatically.

- Using chunks that are too large or too small, because oversized chunks dilute relevance while tiny chunks lose context. Good chunk size balances precision with enough surrounding information.

- Confusing retrieval with generation, because the retriever finds candidate evidence while the language model writes the response. A strong generator cannot fully fix poor retrieval.

- Ignoring ranking and top k selection, because returning too few passages can miss key evidence and too many can overload the prompt with noise. Students should tune how many results are passed to the model.
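To experiment with the chunk-size trade-off mentioned above, a simple word-window chunker is enough. This is a sketch under stated assumptions: `chunk_words` is a hypothetical helper, it splits on whitespace rather than on sentences or tokens, and the `overlap` parameter (repeating a few words between neighboring chunks to preserve context) is a common option that this guide does not itself prescribe.

```python
def chunk_words(text, size, overlap=0):
    """Split text into chunks of up to `size` words.

    Consecutive chunks share `overlap` words (assumption: overlap helps keep
    context that would otherwise be cut at a chunk boundary).
    """
    if size <= 0:
        raise ValueError("size must be positive")
    if not 0 <= overlap < size:
        raise ValueError("overlap must satisfy 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last window already reached the end of the text
    return chunks

# A stand-in document of ten placeholder words: w0 w1 ... w9
document = " ".join(f"w{i}" for i in range(10))

for piece in chunk_words(document, size=4):
    print(piece)

for piece in chunk_words(document, size=4, overlap=2):
    print(piece)
```

Trying several values of `size` on the same documents, and checking which setting retrieves the passages that actually answer test questions, is one practical way to tune chunking alongside the number of results passed to the model.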

Practice Questions

1. A RAG system retrieves 8 document chunks for each query, and each chunk contains 120 words. How many words of retrieved context are added to the prompt before generation?

2. A document collection has 240 articles, and each article is split into 5 chunks for indexing. If the system stores one embedding per chunk, how many embeddings are in the vector database?

3. Explain why a RAG system might still produce a bad answer even if the language model itself is powerful. Identify one failure in retrieval and one failure in generation.