Matrix Transformations in 2D

Rotation, Scaling, Reflection, and Shear

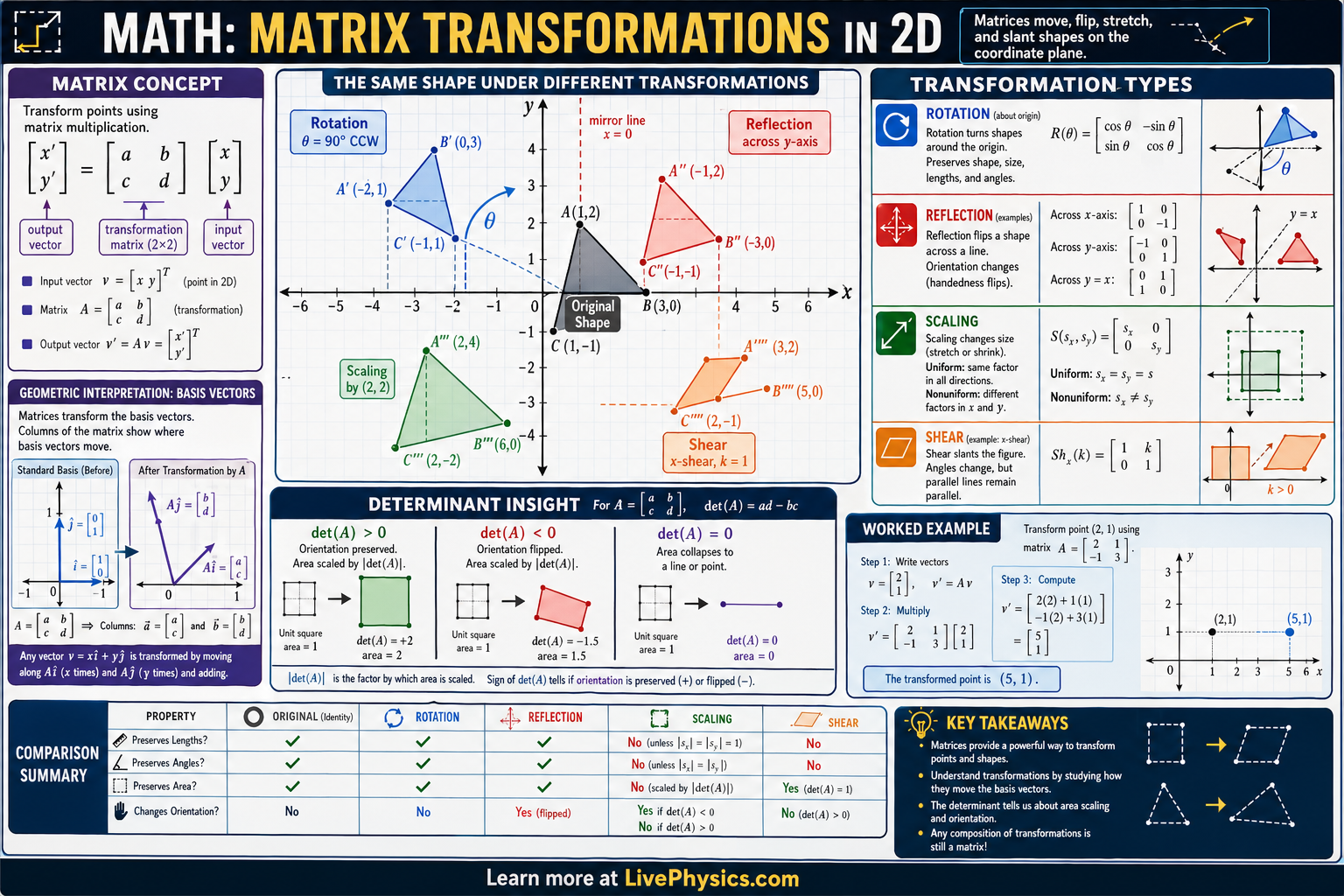

A 2D linear transformation is a function that maps every point in the plane to a new point in a way that preserves lines and the origin. Such a transformation can always be represented as a matrix multiplication: T(v) = Av, where A is a 2×2 matrix. The columns of A tell you where the standard basis vectors e1 = (1, 0) and e2 = (0, 1) land after the transformation, a key insight for visualizing what a matrix does.
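The claim about columns can be checked numerically. The sketch below is only an illustration: the matrix `A` is a made-up example and `apply` is a hypothetical helper, not part of any library.

```python
def apply(matrix, point):
    """Multiply a 2x2 matrix (list of rows) by a 2D point (x, y)."""
    (a, b), (c, d) = matrix
    x, y = point
    return (a * x + b * y, c * x + d * y)

# Hypothetical example matrix, chosen only for illustration.
A = [[2, 1],
     [0, 3]]

e1, e2 = (1, 0), (0, 1)

col1 = apply(A, e1)   # image of e1 = first column of A
col2 = apply(A, e2)   # image of e2 = second column of A
print(col1)  # (2, 0)
print(col2)  # (1, 3)

# Linearity: the image of (x, y) is x * A(e1) + y * A(e2).
x, y = 4, -1
combined = (x * col1[0] + y * col2[0], x * col1[1] + y * col2[1])
print(apply(A, (x, y)) == combined)  # True
```

Once you know where the two basis vectors land, every other point follows by linear combination, which is why reading the columns is enough to picture the whole transformation.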

Common 2D transformations include rotation (by angle θ), scaling (stretching or shrinking along the axes), reflection (flipping over a line), and shear (tilting one direction while keeping the other fixed). Composing transformations means multiplying their matrices, and order matters because matrix multiplication is not commutative. Linear transformations form the geometric foundation of computer graphics, physics simulations, and machine learning (each neural network layer applies an affine transformation, that is, a linear transformation plus a translation, typically followed by a nonlinear activation).
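To see why order matters, here is a minimal sketch that composes a 90° counterclockwise rotation with a horizontal stretch in both orders. The helper names `matmul` and `apply` and the specific matrices are assumptions made for this example.

```python
import math

def matmul(p, q):
    """Product of two 2x2 matrices given as lists of rows."""
    return [[sum(p[i][k] * q[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def apply(m, v):
    """Multiply a 2x2 matrix by a 2D point."""
    return (m[0][0] * v[0] + m[0][1] * v[1],
            m[1][0] * v[0] + m[1][1] * v[1])

theta = math.pi / 2
R = [[math.cos(theta), -math.sin(theta)],
     [math.sin(theta),  math.cos(theta)]]   # rotate 90 degrees counterclockwise
S = [[2, 0],
     [0, 1]]                                # stretch x by a factor of 2

p = (1, 0)
scale_then_rotate = apply(matmul(R, S), p)  # S acts first, then R
rotate_then_scale = apply(matmul(S, R), p)  # R acts first, then S

# Rounded to hide floating-point noise in cos(pi/2).
print([round(c, 6) for c in scale_then_rotate])  # [0.0, 2.0]
print([round(c, 6) for c in rotate_then_scale])  # [0.0, 1.0]
```

The same point ends up in two different places, so the products RS and SR are different matrices.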

Key Facts

- Rotation by θ: [[cos θ, −sin θ], [sin θ, cos θ]] (counterclockwise)

- Scaling: [[sx, 0], [0, sy]] (scales x by sx, y by sy)

- Reflection over x-axis: [[1, 0], [0, −1]]; over y-axis: [[−1, 0], [0, 1]]

- Shear (horizontal): [[1, k], [0, 1]] shifts x by k times y

- Determinant = area scaling factor; det = 0 means the transformation collapses the plane to a lower dimension (a line or a point)

- Composition: applying A then B is the product BA (right-most matrix acts first)
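The matrices listed above can be written as small constructor functions and their determinants checked directly. This is a sketch under assumed names (`rotation`, `scaling`, `shear_x`, `REFLECT_X`, `det` are all hypothetical helpers), using only the standard library.

```python
import math

def rotation(theta):
    """Counterclockwise rotation by theta radians."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s], [s, c]]

def scaling(sx, sy):
    """Scale x by sx and y by sy."""
    return [[sx, 0], [0, sy]]

def shear_x(k):
    """Horizontal shear: (x, y) -> (x + k*y, y)."""
    return [[1, k], [0, 1]]

REFLECT_X = [[1, 0], [0, -1]]   # reflection over the x-axis

def det(m):
    """Determinant of a 2x2 matrix: ad - bc."""
    return m[0][0] * m[1][1] - m[0][1] * m[1][0]

print(round(det(rotation(math.radians(30))), 6))  # 1.0  (area preserved)
print(det(scaling(2, 3)))                         # 6    (area times 6)
print(det(shear_x(5)))                            # 1    (area preserved)
print(det(REFLECT_X))                             # -1   (area preserved, orientation flipped)
print(det([[1, 2], [2, 4]]))                      # 0    (plane collapses onto a line)
```

The absolute value of the determinant is the area factor; a negative sign indicates that orientation is reversed, as with a reflection.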

Vocabulary

- Linear transformation: A mapping between vector spaces that preserves vector addition and scalar multiplication; representable by a matrix.

- Determinant: A scalar value of a square matrix that represents the scaling factor of the area (in 2D) under the transformation; a zero determinant means the transformation is singular.

- Basis vector: A member of a linearly independent set of vectors whose linear combinations can represent any vector in the space; the standard basis in 2D is (1,0) and (0,1).

- Composition: Applying two transformations in sequence; mathematically represented by matrix multiplication (right-to-left order).

- Eigenvector: A non-zero vector that is only scaled (not rotated) by a linear transformation; the scaling factor is the corresponding eigenvalue.
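As a quick illustration of the eigenvector definition, the sketch below tests whether a vector stays on its own line under a transformation. The matrix `A` and the helpers `apply` and `is_only_scaled` are assumptions chosen for this example.

```python
def apply(m, v):
    """Multiply a 2x2 matrix (list of rows) by a 2D vector."""
    return (m[0][0] * v[0] + m[0][1] * v[1],
            m[1][0] * v[0] + m[1][1] * v[1])

def is_only_scaled(m, v):
    """True if m*v is parallel to v (2D cross product is zero)."""
    w = apply(m, v)
    return v[0] * w[1] - v[1] * w[0] == 0

# Hypothetical example matrix with integer entries, so exact comparison is safe.
A = [[2, 1],
     [1, 2]]

print(apply(A, (1, 1)))           # (3, 3):  (1, 1) is scaled by 3
print(apply(A, (1, -1)))          # (1, -1): (1, -1) is scaled by 1
print(is_only_scaled(A, (1, 1)))  # True
print(is_only_scaled(A, (1, 0)))  # False: (1, 0) maps to (2, 1), which changes direction
```

For this particular matrix, (1, 1) and (1, −1) are eigenvectors with eigenvalues 3 and 1, while (1, 0) is not an eigenvector.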

Common Mistakes to Avoid

- Reversing matrix multiplication order when composing transformations. Applying rotation then scaling is NOT the same as scaling then rotating. Matrix multiplication is not commutative: AB ≠ BA in general.

- Forgetting that linear transformations always fix the origin. Translations (moving the origin to a new point) are NOT linear transformations - they require homogeneous coordinates or affine notation.

- Confusing the rotation angle direction. The standard rotation matrix [[cos θ, −sin θ], [sin θ, cos θ]] rotates counterclockwise. Clockwise rotation uses −θ (or equivalently swaps the signs of the sin terms).

- Thinking a determinant of zero just means no rotation. Det = 0 means the matrix is singular: it collapses the plane onto a line or point, destroying information. The transformation cannot be undone (no inverse).
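The point about translations can be made concrete with homogeneous coordinates: a 2D point (x, y) is written as (x, y, 1), and a translation becomes a 3×3 matrix. The following is a minimal sketch; `translation` and `apply_h` are hypothetical helper names.

```python
def translation(tx, ty):
    """3x3 homogeneous matrix that shifts points by (tx, ty)."""
    return [[1, 0, tx],
            [0, 1, ty],
            [0, 0, 1]]

def apply_h(m, point):
    """Apply a 3x3 homogeneous matrix to a 2D point (x, y)."""
    x, y = point
    v = (x, y, 1)  # lift the point to homogeneous coordinates
    out = [sum(m[i][k] * v[k] for k in range(3)) for i in range(3)]
    return (out[0], out[1])  # drop the trailing 1

T = translation(5, -2)
print(apply_h(T, (0, 0)))  # (5, -2): the origin moves, so this is not linear in 2D
print(apply_h(T, (3, 1)))  # (8, -1)
```

No 2×2 matrix can reproduce the first result, because any 2×2 matrix sends (0, 0) to (0, 0).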

Practice Questions

1. Write the 2×2 matrix that rotates points 90° counterclockwise. Apply it to the point (3, 1) and verify geometrically.

2. A transformation first scales x by 2 and y by 3, then rotates 45° counterclockwise. Write the single matrix representing the composition (in correct order).

3. If a transformation matrix has determinant = 4, what happens to the area of a triangle that is transformed by it?