AI Hallucinations Explained

Why AI Makes Things Up and How to Reduce Errors

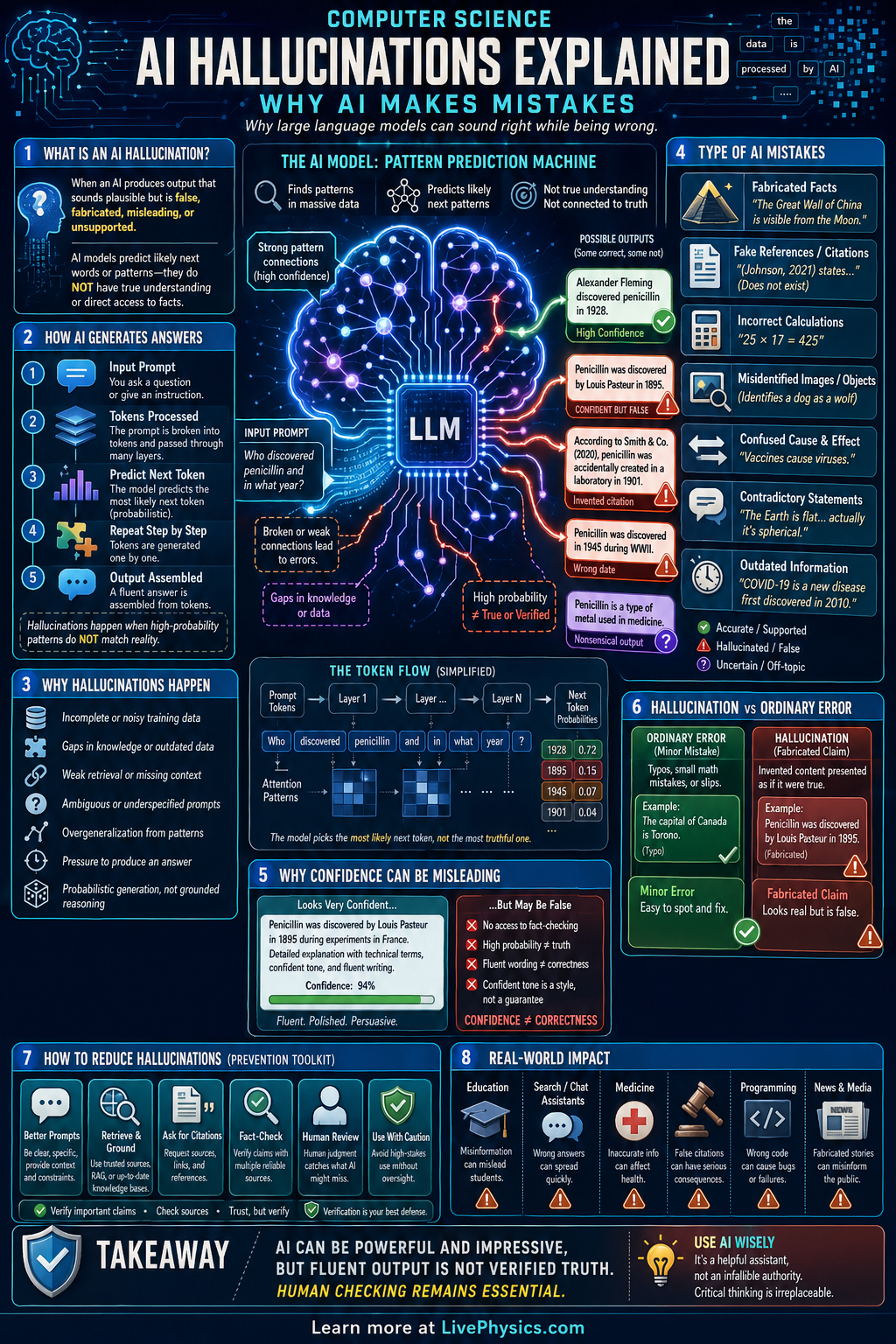

AI hallucinations happen when an artificial intelligence system produces information that sounds confident and fluent but is actually false, unsupported, or made up. This matters because people often trust polished language, especially when the system gives detailed answers quickly. Hallucinations can appear in chatbots, image generators, search assistants, and coding tools. Understanding why they happen helps users check results instead of accepting every answer as correct.

These mistakes come from how many AI systems are built and trained. A language model predicts likely next words from patterns in huge datasets, but it does not verify facts against reality unless extra tools are added. If the prompt is unclear, the training data is incomplete, or the model is pushed beyond what it knows, it may fill the gaps with plausible-sounding guesses. Better prompting, retrieval systems, fact checking, and human oversight can reduce hallucinations, but they do not remove the risk completely.
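
To make the next-word idea concrete, here is a minimal sketch using a toy bigram counter. This is an assumption chosen for illustration and is far simpler than a real language model. The tiny corpus and the function names are hypothetical. The point is that the predictor returns likely continuations from patterns alone, with no step that checks whether the continuation is true.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus; a real model trains on vastly more text.
CORPUS = [
    "the capital of france is paris",
    "the capital of italy is rome",
    "the capital of spain is madrid",
]

def train_bigram_counts(sentences):
    """Count how often each word follows each previous word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts

def next_word_probabilities(counts, prev_word):
    """Estimate P(next word | previous word) from the counts."""
    followers = counts.get(prev_word, Counter())
    total = sum(followers.values())
    if total == 0:
        return {}
    return {word: n / total for word, n in followers.items()}

counts = train_bigram_counts(CORPUS)
# After the word "is", the toy model considers paris, rome, and madrid equally likely.
probs = next_word_probabilities(counts, "is")
print(probs)
```

Because this toy predictor only looks at the previous word, a prompt ending in "the capital of germany is" would still be completed with one of the three cities it has seen. The result reads fluently but is wrong, which is the same gap-filling behavior described above on a very small scale.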

Key Facts

- A language model often works by estimating P(next token | previous tokens).

- Hallucination means the output is fluent but not grounded in reliable evidence or source data.

- More confidence in wording does not mean higher accuracy.

- If an AI system retrieves outside information, answer quality depends on both retrieval quality and model reasoning.

- Error rate = (number of incorrect outputs) / (total outputs tested).

- Reducing hallucinations often combines better prompts, better data, retrieval tools, and human review.
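
The error rate formula in the list above can be sketched in a few lines of Python. The function name and the sample numbers (14 incorrect outputs out of 200 tested) are hypothetical and chosen only for illustration.

```python
def error_rate(incorrect: int, total: int) -> float:
    """Return (number of incorrect outputs) / (total outputs tested)."""
    if total <= 0:
        raise ValueError("total must be a positive number of tested outputs")
    if not 0 <= incorrect <= total:
        raise ValueError("incorrect must be between 0 and total")
    return incorrect / total

# Hypothetical test run: 14 incorrect answers out of 200 tested.
rate = error_rate(14, 200)
print(f"{rate:.2f} = {rate:.0%}")  # prints "0.07 = 7%"
```

An error rate measured this way only describes the questions that were tested, so a different question set may give a different rate.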

Vocabulary

- Hallucination: An AI output that contains false, invented, or unsupported information presented as if it were correct.

- Language model: A computer system trained to predict and generate text by learning patterns from large amounts of language data.

- Training data: The collection of text, images, or other examples used to teach an AI system during development.

- Grounding: Linking an AI response to trusted sources, evidence, or real input data so the answer is better supported.

- Retrieval augmented generation: A method where an AI system first fetches relevant documents or facts and then uses them to help generate an answer.
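
The fetch-then-generate idea behind retrieval augmented generation can be sketched as follows. This is a minimal illustration under stated assumptions: the two documents, the stop-word list, and the keyword-overlap scoring are all hypothetical stand-ins for a real search index, and no actual model is called. The sketch only builds the grounded prompt that would be passed to a model.

```python
# Hypothetical trusted documents; a real system would query a search index or database.
DOCUMENTS = {
    "doc1": "Mount Everest is the highest mountain above sea level.",
    "doc2": "The Pacific Ocean is the largest ocean on Earth.",
}

# Small, assumed stop-word list so common words do not count as matches.
STOP_WORDS = {"the", "is", "a", "an", "of", "on", "what", "which", "who"}

def tokenize(text):
    """Lowercase the text, strip basic punctuation, and drop stop words."""
    words = {word.strip(".,?!").lower() for word in text.split()}
    return words - STOP_WORDS

def retrieve(question, documents, min_overlap=1):
    """Return (doc_id, text) pairs that share keywords with the question, best first."""
    question_words = tokenize(question)
    scored = []
    for doc_id, text in documents.items():
        overlap = len(question_words & tokenize(text))
        if overlap >= min_overlap:
            scored.append((overlap, doc_id, text))
    scored.sort(reverse=True)
    return [(doc_id, text) for _, doc_id, text in scored]

def build_grounded_prompt(question, documents):
    """Build a prompt that includes retrieved sources, or None if nothing was found."""
    hits = retrieve(question, documents)
    if not hits:
        return None  # Nothing to ground the answer on; better to decline than guess.
    sources = "\n".join(f"[{doc_id}] {text}" for doc_id, text in hits)
    return (
        "Answer using only the sources below and cite the source id.\n"
        f"{sources}\n"
        f"Question: {question}"
    )

print(build_grounded_prompt("What is the largest ocean?", DOCUMENTS))
print(build_grounded_prompt("Who wrote Hamlet?", DOCUMENTS))
```

In this sketch the second question retrieves nothing, so the function returns `None` instead of a prompt. Whether a real system declines, asks for clarification, or answers anyway is a design choice, and weak retrieval can still pass irrelevant text to the model.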

Common Mistakes to Avoid

- Assuming a detailed answer must be correct, because fluent wording can hide unsupported claims. Always check important facts against reliable sources.

- Using vague prompts, because missing context forces the model to guess what you want. Add constraints, examples, and the exact task to reduce errors.

- Treating AI as a source instead of a tool, because the model may summarize patterns without proving them. Ask for citations and verify whether those sources are real and relevant.

- Ignoring domain limits, because a model may perform well on common topics but fail in specialized, recent, or ambiguous cases. Use expert review for medicine, law, finance, and safety-critical tasks.
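
One part of the advice above, checking whether cited sources are real, can be partly automated. The sketch below assumes a hypothetical setup in which answers cite sources with bracketed ids such as `[doc2]` and a set of trusted ids is known in advance. Both the id format and the names are assumptions for illustration.

```python
import re

# Hypothetical set of source ids that actually exist in a trusted collection.
TRUSTED_SOURCE_IDS = {"doc1", "doc2", "doc3"}

def check_citations(answer, trusted_ids):
    """Find bracketed source ids in an answer and flag any that are not trusted."""
    cited = set(re.findall(r"\[([A-Za-z0-9_-]+)\]", answer))
    return {
        "cited": cited,
        "unknown": cited - trusted_ids,
        "has_citations": bool(cited),
    }

answer = "The Pacific is the largest ocean [doc2], covering a third of Earth [doc9]."
report = check_citations(answer, TRUSTED_SOURCE_IDS)
print(report["unknown"])  # prints {'doc9'}
```

A check like this only shows that a cited id exists. It does not show that the source supports the claim, so a person still needs to read the source for anything important.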

Practice Questions

1. A student tests an AI on 50 factual questions and finds 8 incorrect answers. Calculate the error rate as a decimal and as a percent.

2. An AI assistant gives 120 answers in one day. If 15% are hallucinations, how many answers are hallucinations and how many are not?

3. Explain why adding access to a trusted database can reduce hallucinations but still not guarantee perfect accuracy.