GPT vs Claude vs Gemini

Model Comparison - Strengths, Context, and Use Cases

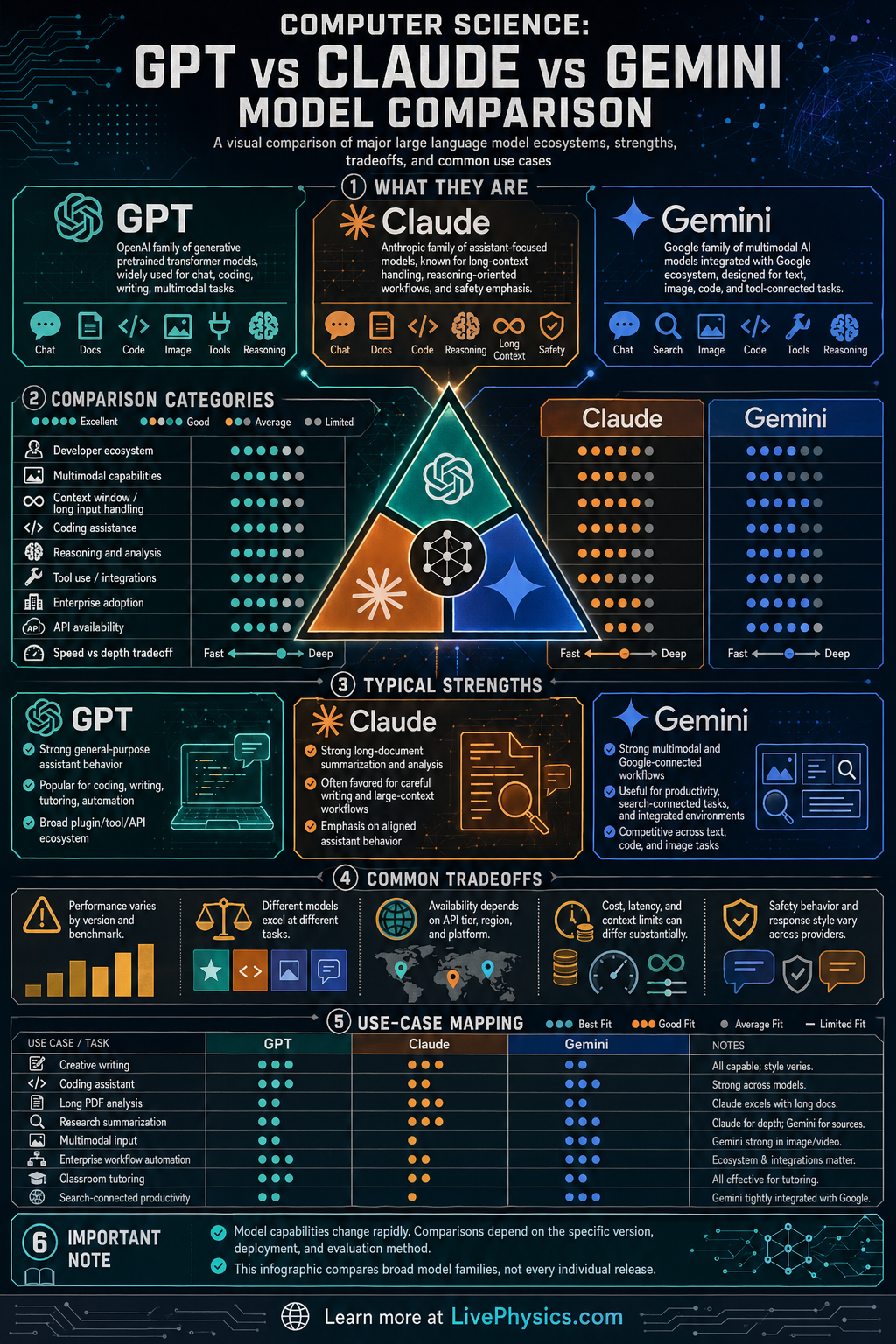

Large language models are a major branch of modern artificial intelligence, and GPT, Claude, and Gemini are three of the best-known model families. They are all designed to process natural language, generate text, answer questions, and assist with tasks like coding, summarization, and analysis. Comparing them helps students understand how AI systems can be similar in core design yet differ in training goals, product integration, and practical strengths. This matters because model choice affects accuracy, speed, safety behavior, and how well a tool fits a real task.

All three families are based on transformer-style neural networks that learn statistical patterns from massive datasets. In simple terms, they predict likely next tokens, but their final behavior also depends on fine-tuning, reinforcement learning, tool use, and system-level safety rules. GPT is strongly associated with broad conversational performance and developer ecosystems, Claude is often noted for long-context reasoning and a careful response style, and Gemini is closely tied to multimodal processing and integration with Google's products. A good comparison focuses on architectural ideas, context handling, multimodal ability, ecosystem support, and the tradeoff between capability, cost, and reliability.
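To make "predict likely next tokens" concrete, here is a toy Python sketch. The candidate tokens and their raw scores (logits) are invented for illustration; a real model computes logits over a vocabulary of tens of thousands of tokens using its trained transformer layers. The sketch turns logits into probabilities with softmax, picks the most likely token greedily, and scores a short sequence with the chain rule of probability.

```python
import math

def softmax(logits):
    """Convert raw scores (logits) into probabilities that sum to 1."""
    m = max(logits.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(score - m) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}

# Hypothetical logits for candidate next tokens after the prompt
# "The cat sat on the". These values are invented for illustration.
logits = {"mat": 4.0, "sofa": 2.5, "roof": 2.0, "banana": -1.0}

probs = softmax(logits)
next_token = max(probs, key=probs.get)  # greedy decoding: take the most likely token

def sequence_log_prob(step_probs):
    """Chain rule: log P(t_1..t_n) = sum over i of log P(t_i | t_1..t_{i-1})."""
    return sum(math.log(p) for p in step_probs)

# Hypothetical conditional probabilities for each step of a 3-token continuation.
log_p = sequence_log_prob([0.5, 0.8, 0.9])

print(next_token, {tok: round(p, 3) for tok, p in probs.items()})
print("P(sequence) =", round(math.exp(log_p), 3))
```

Working in log-probabilities and summing is a common design choice because multiplying many small probabilities directly can underflow to zero. Real systems also often sample from the distribution instead of always taking the top token.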

Key Facts

- All three model families are large language models built on transformer-based sequence processing.

- A language model estimates token probabilities, written as $P(\text{token}_t \mid \text{token}_1, \text{token}_2, \ldots, \text{token}_{t-1})$.

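- Self-attention compares tokens using $\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\left(\frac{QK^\top}{\sqrt{d_k}}\right)V$, where $Q$, $K$, and $V$ are the query, key, and value matrices and $d_k$ is the dimension of the key vectors.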

- Model performance depends on pretraining data, fine-tuning, alignment methods, and tool integration, not just parameter count.

- Multimodal models can process more than text, such as text and images, and sometimes audio or video inputs.

- Useful comparison categories include context window, latency, benchmark scores, API features, safety behavior, and cost per token.
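The self-attention formula can be computed by hand for a tiny example. This is a minimal pure-Python sketch of scaled dot-product attention using lists instead of a numerical library. The `Q`, `K`, and `V` values are invented; in a real transformer they are produced by learned linear projections of token embeddings, and many attention heads run in parallel.

```python
import math

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]

def transpose(m):
    """Swap rows and columns."""
    return [list(row) for row in zip(*m)]

def softmax_row(row):
    """Softmax over one row of scores, shifted by the max for stability."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    s = sum(exps)
    return [e / s for e in exps]

def attention(Q, K, V):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = len(K[0])
    scores = matmul(Q, transpose(K))            # how strongly each query matches each key
    scaled = [[x / math.sqrt(d_k) for x in row] for row in scores]
    weights = [softmax_row(row) for row in scaled]  # each row sums to 1
    return matmul(weights, V), weights          # weighted average of value vectors

# Toy example: 3 tokens with d_k = 2. Values are invented for illustration.
Q = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
K = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
V = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]

output, weights = attention(Q, K, V)
for row in weights:
    print([round(w, 3) for w in row])
```

Because each row of `weights` sums to 1, every output row is a weighted average of the rows of `V`. Dividing by `sqrt(d_k)` keeps the scores from growing too large as the vector dimension increases, which would otherwise make the softmax very peaked.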

Vocabulary

- Large language model: An AI system trained on huge amounts of text to predict and generate language.

- Transformer: A neural network architecture that uses attention to model relationships between tokens in a sequence.

- Token: A unit of text, such as part of a word, a whole word, or a punctuation mark, that a model processes.

- Context window: The maximum amount of text or other data, measured in tokens, that the model can consider at one time, typically covering both the prompt and the response it generates.

- Multimodal: Describes a model that can work with multiple data types such as text, images, audio, or video.
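Tokens and the context window can be illustrated with a deliberately simple tokenizer. This sketch splits text into words and punctuation with a regular expression; it is an assumption made for illustration only. Production models use learned subword tokenizers, so their token counts will differ. The `context_window` values used below are hypothetical.

```python
import re

def toy_tokenize(text):
    """Split text into runs of word characters and single punctuation marks.

    A simplification: real LLMs use subword tokenizers, so actual
    token counts will not match this function's output.
    """
    return re.findall(r"\w+|[^\w\s]", text)

def fits_in_context(text, context_window):
    """Return True if the toy token count is within the context window."""
    return len(toy_tokenize(text)) <= context_window

text = "Transformers use attention, don't they?"
tokens = toy_tokenize(text)
print(tokens, len(tokens))
print(fits_in_context(text, 9), fits_in_context(text, 8))
```

Notice that "don't" becomes three pieces, which shows why a token can be part of a word rather than a whole word.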

Common Mistakes to Avoid

- Assuming the biggest model is always the best, because real performance also depends on fine-tuning, tools, safety settings, and the specific task being tested.

- Treating benchmark scores as the whole story, because benchmarks may not reflect real classroom, coding, or business use where reliability and speed matter.

- Confusing context window with memory, because a large context window means the model can read more input at once, not that it permanently remembers past conversations.

- Thinking all three models work the same way in products, because API access, multimodal features, pricing, and integration with external tools can differ substantially.
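The difference between a context window and memory can be seen in how an application might assemble a request. This is a hypothetical sketch, not any vendor's actual behavior: it keeps only the most recent messages that fit a token budget, and it counts one token per whitespace-separated word as a rough stand-in for a real tokenizer. Anything that does not fit is never sent, so the model cannot use it.

```python
def count_tokens(message):
    """Rough stand-in for a real tokenizer: one token per whitespace-separated word."""
    return len(message.split())

def build_prompt(history, context_window):
    """Keep the most recent messages whose combined token count fits the window.

    Older messages that do not fit are simply not sent, so the model
    never sees them on this request.
    """
    kept = []
    used = 0
    for message in reversed(history):  # walk from newest to oldest
        cost = count_tokens(message)
        if used + cost > context_window:
            break
        kept.append(message)
        used += cost
    return list(reversed(kept))  # restore chronological order

history = [
    "My name is Ada and I like chess.",  # 8 words
    "Recommend a chess opening.",         # 4 words
    "Explain it in two sentences.",       # 5 words
]
prompt = build_prompt(history, context_window=10)
print(prompt)
```

With a hypothetical 10-token budget, the first message (containing the name "Ada") is dropped, so a model receiving this prompt would have no way to know the user's name.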

Practice Questions

1. A model processes 1200 input tokens and generates 300 output tokens. If the API price is 0.006 per 1000 output tokens, what is the total cost of the request?

2. A benchmark gives GPT a score of 88, Claude a score of 84, and Gemini a score of 92. What is the average score, and how many points above the average is Gemini?

3. A student needs a model for analyzing a long document, checking reasoning carefully, and comparing several sections at once. Which comparison feature should matter most, and why?
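The arithmetic in the first two questions can be checked with a short Python sketch. Question 1 states only an output-token price, so this sketch assumes input tokens cost nothing for that question and exposes the input price as an optional parameter (`input_price_per_1k`, a name chosen for illustration); commercial APIs commonly bill input tokens too. The currency is left unstated, as in the question.

```python
def request_cost(input_tokens, output_tokens, output_price_per_1k, input_price_per_1k=0.0):
    """Cost of one API request when prices are quoted per 1000 tokens.

    input_price_per_1k defaults to 0 only because Question 1 gives no input price.
    """
    return (input_tokens / 1000) * input_price_per_1k + (output_tokens / 1000) * output_price_per_1k

# Question 1: 300 output tokens at 0.006 per 1000 output tokens.
q1 = request_cost(1200, 300, output_price_per_1k=0.006)

# Question 2: average benchmark score and Gemini's distance above it.
scores = {"GPT": 88, "Claude": 84, "Gemini": 92}
average = sum(scores.values()) / len(scores)
gemini_gap = scores["Gemini"] - average

print(f"Q1 cost: {q1:.4f}")
print(f"Q2 average: {average}, Gemini above average by {gemini_gap}")
```

If an input price were given, passing it as `input_price_per_1k` would add the 1200 input tokens to the total.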