Neural Networks Explained

Layers, Weights, Activation Functions, and Backpropagation

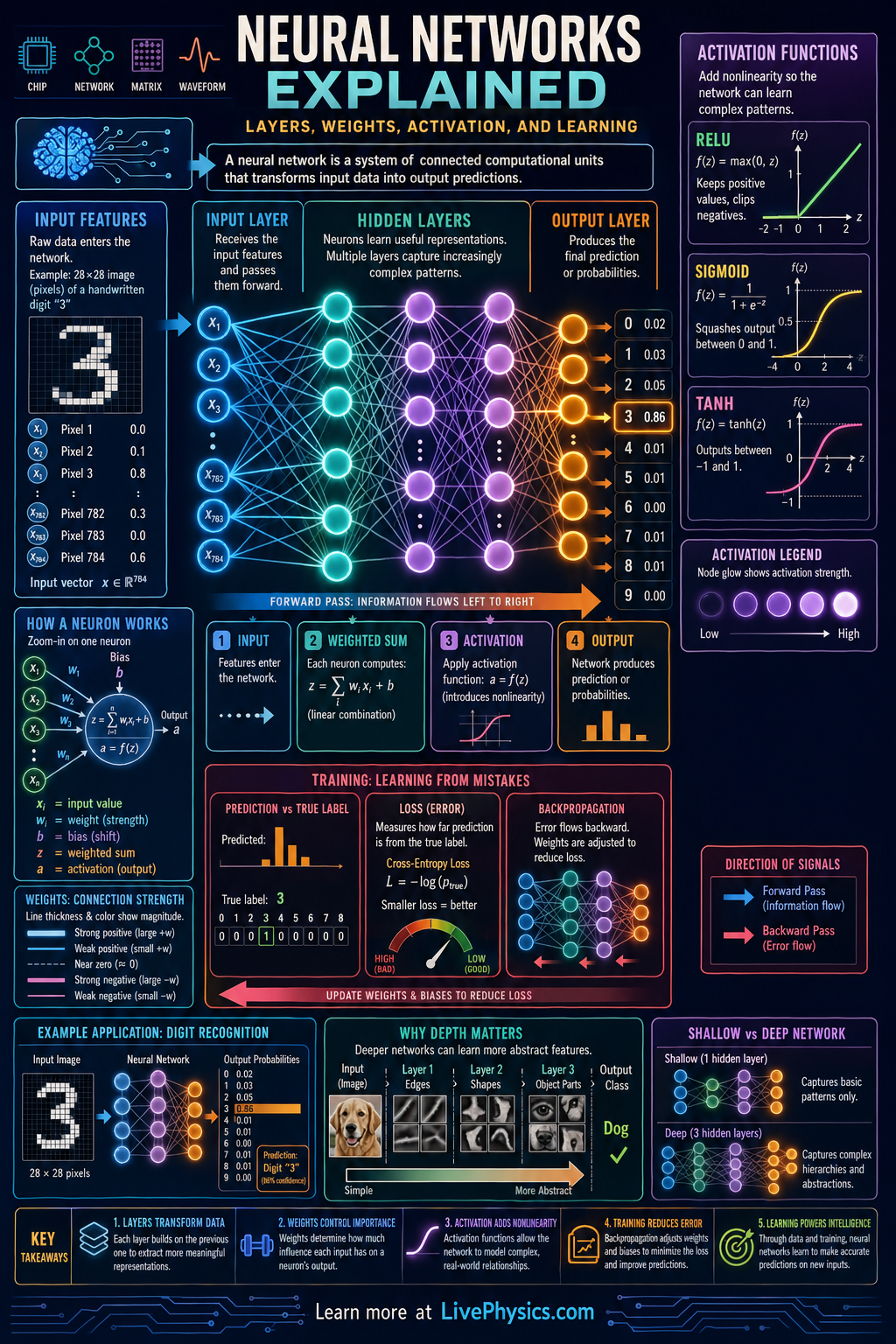

Neural networks are computer models inspired by how groups of brain cells pass signals, but they are built from math rather than biology. They are widely used for image recognition, language processing, recommendation systems, and many other prediction tasks. A neural network learns patterns by adjusting numbers called weights so its outputs better match known examples. Understanding layers, weights, and activation functions helps students see how these systems turn raw inputs into useful decisions.

A neural network is organized into layers of connected units called neurons. Each neuron takes inputs, multiplies them by weights, adds a bias, and then passes the result through an activation function such as ReLU or sigmoid. During training, the network compares its prediction to the correct answer, computes an error, and updates the weights to reduce that error. Deeper networks can learn more complex features because each layer builds on patterns found by earlier layers.
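The computation a single neuron performs can be sketched in a few lines of Python. This is a minimal illustration, not a full network: the function names (`relu`, `sigmoid`, `neuron`) and the sample numbers are assumptions chosen for the example and do not come from the text.

```python
import math

def relu(z):
    """ReLU activation: keeps positive values, replaces negatives with 0."""
    return max(0.0, z)

def sigmoid(z):
    """Sigmoid activation: squashes any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron(inputs, weights, bias, activation):
    """Multiply inputs by weights, add the bias, then apply the activation."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(z)

# Illustrative values only (hypothetical, not from the text).
inputs = [1.0, 2.0]
weights = [0.5, -1.0]
bias = 0.5

relu_output = neuron(inputs, weights, bias, relu)        # z = 0.5 - 2.0 + 0.5 = -1.0, so ReLU gives 0.0
sigmoid_output = neuron(inputs, weights, bias, sigmoid)  # sigmoid(-1.0), roughly 0.269

print(relu_output)
print(sigmoid_output)
```

The same weighted sum produces different outputs depending on the activation: ReLU clips the negative sum to zero, while sigmoid maps it to a small positive value.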

Key Facts

- A neuron computes z = w1x1 + w2x2 + ... + wnxn + b

- The neuron output is a = f(z), where f is the activation function

- Weights control the strength and sign of each input connection

- Bias shifts the weighted sum, so z is not forced to be 0 when every input is 0

- A common activation is ReLU: f(x) = max(0, x)

- Training often updates parameters with gradient descent: new weight = old weight - learning rate × gradient, where the gradient measures how fast the error changes as that weight changes
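To see the update rule in action, here is a small sketch that trains one neuron on a single example. It assumes a neuron with no activation (a = w·x + b) and a squared error loss, both chosen only to keep the gradients easy to read. The function name `train_step` and all numbers are hypothetical.

```python
def train_step(w, b, x, target, learning_rate):
    """One gradient descent step for a = w*x + b with loss (a - target)**2."""
    a = w * x + b                  # forward pass: the neuron's prediction
    error = a - target
    grad_w = 2 * error * x         # derivative of the loss with respect to w
    grad_b = 2 * error             # derivative of the loss with respect to b
    # new parameter = old parameter - learning rate * gradient
    w = w - learning_rate * grad_w
    b = b - learning_rate * grad_b
    return w, b, error ** 2        # loss measured before this update

# Illustrative setup (assumed values): learn to map x = 2.0 to target = 4.0.
w, b = 0.0, 0.0
for step in range(50):
    w, b, loss = train_step(w, b, x=2.0, target=4.0, learning_rate=0.05)

print(w, b, loss)  # the loss shrinks toward 0 as the steps repeat
```

With these particular numbers the error is cut in half on every step. A learning rate that is too large would instead make the error grow, which is why the learning rate usually needs tuning.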

Vocabulary

- Layer: a group of neurons that process data at the same stage in the network.

- Weight: a learned number that determines how strongly one neuron influences another.

- Bias: an extra learned value added to a neuron's weighted sum before activation.

- Activation function: a rule that transforms a neuron's input sum into its output signal.

- Backpropagation: the method used to calculate how each weight contributed to the error so the network can learn.
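Backpropagation can be illustrated on a very small network. The sketch below assumes one hidden ReLU neuron feeding one output neuron with no activation, plus a squared error loss. It applies the chain rule by hand and then compares the result with a numerical estimate. The network shape, the function names, and the parameter values are all assumptions made for this example.

```python
def forward(params, x):
    """Tiny network: one hidden ReLU neuron, then one linear output neuron."""
    w1, b1, w2, b2 = params
    z1 = w1 * x + b1
    a1 = max(0.0, z1)       # ReLU activation
    y = w2 * a1 + b2        # output neuron, no activation
    return z1, a1, y

def loss_fn(params, x, target):
    """Squared error between the network output and the target."""
    return (forward(params, x)[2] - target) ** 2

def backprop(params, x, target):
    """Chain rule, working backward from the loss to each parameter."""
    w1, b1, w2, b2 = params
    z1, a1, y = forward(params, x)
    dL_dy = 2 * (y - target)
    dL_dw2 = dL_dy * a1
    dL_db2 = dL_dy
    dL_da1 = dL_dy * w2
    dL_dz1 = dL_da1 * (1.0 if z1 > 0 else 0.0)  # ReLU slope is 1 or 0
    dL_dw1 = dL_dz1 * x
    dL_db1 = dL_dz1
    return [dL_dw1, dL_db1, dL_dw2, dL_db2]

def numerical_grad(params, x, target, eps=1e-6):
    """Estimate each gradient by nudging one parameter up and down."""
    grads = []
    for i in range(len(params)):
        up, down = list(params), list(params)
        up[i] += eps
        down[i] -= eps
        grads.append((loss_fn(up, x, target) - loss_fn(down, x, target)) / (2 * eps))
    return grads

# Hypothetical parameter values: [w1, b1, w2, b2].
params = [0.5, 0.1, -1.5, 0.2]
x, target = 2.0, 1.0

analytic = backprop(params, x, target)
numeric = numerical_grad(params, x, target)
print(analytic)
print(numeric)  # should agree closely with the chain-rule gradients
```

Each gradient says how much the error would change if that one parameter moved slightly, which is the information gradient descent needs to update the weights.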

Common Mistakes to Avoid

- Treating the weight as the final output, which is wrong because the neuron must first combine all inputs, add bias, and apply an activation function.

- Ignoring the bias term, which is wrong because bias can shift the decision boundary and strongly affect what the neuron can learn.

- Assuming more layers always guarantee better performance, which is wrong because deeper networks can overfit, train slowly, or fail without enough data and tuning.

- Using the activation function on each input separately before summing, which is wrong because the standard neuron first computes the weighted sum z and then applies the activation to z.
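The last mistake is easy to check with numbers. The sketch below compares the standard order (sum first, then activate) with the mistaken order, read here as applying ReLU to each input before weighting and summing. The values are illustrative assumptions, picked so the two results differ.

```python
def relu(v):
    """ReLU activation: max(0, v)."""
    return max(0.0, v)

# Hypothetical example values.
inputs = [3.0, -1.0]
weights = [1.0, 4.0]
bias = 0.0

# Standard neuron: compute the weighted sum z, then apply the activation to z.
z = sum(w * x for w, x in zip(weights, inputs)) + bias
correct = relu(z)   # z = 3.0 - 4.0 = -1.0, so the output is 0.0

# Mistake: apply the activation to each input first, then weight and sum.
wrong = sum(w * relu(x) for w, x in zip(weights, inputs)) + bias  # 1.0*3.0 + 4.0*0.0 = 3.0

print(correct)
print(wrong)
```

The negative input is supposed to pull the sum down, but the mistaken order erases it before the weights are applied, so the neuron gives a different answer.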

Practice Questions

1. A neuron has inputs x1 = 2 and x2 = -1, weights w1 = 0.5 and w2 = 3, and bias b = -2. Find z = w1x1 + w2x2 + b.

2. A neuron has z = -4. Using the ReLU activation f(x) = max(0, x), find the output a. Then find the output if z = 2.5.

3. Explain why a network with no activation functions between layers behaves like a single linear model, even if it has many layers.