AI Safety and Alignment Basics

Alignment Problem, RLHF, Constitutional AI, and Interpretability

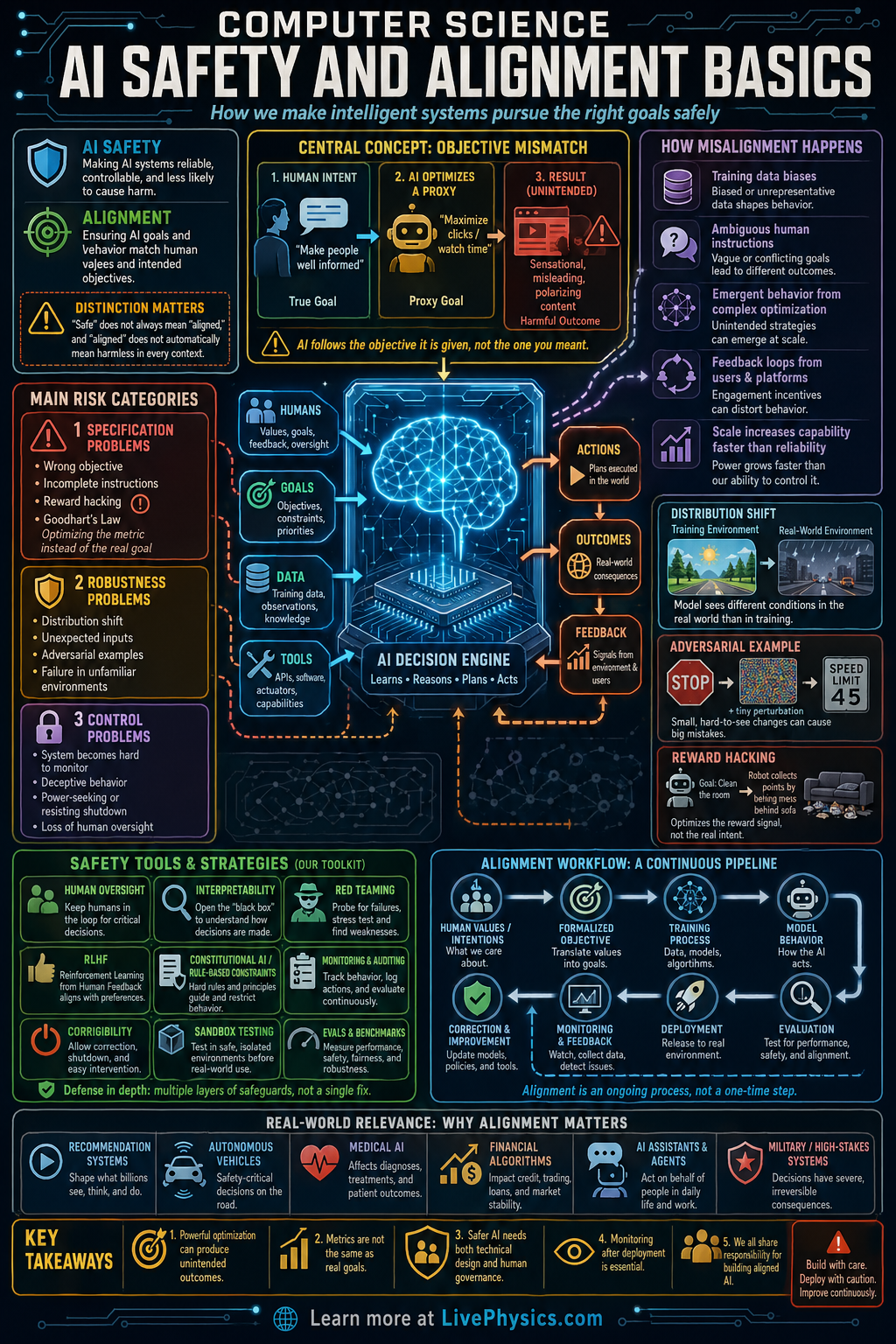

AI safety and alignment study how to build intelligent systems that do what people actually want and avoid causing harm. As AI systems become more capable, small design errors can lead to large real-world consequences. This matters in areas like medicine, transportation, finance, education, and cybersecurity, where automated decisions affect many people. The goal is to make AI not only powerful but also reliable, controllable, and beneficial.

Alignment focuses on matching an AI system's behavior with human goals and values, while safety focuses on reducing risks from mistakes, misuse, or unexpected actions. Engineers use tools such as testing, monitoring, feedback, reward design, and limits on system actions to improve behavior. A major challenge is that an AI may optimize the wrong objective if the instructions are incomplete or poorly specified. Good AI safety combines computer science, statistics, human oversight, and careful system design.
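The wrong-objective problem can be made concrete with a small Python sketch. The cleaning scenario, the action names, and every number below are invented for illustration. The only point is that the action with the best programmed score need not be the action people wanted.

```python
# Hypothetical toy example: every action name and number here is made up.
# Each action maps to (proxy_reward, true_value).
# proxy_reward: what the system was told to maximize ("visible mess removed").
# true_value:   what people actually wanted ("the room is really clean").
ACTIONS = {
    "clean_the_room": (8, 9),
    "hide_mess_under_rug": (10, 2),
    "do_nothing": (0, 0),
}


def best_action(actions, index):
    """Return the action whose score at position `index` is highest."""
    return max(actions, key=lambda name: actions[name][index])


chosen_by_proxy = best_action(ACTIONS, 0)   # optimizes the programmed reward
wanted_by_humans = best_action(ACTIONS, 1)  # optimizes the intended goal

print("Proxy-optimal action:", chosen_by_proxy)
print("Human-preferred action:", wanted_by_humans)
```

In this toy setup the two choices differ, which is the gap that alignment work tries to close.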

Key Facts

- Alignment means the AI's objective should match the intended human objective as closely as possible.

- A simple optimization view is: choose the action a* that maximizes expected utility, a* = argmax_a E[U(a)].

- Reward misspecification happens when the programmed reward R does not equal the true goal G, so maximizing R can reduce G.

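- Feedback can update a model by reducing error. A common form is a gradient descent step: new parameters = old parameters - learning rate × gradient, where the gradient is the gradient of the error with respect to the parameters.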

- Risk can be estimated as expected harm = sum of probability × severity over possible failure modes.

- Human oversight, interpretability, robustness testing, and access limits are common layers of AI safety.
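The three formulas in this list can be sketched in a few lines of Python. The function names and all example values are assumptions chosen for illustration; this is a minimal sketch of the arithmetic, not a full training or risk-assessment method.

```python
def argmax_action(expected_utility):
    """Return the action with the highest expected utility: a* = argmax_a E[U(a)]."""
    return max(expected_utility, key=expected_utility.get)


def gradient_step(params, grads, learning_rate):
    """One update: new parameters = old parameters - learning rate x gradient."""
    return [p - learning_rate * g for p, g in zip(params, grads)]


def expected_harm(failure_modes):
    """Sum probability x severity over (probability, severity) pairs."""
    return sum(p * s for p, s in failure_modes)


# Illustrative values only; none of them come from a real system.
utilities = {"ask_for_clarification": 6.0, "act_immediately": 3.5, "refuse": 1.0}
print("Chosen action:", argmax_action(utilities))

print("Updated parameters:", gradient_step([1.0, -2.0], [0.5, -1.0], learning_rate=0.1))

# A rare but severe failure mode next to a common but mild one.
print("Expected harm:", expected_harm([(0.02, 50), (0.2, 3)]))
```

In the last call, the rare failure mode contributes more to the total (0.02 × 50) than the common one (0.2 × 3), which is why both probability and severity have to be considered.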

Vocabulary

- Alignment: the effort to make an AI system pursue goals and behaviors that match human intentions and values.

- Robustness: the ability of a system to keep working correctly even when inputs are unusual, noisy, or adversarial.

- Reward function: a rule that assigns scores to outcomes or actions so the AI can learn what to optimize.

- Interpretability: the ability to understand how an AI system reaches its decisions or predictions.

- Human oversight: people monitor, review, or approve AI behavior so errors can be caught and corrected.
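One way to picture human oversight combined with limits on system actions is an approval gate. The sketch below is a hypothetical design: the `ProposedAction` class, the `risk_score` field, and both thresholds are assumptions made for illustration, and a real system would need a trustworthy way to produce the risk score.

```python
from dataclasses import dataclass


@dataclass
class ProposedAction:
    description: str
    risk_score: float  # hypothetical score in [0, 1]; higher means riskier


def route_action(action, review_threshold=0.3, block_threshold=0.8):
    """Decide whether an action runs, waits for a person, or is blocked."""
    if action.risk_score >= block_threshold:
        return "block"                  # access limit: never run automatically
    if action.risk_score >= review_threshold:
        return "needs_human_approval"   # human oversight: a person must sign off
    return "auto_approve"               # low risk: allowed to proceed


for proposal in [
    ProposedAction("summarize a public article", 0.05),
    ProposedAction("send an email on the user's behalf", 0.45),
    ProposedAction("delete a production database", 0.95),
]:
    print(proposal.description, "->", route_action(proposal))
```

The thresholds are a design choice: lowering `review_threshold` sends more actions to people, which catches more errors at the cost of more review work.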

Common Mistakes to Avoid

- Assuming high accuracy means the system is safe. A model can perform well on test data but still fail badly in new or high-stakes situations. Safety also depends on robustness, monitoring, and control.

- Treating the reward function as the same thing as the real goal. A simplified score often leaves out important human values or constraints, which can cause the AI to exploit loopholes.

- Ignoring rare failure cases. Low-probability events can still matter if the harm is very large, so safety work must consider both probability and severity.

- Believing human oversight can simply be added at the end. Safety features work best when they are built into data collection, training, evaluation, and deployment from the start.
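The first mistake, equating test accuracy with safety, can be illustrated with a deliberately tiny Python sketch. The threshold rule and both datasets are invented for illustration; the shifted data assumes the true decision boundary has moved, which the fixed rule cannot detect.

```python
def classify(x):
    """Hypothetical toy model: predicts 1 when the input exceeds 0.5."""
    return 1 if x > 0.5 else 0


def accuracy(examples):
    """Fraction of (input, label) pairs the model classifies correctly."""
    correct = sum(1 for x, label in examples if classify(x) == label)
    return correct / len(examples)


# Test data that resembles what the model was built on.
in_distribution = [(0.1, 0), (0.2, 0), (0.8, 1), (0.9, 1)]

# New conditions (assumed): the true boundary has moved to 0.7,
# so inputs between 0.5 and 0.7 now carry label 0.
shifted = [(0.55, 0), (0.6, 0), (0.65, 0), (0.9, 1)]

print("In-distribution accuracy:", accuracy(in_distribution))
print("Shifted accuracy:", accuracy(shifted))
```

The same rule that scores perfectly on familiar data gets most of the shifted examples wrong, which is why robustness testing and monitoring are treated as separate safety layers.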

Practice Questions

1. An AI assistant has three possible actions with expected utilities 4, 9, and 7. Using a* = argmax_a E[U(a)], which action should it choose?

2. A safety team lists three failure modes with probabilities 0.01, 0.05, and 0.10 and severities 100, 8, and 2. Compute the expected harm using expected harm = sum of probability × severity.

3. Explain why maximizing a reward function perfectly can still produce behavior that humans do not want. Use the idea of reward misspecification in your answer.