Context Window Explained

Why AI Forgets, Attention Cost, and Long-Context Strategies

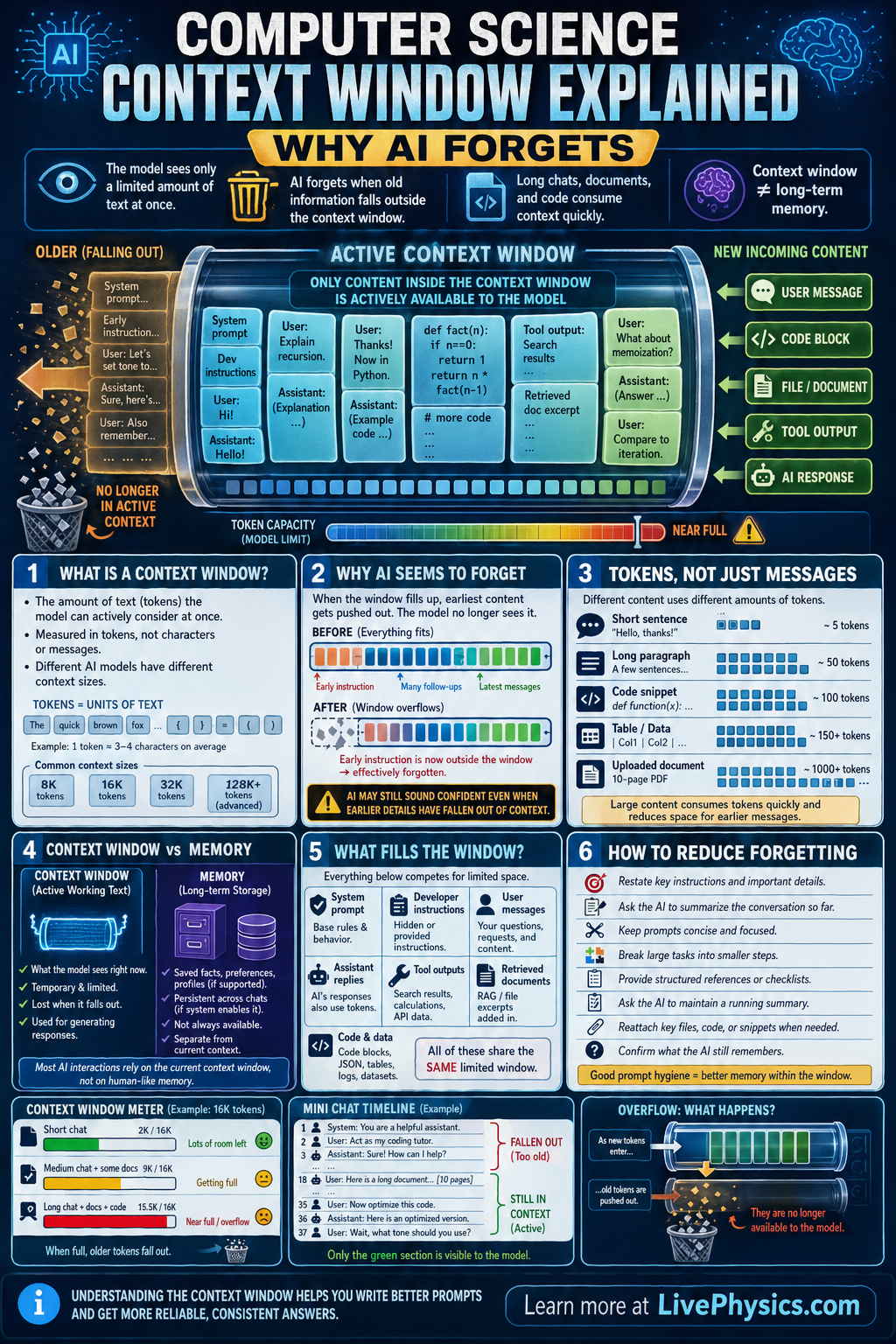

Large language models can seem to remember an entire conversation, but they actually process a limited amount of text at one time. This temporary working space is called the context window. It matters because, beyond what the model learned during training, its answers can draw only on the information currently inside that window, not on every message ever sent. When a conversation grows too long, some earlier material may no longer be available to the model.

A context window is usually measured in tokens, which are small chunks of text such as words, parts of words, punctuation, or code symbols. As new tokens are added, the total must stay within the model's maximum limit. If the limit would be exceeded, the chat application typically removes or summarizes older tokens so the newest input can fit. This is why an AI can appear to forget earlier instructions, names, or details during a long exchange.
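The dropping of older text described above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular chat product works: `rough_token_count` is a hypothetical toy tokenizer that counts each word and each punctuation mark as one token, whereas real tokenizers split text differently, and `fit_to_window` is an assumed helper name.

```python
import re

def rough_token_count(text: str) -> int:
    """Toy tokenizer (an assumption): each word or punctuation mark is one token."""
    return len(re.findall(r"\w+|[^\w\s]", text))

def fit_to_window(messages: list[str], limit: int) -> list[str]:
    """Drop the oldest messages until the total fits within `limit` tokens."""
    kept = list(messages)
    while kept and sum(rough_token_count(m) for m in kept) > limit:
        kept.pop(0)  # the oldest message is discarded first
    return kept

history = [
    "My name is Priya.",                # 5 toy tokens
    "Please answer in bullet points.",  # 6 toy tokens
    "What is a token?",                 # 5 toy tokens
]

# 16 tokens do not fit in a 12-token window, so the first message is lost.
print(fit_to_window(history, limit=12))
# ['Please answer in bullet points.', 'What is a token?']
```

After trimming, the message containing the user's name is gone, so the model has no way to recall it when producing the next reply.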

Key Facts

- Context window = maximum number of tokens the model can use at once.

- Total tokens in use = system tokens + conversation history tokens + user input tokens + output tokens.

- If total tokens > context limit, older content must be dropped, truncated, or compressed.

- Tokens are not the same as words, so 1 word may be 1 token or several tokens.

- Recent text often has the strongest effect because it is still inside the active context window.

- Long prompts, long chat history, and long outputs all consume the same limited token budget.
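The budget arithmetic in the facts above can be written as two small functions. This is a sketch with made-up numbers; the function names `remaining_for_output` and `tokens_over_limit` are illustrative and not part of any library.

```python
def remaining_for_output(context_limit: int, system_tokens: int,
                         history_tokens: int, user_tokens: int) -> int:
    """Tokens left for the reply after the system prompt, history, and new input."""
    used = system_tokens + history_tokens + user_tokens
    return context_limit - used

def tokens_over_limit(total_tokens: int, context_limit: int) -> int:
    """How many tokens must be dropped or compressed to fit (0 if already within the limit)."""
    return max(0, total_tokens - context_limit)

# Example: a 4,000-token window with 300 + 2,500 + 400 tokens already in use.
print(remaining_for_output(4000, 300, 2500, 400))  # 800

# Example: 4,600 tokens of content against a 4,000-token limit.
print(tokens_over_limit(4600, 4000))  # 600
```

A negative result from `remaining_for_output` means the input alone already exceeds the window, so something must be trimmed before any reply can be generated.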

Vocabulary

- Context window: The maximum amount of tokenized text an AI model can consider at one time while generating a response.

- Token: A small unit of text, such as a word, part of a word, punctuation mark, or code symbol, used by the model for processing.

- Truncation: The removal of older or extra text so the total input fits within the model's context limit.

- Conversation history: The earlier messages in a chat that are included in the current context for the model to read.

- Summarization: A shorter replacement for a longer section of text that preserves key ideas while using fewer tokens.

Common Mistakes to Avoid

- Assuming the AI stores the whole chat forever, which is wrong because the model can only use the text that still fits inside its current context window.

- Treating words and tokens as identical, which is wrong because token counts depend on how text is split and can be larger than the word count.

- Ignoring output length, which is wrong because the model's reply also uses part of the same token budget and can reduce room for earlier messages.

- Putting critical instructions only at the start of a very long conversation, which is wrong because those instructions may be pushed out of the active context and no longer guide the model.
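One way to avoid the last mistake is to keep critical instructions pinned so that trimming only ever removes ordinary chat messages. The sketch below assumes a hypothetical `build_context` helper and, for simplicity, counts whitespace-separated words as a stand-in for tokens; a real application would use the model's own tokenizer.

```python
def build_context(pinned: str, history: list[str], limit: int,
                  count=lambda s: len(s.split())) -> list[str]:
    """Always keep `pinned`, then add the most recent messages that still fit."""
    budget = limit - count(pinned)  # reserve room for the pinned instructions
    kept = []
    for message in reversed(history):  # walk from newest to oldest
        cost = count(message)
        if cost > budget:
            break  # this message and everything older is left out
        kept.append(message)
        budget -= cost
    return [pinned] + kept[::-1]  # restore chronological order

pinned = "Always answer in bullet points."  # 5 words
history = [
    "Tell me about cats.",                  # 4 words
    "Now tell me about dogs.",              # 5 words
    "Which one is easier to train?",        # 6 words
]

print(build_context(pinned, history, limit=17))
# ['Always answer in bullet points.', 'Now tell me about dogs.',
#  'Which one is easier to train?']
```

The oldest chat message is dropped, but the formatting instruction survives because it is budgeted first rather than treated as part of the history.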

Practice Questions

1. A model has a context window of 8,000 tokens. The system prompt uses 500 tokens, the conversation history uses 5,200 tokens, and the new user message uses 900 tokens. How many tokens remain available for the model's reply?

2. A chat currently contains 11,500 tokens, but the model can only handle 10,000 tokens. At minimum, how many tokens must be removed or summarized to bring the chat back within the limit if no other changes are made?

3. A student gives important formatting instructions at the beginning of a long conversation, and later the AI stops following them. Explain how the context window can cause this behavior and give one practical fix.