Open Source vs Closed AI Models

Trade-offs in Access, Cost, Safety, and Customization

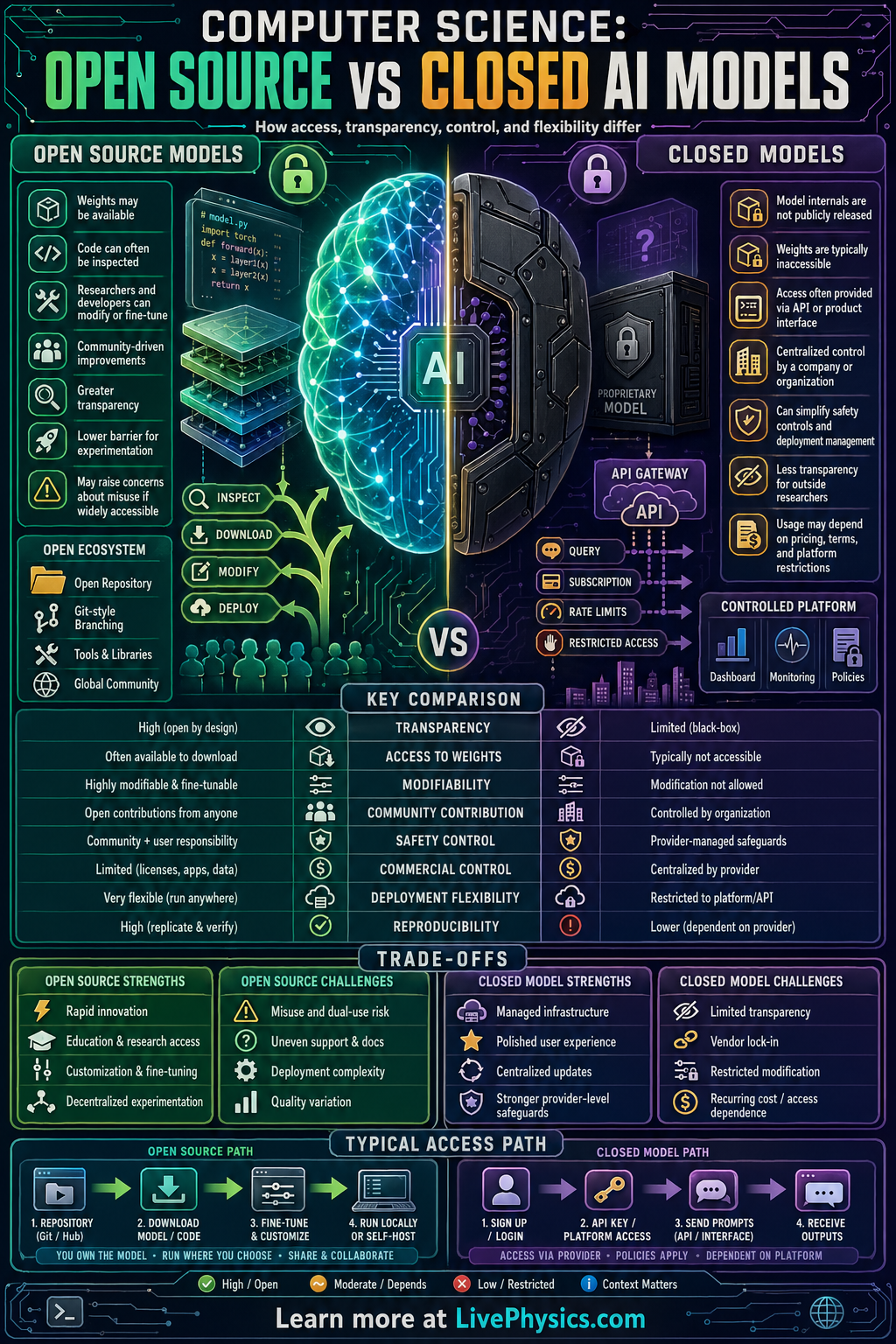

AI models can be shared in very different ways, and that choice affects who can study, use, and improve them. Open source AI models make important parts of the system available to the public, such as model weights, code, or training details. Closed AI models keep those parts private and usually provide access only through an app or API. Understanding this difference matters because it shapes innovation, safety, cost, and control.

In practice, open source models let researchers and developers inspect behavior, fine-tune systems, and run them on their own hardware. Closed models often offer polished performance, centralized updates, and tighter control over misuse, but they limit transparency and customization. Neither approach is automatically better in every situation, because each involves trade-offs between openness, security, speed of development, and business strategy. Comparing the two helps students see how technical design choices connect to ethics, economics, and real-world computing.

Key Facts

- Open source access often includes model weights, source code, and documentation.

- Closed model access is commonly limited to an API, so users can control only what the API permits.

- Fine-tuning turns a base model into a task-specific model. Schematically: new_model = base_model + task_training.

- Inference cost can be estimated as total_cost = cost_per_request × number_of_requests.

- If a model runs locally, latency is approximately processing_time + local_input_output_time.

- Transparency is generally higher when architecture, data sources, and evaluation methods are publicly documented.
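The cost and latency formulas above can be sketched in a few lines of Python. This is a minimal illustration, not a pricing tool: the function names and the numbers are assumptions chosen for the example, and real costs often depend on other factors such as request size.

```python
def total_cost(cost_per_request: float, number_of_requests: int) -> float:
    """Estimated inference cost: cost_per_request x number_of_requests."""
    return cost_per_request * number_of_requests


def local_latency(processing_time: float, local_io_time: float) -> float:
    """Approximate latency in seconds for a model that runs locally."""
    return processing_time + local_io_time


# Hypothetical values, chosen only for illustration.
cost = total_cost(cost_per_request=0.001, number_of_requests=10_000)
latency = local_latency(processing_time=0.5, local_io_time=0.1)

print(f"Estimated cost: ${cost:.2f}")
print(f"Estimated latency: {latency:.2f} s")
```

Changing the inputs shows how quickly per-request pricing adds up as usage grows, which is one reason cost appears among the trade-offs.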

Vocabulary

- Open source model: An AI model released with publicly available components such as code, weights, or documentation so others can inspect and modify it.

- Closed model: An AI model whose internal details are kept private and that is usually accessed only through a company-controlled service.

- Model weights: The learned numerical parameters inside a neural network that determine how it produces outputs.

- API: An application programming interface, a set of rules that lets one program send requests to another program or service.

- Fine-tuning: Additional training on a smaller dataset to adapt a general model to a specific task.
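To make "model weights" and "fine-tuning" concrete, here is a toy sketch. It assumes a model with a single weight and a tiny made-up dataset, which is far simpler than any real neural network. The `predict` function plays the role of the code, the number stored in `weight` plays the role of the learned weights, and `fine_tune` is the additional training. All names and values are hypothetical.

```python
def predict(weight: float, x: float) -> float:
    """The code: a fixed rule that turns an input into an output."""
    return weight * x


def fine_tune(weight: float, examples, learning_rate: float = 0.01, epochs: int = 200) -> float:
    """Additional training: nudge the weight to fit a small task dataset."""
    for _ in range(epochs):
        for x, target in examples:
            error = predict(weight, x) - target
            # Gradient step for the squared error 0.5 * error**2.
            weight -= learning_rate * error * x
    return weight


base_weight = 1.0  # hypothetical weight of a "general" base model
task_examples = [(1.0, 3.0), (2.0, 6.0), (3.0, 9.0)]  # task: output = 3 * input

tuned_weight = fine_tune(base_weight, task_examples)
print(f"base weight: {base_weight:.2f}, tuned weight: {tuned_weight:.2f}")
```

The code in `predict` never changes. Only the stored number does, which is why having access to the weights is what makes this kind of adaptation possible.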

Common Mistakes to Avoid

- Assuming open source means completely free to use, which is wrong because some open models still have license limits, hardware costs, or usage restrictions.

- Assuming closed models are always better, which is wrong because performance depends on the task and open models can be highly competitive after fine-tuning.

- Confusing source code with model weights, which is wrong because code shows how the model runs while weights store what the model has learned.

- Thinking transparency guarantees safety, which is wrong because public access can help auditing but does not automatically prevent harmful outputs or misuse.

Practice Questions

1. A company makes 25,000 API calls to a closed model at $0.002 per request. Use total_cost = cost_per_request × number_of_requests to find the total inference cost.

2. A student runs an open model locally. If processing time is 0.8 s and local input/output time is 0.2 s, use latency = processing_time + local_input_output_time to find the total latency per request.

3. A hospital needs strong privacy control, local deployment, and the ability to inspect model behavior. Based on these needs, explain whether an open source or closed model is more suitable and give two reasons.