How LLMs Are Trained

Pretraining, Fine-Tuning, and RLHF

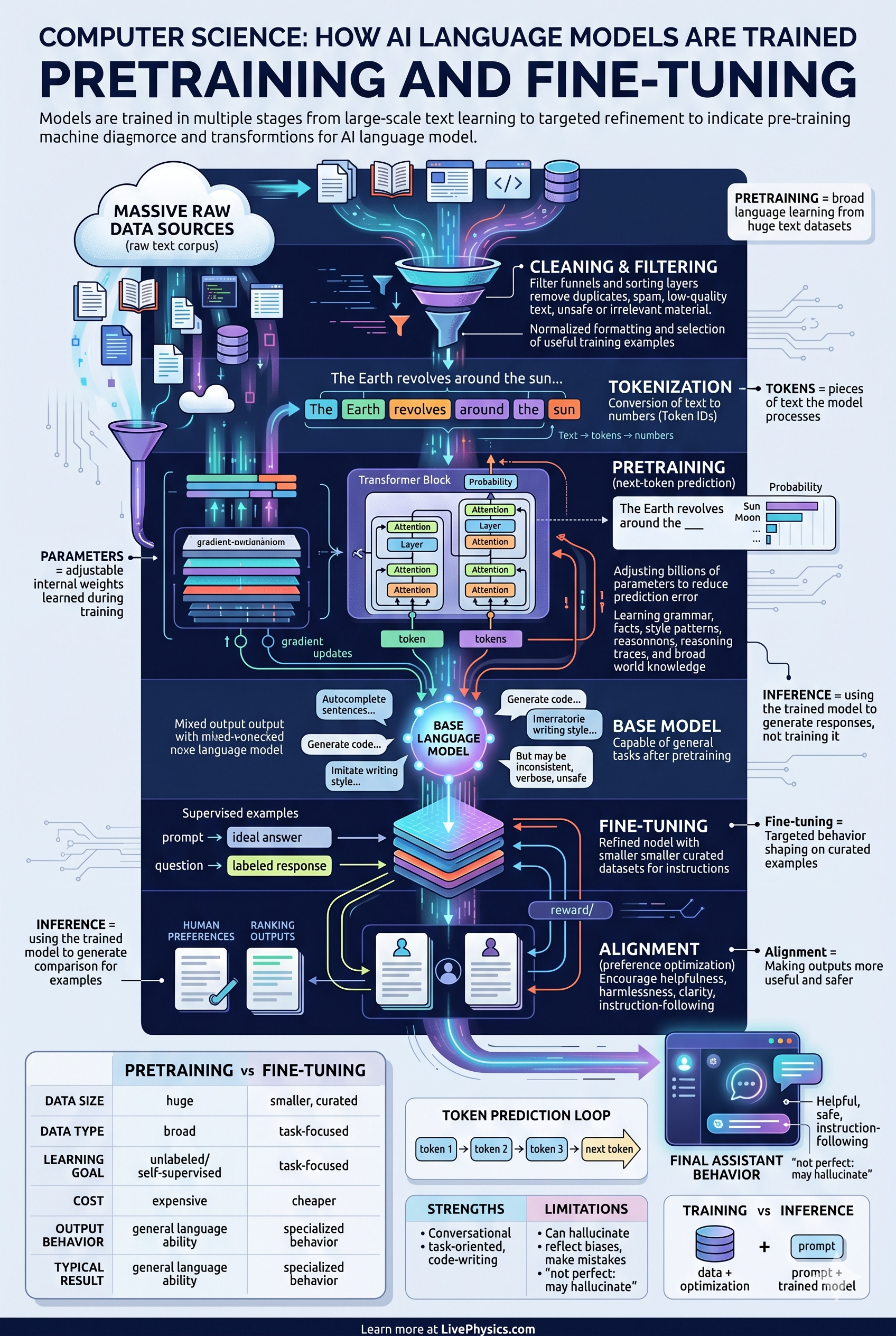

Training a large language model happens in three distinct phases. Pretraining exposes the model to massive amounts of text and teaches it to predict the next token given what came before. The result is a base model that can complete text fluently but does not yet follow instructions or behave safely. Fine-tuning then adapts the base model on curated examples of desired behavior, making it more useful for specific tasks. The final phase, Reinforcement Learning from Human Feedback (RLHF), uses human rankings to train a reward model, then uses that reward signal to further shape the LLM through reinforcement learning so its outputs align with human preferences.
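As a rough illustration of the next-token objective described above, the sketch below trains a count-based bigram model on a six-word corpus and measures its average cross-entropy. The bigram model is a stand-in chosen for brevity: a real LLM uses a neural network conditioned on all previous tokens, not just the last one. The function names `train_bigram` and `cross_entropy` are made up for this example.

```python
import math
from collections import Counter, defaultdict

def train_bigram(tokens):
    """Estimate P(next | prev) from relative frequencies of adjacent pairs."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return {
        prev: {tok: c / sum(ctr.values()) for tok, c in ctr.items()}
        for prev, ctr in counts.items()
    }

def cross_entropy(model, tokens):
    """Average of -log P(next token | previous token) over the sequence."""
    losses = [-math.log(model[prev][nxt]) for prev, nxt in zip(tokens, tokens[1:])]
    return sum(losses) / len(losses)

corpus = "the cat sat on the mat".split()
model = train_bigram(corpus)
loss = cross_entropy(model, corpus)
print(round(loss, 4))  # 0.2773
```

The only uncertainty in this toy corpus is what follows "the" ("cat" or "mat", each with probability 0.5), so two of the five predictions cost log 2 and the rest cost nothing. Pretraining drives this same quantity down, but over a vastly larger corpus and with a far more expressive model.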

Understanding these stages clarifies why models behave differently from each other. Two models with the same architecture can produce very different outputs depending on their pretraining data, fine-tuning examples, and whether RLHF was applied. It also explains common behaviors: base models are verbose and prone to continuing text in unexpected directions, while RLHF-tuned assistants tend to give concise, helpful answers and decline problematic requests.

Key Facts

- Pretraining: model learns to predict the next token from internet-scale text corpora.

- Objective: minimize cross-entropy loss, i.e. the negative log-probability of the correct next token: -log P(next token | all previous tokens).

- Base model: fluent but not instruction-following; useful mainly for text completion, including few-shot prompts phrased as text to continue.

- Supervised fine-tuning (SFT): further training on curated prompt-response pairs.

- Reward model: trained on human comparisons (A is better than B) to score outputs.

- RLHF: uses the reward model's score as the reward signal to update the policy (the LLM) via PPO or a similar RL algorithm.

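- Constitutional AI and DPO are alternatives to standard RLHF: Constitutional AI replaces much of the human feedback with AI feedback guided by written principles, and DPO optimizes directly on preference pairs without a separate reward model.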
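The reward-model fact above ("A is better than B") can be made concrete with a small sketch. It assumes a common pairwise formulation, the Bradley-Terry style loss -log sigmoid(r_chosen - r_rejected), and a hypothetical linear reward model over two hand-made features. Real reward models are neural networks that score full text; the features, data, and function names here are invented for illustration.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def pairwise_loss(r_chosen, r_rejected):
    """Low when the chosen response scores higher than the rejected one."""
    return -math.log(sigmoid(r_chosen - r_rejected))

def reward(weights, features):
    """Hypothetical linear reward model: dot product of weights and features."""
    return sum(w * f for w, f in zip(weights, features))

def train_reward_model(comparisons, n_features, lr=0.5, steps=200):
    """Plain gradient descent on the pairwise loss."""
    weights = [0.0] * n_features
    for _ in range(steps):
        for chosen, rejected in comparisons:
            margin = reward(weights, chosen) - reward(weights, rejected)
            # Gradient of -log sigmoid(margin) w.r.t. weights is
            # -(1 - sigmoid(margin)) * (chosen - rejected); step against it.
            scale = 1.0 - sigmoid(margin)
            for i in range(n_features):
                weights[i] += lr * scale * (chosen[i] - rejected[i])
    return weights

# Assumed toy features per response: [is_helpful, is_rude]
comparisons = [
    ([1.0, 0.0], [0.0, 0.0]),  # helpful preferred over unhelpful
    ([1.0, 0.0], [1.0, 1.0]),  # polite preferred over rude
    ([0.0, 0.0], [0.0, 1.0]),  # neutral preferred over rude
]
weights = train_reward_model(comparisons, n_features=2)
print(reward(weights, [1.0, 0.0]) > reward(weights, [1.0, 1.0]))  # True
```

After training, the weight on the helpful feature is positive and the weight on the rude feature is negative, so the model scores unseen responses in a way that agrees with the comparisons it was shown. That score is what the RLHF step then uses as its reward.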

Vocabulary

- Pretraining: The initial large-scale training phase where the model learns language structure from raw text by predicting the next token.

- Base model: A model that has been pretrained but not yet fine-tuned or aligned; it completes text but does not follow instructions.

- Fine-tuning: Additional training on a smaller, curated dataset to adapt the base model toward a specific behavior or task.

- RLHF: Reinforcement Learning from Human Feedback - a technique that uses human preference rankings to train a reward model used to improve the LLM.

- Reward model: A separate neural network trained on human comparisons that scores how good an LLM output is.

Common Mistakes to Avoid

- Thinking pretraining and fine-tuning are the same step - they are different phases with different objectives, dataset sizes, and compute requirements.

- Assuming a larger base model always gives better fine-tuned results - data quality and fine-tuning process matter at least as much as model size.

- Confusing RLHF with supervised learning - RLHF involves a reward signal and a policy update loop, not direct label supervision.

- Believing fine-tuning is where a model acquires its knowledge - factual knowledge mostly comes from pretraining; fine-tuning mainly changes how the model uses and presents what it already knows.
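To make the difference between supervised learning and RLHF in the list above more tangible, here is a minimal sketch on a toy "policy" that chooses among three candidate responses. It deliberately uses plain REINFORCE with an expected-reward baseline rather than PPO, which adds clipping and is usually paired with a KL penalty; the reward values and function names are made up for illustration.

```python
import math
import random

def softmax(logits):
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def sft_step(logits, target, lr=0.5):
    """Supervised update: a label says which response is correct."""
    probs = softmax(logits)
    return [l + lr * ((1.0 if i == target else 0.0) - p)
            for i, (l, p) in enumerate(zip(logits, probs))]

def reinforce_step(logits, reward_fn, rng, lr=0.5):
    """RL update: sample a response, score it, reinforce by its advantage."""
    probs = softmax(logits)
    action = rng.choices(range(len(logits)), weights=probs)[0]
    baseline = sum(p * reward_fn(i) for i, p in enumerate(probs))
    advantage = reward_fn(action) - baseline
    return [l + lr * advantage * ((1.0 if i == action else 0.0) - p)
            for i, (l, p) in enumerate(zip(logits, probs))]

rewards = [0.1, 1.0, -0.5]  # made-up reward-model scores per response
rng = random.Random(0)

rl_logits = [0.0, 0.0, 0.0]
for _ in range(300):
    rl_logits = reinforce_step(rl_logits, lambda i: rewards[i], rng)
rl_probs = softmax(rl_logits)

sft_logits = [0.0, 0.0, 0.0]
for _ in range(50):
    sft_logits = sft_step(sft_logits, target=1)
sft_probs = softmax(sft_logits)

print(rl_probs.index(max(rl_probs)), sft_probs.index(max(sft_probs)))  # 1 1
```

Both loops end up favoring response 1, but they get there differently: `sft_step` is told the right answer directly, while `reinforce_step` never sees a label and only learns from the score attached to whatever it happened to sample.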

Practice Questions

1. Explain in your own words what happens during pretraining and why the result is called a base model.

2. Why is a base model generally not used directly as an assistant, and what phases address this?

3. Describe how a reward model is trained and how it is used in RLHF.