Data Privacy in AI Systems

Training Data, Memorization, Inference Privacy, and Regulations

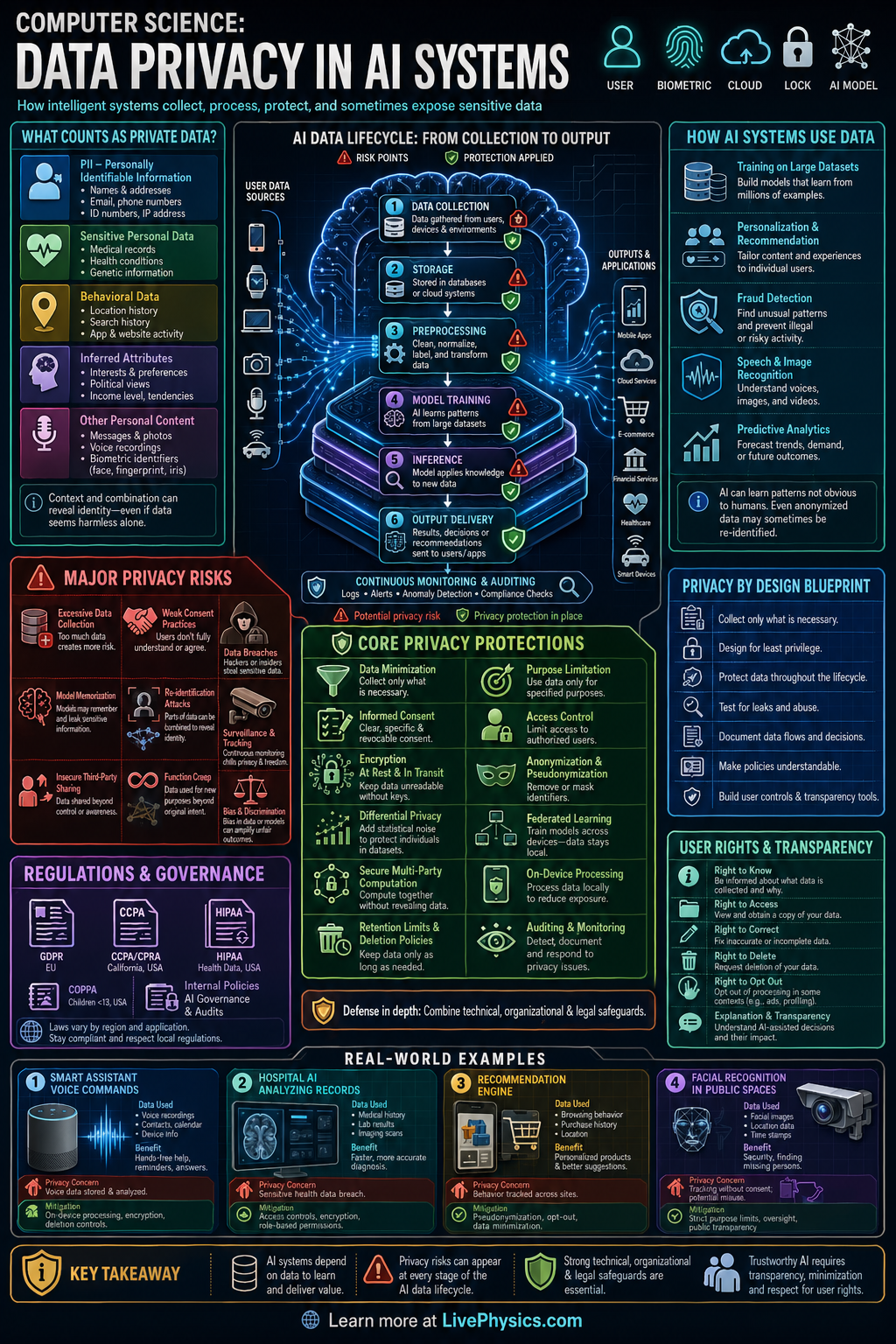

Data privacy in AI systems is the practice of protecting personal and sensitive information while still allowing models to learn from data. It matters because AI often uses large datasets that may include names, locations, health records, messages, or behavior patterns. If privacy is weak, people can be identified, tracked, or harmed even when the system seems useful. Strong privacy design helps build trust, meet legal requirements, and reduce security risks.

AI privacy works through a combination of technical methods and policy controls. Data can be minimized, encrypted, anonymized, masked, or processed with methods such as differential privacy and federated learning. Access controls, audit logs, and consent rules also limit who can use data and for what purpose. A good AI system protects data during collection, storage, training, deployment, and deletion.
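Two of the methods above, minimization and masking, can be sketched in a few lines of Python. This is a minimal illustration and not a complete privacy solution. The field names, the `ALLOWED_FIELDS` allow-list, and the helper names are hypothetical, and the keyed hash is pseudonymization, not anonymization, because anyone holding the key can recompute the mapping.

```python
import hashlib
import hmac

# Hypothetical allow-list: the only fields this task is assumed to need.
ALLOWED_FIELDS = {"user_id", "age_band", "region"}


def minimize(record: dict) -> dict:
    """Keep only allow-listed fields (data minimization)."""
    return {k: v for k, v in record.items() if k in ALLOWED_FIELDS}


def pseudonymize(value: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed hash (HMAC-SHA256).

    The same input and key always give the same token, so records can
    still be joined, but the raw identifier is not stored.
    """
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def prepare(record: dict, secret_key: bytes) -> dict:
    """Drop unneeded fields, then mask the remaining direct identifier."""
    cleaned = minimize(record)
    if "user_id" in cleaned:
        cleaned["user_id"] = pseudonymize(str(cleaned["user_id"]), secret_key)
    return cleaned
```

For example, `prepare({"user_id": "u123", "name": "Ada", "region": "EU"}, key)` would drop `name` and replace `user_id` with a 64-character hex token. The key itself then becomes sensitive and needs its own access controls.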

Key Facts

- Data minimization means collecting only the data needed for a specific task.

- Encryption protects stored or transmitted data; a simple idea is ciphertext = Encrypt(plaintext, key).

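- Differential privacy adds calibrated noise so that any one person's data has a limited effect on the output. Informally, $M(D) \approx M(D')$ for datasets $D$ and $D'$ that differ in one person's record; formally, $\Pr[M(D) \in S] \le e^{\varepsilon} \Pr[M(D') \in S]$ for every set of outputs $S$.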

- Federated learning keeps raw data on local devices and sends model updates instead of full datasets.

- Re-identification risk can remain after anonymization if multiple datasets are linked together.

- Privacy risk often grows with more access, longer retention time, and weaker governance controls.
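As one illustration of the differential privacy fact above, the sketch below releases a noisy count using the Laplace mechanism. The function names and the example data are hypothetical. It assumes a counting query, where adding or removing one person changes the result by at most 1, so the noise scale is 1 / epsilon. It is a teaching sketch and is not hardened for production use, where a vetted differential privacy library would be the safer choice.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(records, predicate, epsilon: float, rng=None) -> float:
    """Return a count with Laplace noise of scale 1 / epsilon.

    Smaller epsilon means more noise and stronger privacy.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    rng = rng or random.Random()
    true_count = sum(1 for r in records if predicate(r))
    # Sensitivity of a counting query is 1, so scale = 1 / epsilon.
    return true_count + laplace_noise(1.0 / epsilon, rng)


# Hypothetical usage: how many people are 40 or older?
ages = [25, 34, 41, 52, 67, 70]
noisy = private_count(ages, lambda a: a >= 40, epsilon=1.0)
```

Each call returns a different value near the true count of 4. Repeating the query many times and averaging would wear down the protection, which is why real deployments track a total privacy budget.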

Vocabulary

- Personal data: Any information that can identify a person directly or indirectly, such as a name, ID number, or location history.

- Anonymization: The process of removing identifying details so data cannot reasonably be linked back to a specific person.

- Differential privacy: A privacy method that adds carefully chosen randomness to data or results to hide the contribution of any one individual.

- Federated learning: A machine learning approach where models are trained across many devices or servers without moving raw user data to one central place.

- Access control: Rules and tools that determine which users or systems are allowed to view or use certain data.
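The federated learning definition can be made concrete with a toy version of federated averaging. In this sketch each client fits a line y = w*x + b on its own data and returns only the updated weights, and the server averages them. The function names, the tiny linear model, and the learning rate are assumptions chosen for illustration. Real systems add protections such as secure aggregation that are not shown here.

```python
def local_update(weights, local_data, lr=0.05):
    """One gradient-descent step on a client's private (x, y) pairs.

    Only the updated weights leave the client, never local_data.
    """
    w, b = weights
    n = len(local_data)
    grad_w = sum(2 * (w * x + b - y) * x for x, y in local_data) / n
    grad_b = sum(2 * (w * x + b - y) for x, y in local_data) / n
    return (w - lr * grad_w, b - lr * grad_b)


def federated_average(client_weights, client_sizes):
    """Average client models, weighted by each client's number of examples."""
    total = sum(client_sizes)
    w = sum(cw[0] * n for cw, n in zip(client_weights, client_sizes)) / total
    b = sum(cw[1] * n for cw, n in zip(client_weights, client_sizes)) / total
    return (w, b)


def train(clients, rounds=500, lr=0.05):
    """Run several rounds: broadcast the model, collect updates, average."""
    global_weights = (0.0, 0.0)
    sizes = [len(data) for data in clients]
    for _ in range(rounds):
        updates = [local_update(global_weights, data, lr) for data in clients]
        global_weights = federated_average(updates, sizes)
    return global_weights
```

With two hypothetical clients holding points from the line y = 2x + 1, `train([[(0, 1), (1, 3)], [(2, 5), (3, 7), (4, 9)]])` converges toward w = 2 and b = 1 even though neither client's raw points are sent to the server.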

Common Mistakes to Avoid

- Assuming anonymized data is always safe. Linked datasets can sometimes reveal identities again, so students should remember that removing names alone does not eliminate privacy risk.

- Treating encryption as complete privacy protection. Encrypted data can still be exposed after decryption or through poor key management, so privacy also depends on access limits, retention policies, and secure system design.

- Collecting extra data just in case it becomes useful later. Unnecessary data increases legal, ethical, and security risk, and good privacy practice starts with collecting the minimum needed.

- Ignoring the full data lifecycle. Privacy problems can happen during collection, storage, training, sharing, or deletion, so students should evaluate protection at every stage of the AI pipeline.
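The first mistake can be checked with a simple measurement. The sketch below computes how many records share each combination of quasi-identifiers (fields that are not names but can still narrow down a person). The records, field names, and helper names are hypothetical. A smallest group size of 1 means at least one person is unique on those fields and could be re-identified if another dataset contains the same fields alongside names.

```python
from collections import Counter


def _key(record, quasi_identifiers):
    """Tuple of a record's quasi-identifier values."""
    return tuple(record[q] for q in quasi_identifiers)


def k_anonymity(records, quasi_identifiers):
    """Size of the smallest group sharing the same quasi-identifier values."""
    groups = Counter(_key(r, quasi_identifiers) for r in records)
    return min(groups.values()) if groups else 0


def unique_records(records, quasi_identifiers):
    """Records that are the only member of their quasi-identifier group."""
    groups = Counter(_key(r, quasi_identifiers) for r in records)
    return [r for r in records if groups[_key(r, quasi_identifiers)] == 1]


# Hypothetical "anonymized" records: names removed, other fields kept.
records = [
    {"zip": "02139", "birth_year": 1980, "sex": "F", "diagnosis": "A"},
    {"zip": "02139", "birth_year": 1980, "sex": "F", "diagnosis": "B"},
    {"zip": "02139", "birth_year": 1975, "sex": "M", "diagnosis": "C"},
]
k = k_anonymity(records, ["zip", "birth_year", "sex"])
```

Here `k` is 1 because the third record is the only one with its combination of ZIP code, birth year, and sex, even though no names are present.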

Practice Questions

1. A company collects 12 user fields for an AI model, but analysis shows only 5 fields are needed for accurate predictions. By what percentage can the collected fields be reduced if the company follows data minimization?

2. An AI team stores 8000 user records. After applying a retention policy, 35% of the records are deleted. How many records remain?

3. A hospital wants to train an AI model on patient devices without sending raw medical records to a central server. Explain why federated learning may improve privacy and name one privacy risk that could still remain.